Intel predicts computers that can read your mind

Event wraps up with Intel's take on context-aware computing

Justin Ratner, Intel's chief technology officer and freestyle futurologist, whipped out his crystal ball on the final day of the Intel Developer Forum (IDF) and predicted a future of pervasive context-aware computing.

In his closing keynote, Ratner painted a picture of all-aware computers and devices that know not only where you are, but what you're doing, feeling and even thinking.

Practical demos included a prototype destination application from travel guide outfit Fodor's. It was the usual restaurant-touting, point-of-interest-hawking application with the added twist of constantly learning your preferences in terms of factors such as cuisine and price.

The application also had a snazzy auto-blogging feature that combined any photos taken with auto-generated commentary on your whereabouts and activities.

It's all about context

Next up was Lama Nachman, Intel's context-computing specialist. Nachman explained how an array of familiar sensors such as accelerometers, light sensors and GPS could be used to build up a detailed picture of behaviour, location and more.

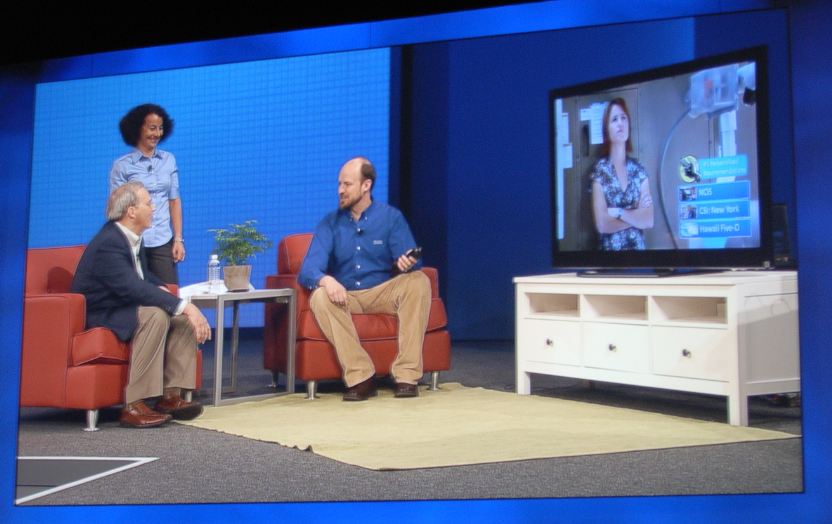

Much of the discussion was theoretical, but tangible examples included a TV remote that detects the user's identity based on how the device is wielded. From there, the system can present the user with preferred viewing options. Another near-future example involved animated avatars depicting an individual's status. Think of them as a replacement for those happy headshots in your Facebook feed.

Needless to say, neither choosing a restaurant nor letting friends and family know you're out jogging or in a meeting is terribly profound. Where things get really interesting is when you begin to build up a more detailed picture over time.

Computerised surveillance

This is known as context aggregation, and the analogy here is personal finance. It's easy enough to be aware of each individual financial transaction you perform. But keeping track of your broader financial behaviour is much trickier. Likewise, with context aggregation all kinds of short-, medium- and long-term behaviour patterns can be tracked.

Forget kidding yourself over how many hours of work you've done, how much exercise you've taken or how much quality time you've spent with the sprogs. In the future, it will all be logged in cold, hard numbers.

Of course, the ultimate end game here is direct reading of your thoughts by computers. Ratner duly delivered with a video showing research into just that, conducted by Carnegie Mellon University and supported by Intel. In truth, the video didn't reveal much beyond a system that can distinguish whether a human is thinking of one of two words, but the implications are still, well, mind-boggling.

As for when we can expect context-aware computing to really take off, Ratner could not provide specifics. But as with all things computer-related, the safe bet is sooner than you think.

Technology and cars. Increasingly the twain shall meet. Which is handy, because Jeremy is addicted to both. Long-time tech journalist, former editor of iCar magazine and incumbent car guru for T3 magazine, Jeremy reckons in-car technology is about to go thermonuclear. No, not exploding cars. That would be silly. And dangerous. But rather an explosive period of unprecedented innovation. Enjoy the ride.