Nvidia sees a future of self-driving cars, and it's got some ideas on how to get there

With the right tools...

CES is always about discussing what's possible, even if it's not a product that'll be on the market this year, the next, or even the year after that.

Nvidia followed in the footsteps of many at its CES 2015 press conference, discussing self-aware cars and the tools it's using to build them.

The key to creating self-aware (and eventually, self-driving) cars, said CEO Jen-Hsun Huang, is heavily updatable software. Cameras, he said, are essential to developing autonomous roadsters, and for a car to become truly self-aware, every camera needs to be connected to a central supercomputer.

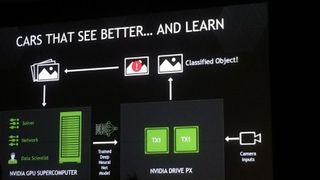

Luckily, Nvidia took it upon itself to develop the Drive PX, a 2.3-teraflop auto-pilot car computer equipped with two Tegra X1 processors and 12 camera inputs.

Deep learning

The Drive PX introduces two new technologies: Deep Neural Network Computer Vision and Surround Vision, the former being by far the more interesting.

Deep learning is not a new concept, though its development is still in the nascent stages. Neural networks running on GPUs essentially learn to recognize what's going on in the world around them. According to Huang, deep learning and neural nets help a car recognize objects in images, like street signs or pedestrians, on its own.

This, Huang said, is a computationally intensive task, so a heavy-duty processor is needed to complete it. Being able to recognize and understand context is vital for a self-aware vehicle, he added, and a car can learn to classify objects over time.


Clearly something is needed to power this computationally intensive neural network, and that's where the Tegra X1, with its 256 GPU cores, comes in.

Ricky Hudi, executive vice president of electronics development at Audi, revealed the car company is Nvidia's exclusive partner for autonomous driving.


Michelle was previously a news editor at TechRadar, leading consumer tech news and reviews. Michelle is now a Content Strategist at Facebook. A versatile, highly effective content writer and skilled editor with a keen eye for detail, Michelle is a collaborative problem solver and covered everything from smartwatches and microprocessors to VR and self-driving cars.
