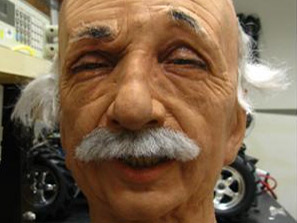

Robot Einstein learns to smile

Can't be long before it achieves singularity and enslaves mankind

A robotic Einstein has learned to do what Data and the Terminator never managed: crack a smile.

Researchers at the University of California, San Diego have taught their ultra-realistic brain-bot to grin, frown and make other complex facial expressions.

This could be the first step to teaching robots how to walk, talk, move and even think on their own.

Body babbling

Psychologists believe that infants learn to control their bodies through exploratory movements, including babbling to learn to speak. "We applied this same idea to the problem of a robot learning to make realistic facial expressions," said Javier Movellan, director of UCSD's Machine Perception Laboratory.

The San Diego researchers directed the £50,000 Einstein robot head to twist and turn in all directions, in a process called "body babbling." The robot could see itself in a mirror and analyse its own expression using software called CERT (Computer Expression Recognition Toolbox), providing data for machine-learning algorithms to map facial expressions to the movements of its muscle motors.

Once the robot had learned the relationship between facial expressions and the muscle movements required to make them, it could produce facial expressions it had never encountered.
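For readers who want a feel for the idea, here is a minimal Python sketch of body babbling. It is not the UCSD team's code or method: the `simulated_face` function is a made-up stand-in for the mirror and the CERT software, the servo and feature counts are toy values, and the nearest-neighbour lookup is a deliberately simple substitute for whatever machine-learning algorithms the researchers actually used.

```python
import random

NUM_SERVOS = 4     # toy value; the article's robot head has about 30
NUM_FEATURES = 3   # toy number of expression measurements (assumption)


def simulated_face(servos, weights):
    """Hypothetical stand-in for the mirror plus expression-recognition step.

    Maps servo positions to expression measurements with a fixed linear rule.
    A real face and a real recognition system would be far messier.
    """
    return [sum(w * s for w, s in zip(row, servos)) for row in weights]


def babble(num_trials, weights, rng):
    """Issue random servo commands and record (expression, command) pairs."""
    samples = []
    for _ in range(num_trials):
        command = [rng.uniform(0.0, 1.0) for _ in range(NUM_SERVOS)]
        expression = simulated_face(command, weights)
        samples.append((expression, command))
    return samples


def squared_distance(a, b):
    """Sum of squared differences between two equal-length lists."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def command_for(target_expression, samples):
    """Return the babbled command whose observed expression was closest."""
    best = min(samples, key=lambda s: squared_distance(s[0], target_expression))
    return best[1]


rng = random.Random(0)  # fixed seed so repeated runs give the same result
weights = [[rng.uniform(-1.0, 1.0) for _ in range(NUM_SERVOS)]
           for _ in range(NUM_FEATURES)]

# Babbling phase: random movements, each one observed "in the mirror".
samples = babble(2000, weights, rng)

# Ask for an expression that was never babbled directly.
target = simulated_face([0.2, 0.8, 0.5, 0.1], weights)
command = command_for(target, samples)
achieved = simulated_face(command, weights)
error = squared_distance(achieved, target)
print("squared error between target and achieved expression:", error)
```

The point of the sketch is the order of events: the robot moves at random first, watches what happens, and only then works backwards from a desired expression to the motor settings. More babbling trials give the lookup more examples to choose from.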

"As far as we know, no other research group has used machine learning to teach a robot to make realistic facial expressions," said computer science Ph.D. student Tingfan Wu.

The Einstein robot head has about 30 facial muscles, each moved by a tiny servo motor connected to the muscle by a string. Until now, a trained person had to manually set up the servos to pull in the right combinations and make specific facial expressions.