Meet the awesome tech that will soon make your smartphone smarter

It's all in the context

Context is everything. Your smartphone knows where you are and what direction you're facing, but that's about it. What if it knew your context?

Not just where you are, but what you're doing, who you're with – and what you're likely to do next. It's called contextual computing, and it's going to make your smartphone into a much better personal assistant.

With context, a smartphone, tablet, wearable device or headset could detect when you're driving and read your text messages out loud. It could detect when you're low on battery and start preserving power. It could even alert you when someone really important to you is suddenly nearby.

Contextual computing is about improving interaction between human and computer – and it's about computers becoming intelligent.

Adapting behaviour

"It's the ability for a device, object or service to be aware of not only the user's surroundings but about the user, their views, behaviours and their interests," says Kevin Curran, senior member at the Institute of Electrical and Electronics Engineers (IEEE) and Reader in Computer Science at the University of Ulster. "They adapt their functionality and behaviour to the user and his or her situation."

Defining exactly what we mean by 'context' is tricky, but it's generally agreed that it includes the user's location, environment and orientation, their emotional state, the task they're engaged in, the date and time, and the people and objects in their environment. Your phone can probably calculate some of those already.

Your next smartphone

Contextual computing isn't so much about your current smartphone; it's about your next smartphone. Producing context can be based on rules and the sensor inputs that now fill our phones, but it's also about machines making assumptions and anticipating our every whim. It's the missing link.
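To make the "rules and sensor inputs" idea concrete, here is a minimal Python sketch of how a phone might turn a few readings into actions. Everything in it is an assumption for illustration: the `SensorSnapshot` fields, the `infer_actions` function, the action names and the thresholds are hypothetical, not taken from any real phone or app.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SensorSnapshot:
    """Hypothetical bundle of readings a phone might expose."""
    speed_kmh: float        # estimated from GPS
    battery_percent: int    # from the battery gauge
    car_bluetooth: bool     # paired with an in-car system?


def infer_actions(s: SensorSnapshot) -> List[str]:
    """Apply simple hand-written rules; the thresholds are illustrative guesses."""
    actions = []
    # Moving fast while connected to the car suggests the user is driving.
    if s.car_bluetooth and s.speed_kmh > 20:
        actions.append("read_messages_aloud")
    # A low battery suggests switching to a power-saving mode.
    if s.battery_percent < 15:
        actions.append("save_power")
    return actions


print(infer_actions(SensorSnapshot(speed_kmh=60, battery_percent=10, car_bluetooth=True)))
```

Hand-written rules like these only cover the first half of the idea; the "making assumptions" half would need the phone to learn such thresholds from your behaviour rather than have them hard-coded.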

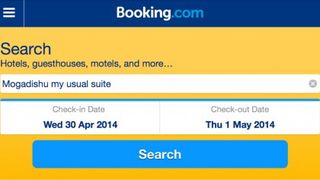

"Instead of the user having to go and look for something like hotels, this device would already know what kind of hotel you are looking for by using the information gathered on what hotels they have picked in the past and what facilities they used," says Curran.

If you always stay at hotels with a swimming pool or a spa, that's the context within which the phone will help you search. But it could go further; your phone will know when you're due to arrive at the hotel, and if you're in the car, talk you in. If you're on the train, it will have downloaded your favourite podcasts and turned the ringer off if you're sat in the silent carriage.

Calculate the context

There are already some apps that try to make use of the sensors on smartphones to calculate some context. CallWho uses your call history to estimate who you would like to call; pull your phone out of your pocket for your daily phone call with your wife and her face will be at the top of the list. It also makes pictures of your contacts bigger; after all, who ever thought an A-Z list of contacts was pleasant to scroll through?
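How an app might estimate who you want to call from your call history can be sketched in a few lines of Python. This is not CallWho's actual method, which the app's makers have not described here; it is one plausible approach that simply counts past calls made around the current hour. The `rank_contacts` function, the one-hour window and the sample data are all hypothetical.

```python
from collections import Counter
from typing import List, Tuple


def rank_contacts(history: List[Tuple[str, int]], hour_now: int, window: int = 1) -> List[str]:
    """Rank contacts by how often they were called near this hour of day.

    history holds (contact, hour_of_call) pairs; hours run from 0 to 23.
    """
    counts = Counter()
    for contact, hour in history:
        # Circular distance, so 23:00 and 00:00 count as one hour apart.
        diff = abs(hour - hour_now) % 24
        if min(diff, 24 - diff) <= window:
            counts[contact] += 1
    # most_common() sorts by count, highest first.
    return [contact for contact, _ in counts.most_common()]


# Made-up call log: a daily early-evening call to one contact.
history = [("Wife", 18), ("Wife", 18), ("Wife", 19), ("Boss", 9), ("Mum", 18)]
print(rank_contacts(history, hour_now=18))
```

With this sample log, the contact called most often in the early evening comes out on top at 18:00, while the morning call to the boss is ignored.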

Sickweather monitors Facebook statuses about illness and cross-references GPS locations to tell you whether you're near to someone who might pass on sickness. In return you tell the app if you're unwell. That approach might seem hopelessly inaccurate or a novelty, but building models of movement can have life-saving effects. Back in 2009, researchers at Telefónica used mobile phone records of its users in Mexico to help the government limit the spread of the H1N1 epidemic.

By revealing exactly where people were, the government could evaluate their decision to close an airport and a university campus; in doing so they reduced mobility by a third and pushed back the spread of the disease by at least a couple of days. Combine social media with that – and the much more precise GPS data smartphones are capable of now – and contextual awareness becomes a powerful means of pattern-spotting.

A contextually aware app that does just that is Agent, which uses GPS, gyroscope, accelerometer, Bluetooth, temperature and WiFi data from your phone combined with social data on who you're with to assess your context, and make decisions for you.

It goes from the mundane (it knows when you're sleeping and automatically silences your phone) to the possibly life-saving (it can sense that you're driving and automatically reads text messages aloud), but there's more to come. "We have abundant computing power, along with lots of sensors, which means that smartphones can look at many inputs and start learning what we are doing, where we are, and who with," Kulveer Taggar, CEO at Agent, told TechRadar. Taggar thinks that in future Agent will be able to use a phone's microphone to sense that you're at a party or concert – somewhere loud where you're not going to hear your phone if it rings – and so switch to vibrate. For now Agent is limited to Android, but an iOS version is in the offing.
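The microphone idea can be illustrated with a rough Python sketch that measures how loud a short burst of audio is and picks a ringer mode accordingly. It is a guess at the general technique, not Agent's implementation: the function names and the -10 dBFS threshold are hypothetical, and the samples are assumed to be already normalised to the range -1.0 to 1.0.

```python
import math
from typing import Sequence


def loudness_dbfs(samples: Sequence[float]) -> float:
    """Return the RMS level in dB relative to full scale."""
    if not samples:
        return float("-inf")
    rms = math.sqrt(sum(x * x for x in samples) / len(samples))
    return 20 * math.log10(rms) if rms > 0 else float("-inf")


def choose_ringer_mode(samples: Sequence[float], loud_threshold_dbfs: float = -10.0) -> str:
    """Pick vibrate when the surroundings are loud; the threshold is a guess."""
    return "vibrate" if loudness_dbfs(samples) >= loud_threshold_dbfs else "ring"


# Synthetic stand-ins for real microphone input.
quiet_room = [0.01, -0.01] * 100
concert = [0.8, -0.8] * 100
print(choose_ringer_mode(quiet_room), choose_ringer_mode(concert))
```

A real app would need to sample over a longer period and smooth the result, so that a single slammed door doesn't flip the phone to vibrate.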

Jamie is a freelance tech, travel and space journalist based in the UK. He’s been writing regularly for TechRadar since it was launched in 2008 and also writes regularly for Forbes, The Telegraph, the South China Morning Post, Sky & Telescope and the Sky At Night magazine as well as other Future titles T3, Digital Camera World, All About Space and Space.com. He also edits two of his own websites, TravGear.com and WhenIsTheNextEclipse.com, that reflect his obsession with travel gear and solar eclipse travel. He is the author of A Stargazing Program For Beginners (Springer, 2015).
