Facebook is testing ways to combat fake news

The jig is up for hoaxes

Facebook is finally starting to act on fake news, announcing today a number of features it's testing to combat the spread of misinformation.

The social network is beginning by going after "the worst of the worst," posts that are blatantly false and generated for spammers' own gain, writes Adam Mosseri, the vice president who oversees Facebook's News Feed, in a blog post.

Perhaps the most significant feature it's trialing is the use of third-party fact checkers to analyze the veracity of an article. Facebook will send suspicious stories to fact-checking partner organizations, among them Snopes, PolitiFact and ABC News, reports The New York Times. If a partner decides a story is playing loose with the facts, the post will be flagged as disputed. A link to an article explaining why the post is under scrutiny will also be included.

Though users can still share disputed stories, these may be pushed lower in the News Feed. Even more in your face: a warning will pop up when users go to share the story. Flagged stories also can't be promoted or turned into ads, Mosseri says.

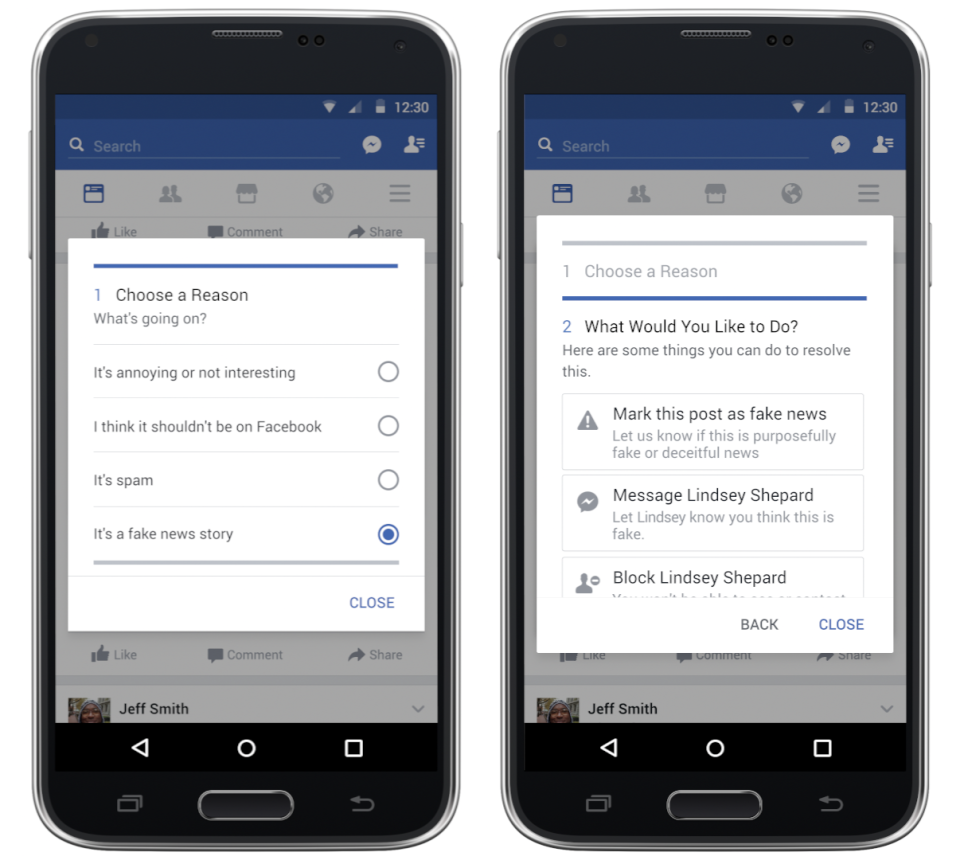

Facebook is also turning to users, testing a way for the community to flag a post they believe is a hoax. After clicking the upper-right corner of a post, some users will see the option to select "It's a fake news story" as the reason they're singling it out for attention.

And the steps don't stop there. Facebook is looking into incorporating signals that a story is fake into how it ranks articles. One such signal is people being less likely to share an article after they've read it, which may indicate they found it misleading.

Finally, Facebook is going to hit spammers where it hurts: their wallets. Because hoaxers make money when they pose as legit news organizations and direct users to their ad-filled sites, Facebook is getting rid of the ability to spoof domains. It will also look at publisher sites to see where policy enforcement may be needed.

Even though these features are part of a small test for now, it's a change of course for Facebook, which has been accused of sticking its head in the sand when it comes to its role as a source (and often, the source) of information. While it doesn't want to become the arbiter of truth, as Mosseri writes, Facebook is at least recognizing that, with over a billion people on its service, it has to take fake news more seriously.

Depending on what it learns from these trials, Facebook will release the fake news-fighting features more widely.

Michelle was previously a news editor at TechRadar, leading consumer tech news and reviews. Michelle is now a Content Strategist at Facebook. A versatile, highly effective content writer and skilled editor with a keen eye for detail, Michelle is a collaborative problem solver who has covered everything from smartwatches and microprocessors to VR and self-driving cars.