The world's largest chip just received a major machine learning-flavored upgrade

CSoft version 1.2 includes PyTorch and TensorFlow support

Cerebras Systems, maker of the world’s largest chip, has announced that its CS-2 system now supports PyTorch and TensorFlow, which will make it possible for researchers to quickly and easily train models with billions of parameters.

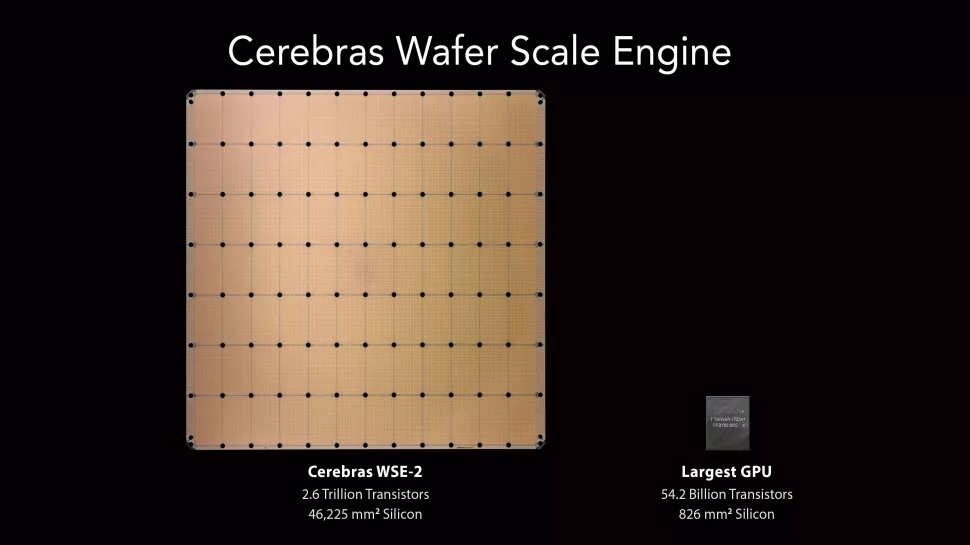

The company’s CS-2 is the world’s fastest AI system and is powered by its Wafer-Scale Engine 2 (WSE-2) processor. With the release of version 1.2 of the Cerebras Software Platform (CSoft), the CS-2 now supports additional machine learning frameworks, which will give developers even more choice when it comes to the types of models they want to run.

Emad Barsoum, senior director of AI framework at Cerebras Systems, provided further insight in a press release on how CSoft now enables developers to express models written in either TensorFlow or PyTorch, saying:

“From the start, our goal was to seamlessly support whichever machine learning framework our customers wanted to write in. Our customers write in TensorFlow and in PyTorch, and our software stack, CSoft, makes it quick and easy to express your models in the framework of your choice. By doing so, our customers gain access to the 850,000 AI optimized cores and 40 Gigabytes of on-chip memory in the Cerebras CS-2.”

Scaling large language models

CSoft version 1.2 enables developers to write their models in the open source PyTorch or TensorFlow frameworks and run them on the Cerebras CS-2 without modification. Likewise, an AI model written for a GPU or CPU can run in CSoft on the CS-2 without any changes.

With the combined power of CS-2 and CSoft, developers can seamlessly scale up from small models such as BERT to the largest models in existence like GPT-3.
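To give a rough sense of the gap between those two ends of the scale, here is a minimal back-of-the-envelope sketch. The parameter counts are approximate, publicly reported figures rather than numbers from Cerebras, and the two bytes per parameter is an assumption (16-bit weights only, ignoring optimizer state and activations). The function and constant names are illustrative.

```python
# Approximate, publicly reported parameter counts (assumption: not from the article).
PARAM_COUNTS = {
    "BERT-base": 110_000_000,
    "BERT-large": 340_000_000,
    "GPT-3": 175_000_000_000,
}

# Assumption: weights stored as 16-bit floats, i.e. 2 bytes per parameter.
BYTES_PER_PARAM_FP16 = 2


def weight_memory_gb(num_params: int, bytes_per_param: int = BYTES_PER_PARAM_FP16) -> float:
    """Memory needed for the weights alone, in gigabytes (10**9 bytes)."""
    return num_params * bytes_per_param / 1e9


def scale_factor(small: str, large: str) -> float:
    """How many times more parameters `large` has than `small`."""
    return PARAM_COUNTS[large] / PARAM_COUNTS[small]


if __name__ == "__main__":
    for name, count in PARAM_COUNTS.items():
        print(f"{name}: {count:,} parameters, ~{weight_memory_gb(count):.2f} GB of fp16 weights")
    ratio = scale_factor("BERT-base", "GPT-3")
    print(f"GPT-3 has roughly {ratio:,.0f}x the parameters of BERT-base")
```

Under these assumptions the weights alone span three orders of magnitude, which is why moving from a BERT-sized model to a GPT-3-sized one is usually far more than a configuration change.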

Training large models on GPUs is challenging and time-consuming. Training from scratch on new datasets often takes weeks and tens of megawatts of power on large clusters of legacy equipment. Additionally, as the size of the cluster grows, power, cost and complexity grow exponentially.

Cerebras Systems built the CS-2 to address these challenges, and its AI system can set up even the largest models in only a few minutes. Because developers spend less time setting up, configuring and training their models with the CS-2, they can explore more ideas in less time.

After working with the TechRadar Pro team for the last several years, Anthony is now the security and networking editor at Tom’s Guide where he covers everything from data breaches and ransomware gangs to the best way to cover your whole home or business with Wi-Fi. When not writing, you can find him tinkering with PCs and game consoles, managing cables and upgrading his smart home.
