Should Facebook police thought? - reflecting on the leak of its rulebook

Who watches the watchmen?

Warning: Some of the content of this piece may be upsetting or offensive.

Freedom of speech is a fundamental right included in the architecture of all civilised societies. The Universal Declaration of Human Rights, adopted by the United Nations General Assembly in 1948 with 48 member states voting in favour, states: “Everyone has the right to freedom of opinion and expression; this right includes freedom to hold opinions without interference and to seek, receive and impart information and ideas through any media and regardless of frontiers.”

Still, freedom of speech is a contentious issue. In this period of political polarization, where the two sides are drifting further and further apart, the right to freedom of speech is being played as a trump card (no pun intended) to allow people to voice beliefs that they know are going to be offensive or upsetting. And to a certain degree, they are right to do so; that is the reason that the law surrounding freedom of speech exists.

To deal with this issue we are constantly censoring our own experience - either consciously or unconsciously - by deciding the people that we spend time with, the places that we go, who we vote for, what entertainment we consume. Peer groups are invariably drawn together by shared beliefs and experiences.

But this doesn’t mean something being offensive should preclude it from being part of a conversation. Some of the most important issues politically and socially are offensive. If there were rules that forbade the discussion of offensive issues, that censored life for us, it would feel very much like the dystopian vision of 1984’s ‘thought police’.

You can't say that!

Censorship has always been a prickly issue. There are undeniably certain things that are inappropriate to be shared because of the audience; everyone can agree that violent or sexual imagery shouldn’t be viewed by children, so it is right to censor entertainment that is likely to be viewed by youngsters.

Where censorship becomes difficult is if it infringes on the freedom of expression. If there is a documentary about marriage equality, some people would think that children should also be shielded from it, while others would think that it is essential for children to view.


This gray area is difficult enough when the media is something that is being specifically produced for public consumption by a television company. When the populace are the ones creating the content it gets even more difficult.

J P Sears' popular satire about offence

This is the situation that Facebook is currently facing. As one of the largest social media platforms in the world, it is now a microcosm of society, and as such it includes all the quirks and foibles of the world. Unlike in offline interactions, however, there is a permanence to things shared on the platform – and these posts are easily shared.

This raises an interesting issue about whether it is appropriate to censor self-expression, and if it is, where the line should be drawn. To highlight this issue, let’s look at one of the more shocking excerpts from Facebook's recently leaked rulebook on how it attempts to police its network, shared by The Guardian: “To snap a b***h’s neck, make sure to apply all your pressure to the middle of her throat”.

This post passes Facebook’s guidelines as it doesn’t include a direct threat, so is technically a moment of self-expression. There is a difficult line to draw here: the violence in that post is clearly disturbing, but to remove it would be censorship of personal thought, and then the conversation becomes about the value of thought versus protection from offence.

Relax, it was just a joke

Now there is an argument that the example post above could have been a joke, which further complicates the issue. Obviously, making light of violence against women in a world where the World Health Organisation estimates that “1 in 3 (35%) women worldwide have experienced either physical and/or sexual intimate partner violence or non-partner sexual violence in their lifetime” could potentially propagate and normalize violence.

At the same time, humor is often used as a way to draw attention to an issue, and can be used by survivors to deal with their experiences. So would censorship of this comment be appropriate?

The answer probably lies in context. The problem is that establishing context requires time, and with 1.3 million posts being uploaded every minute, there simply isn’t the time for moderators to establish context on every post that gets ‘flagged’ as offensive.

What makes things more difficult is that not every situation that faces censorship has a context-based solution. Take images of abuse, for example. In the rulebook there are clear stipulations about when images of animal and child abuse get removed and when they don’t.

Facebook post from Animal Abusers Exposed, who share animal abuse images and videos to try and catch perpetrators

On first hearing this, it’s difficult to think of a reason for child abuse to remain on the website, but in the files, Facebook states: “We allow ‘evidence’ of child abuse to be shared on the site to allow for the child to be identified and rescued, but we add protections to shield the audience.”

So then what happens if the child has been rescued? Does the content stop being seen as valuable enough to keep? What is interesting are the stipulations about when such content would be removed: “We remove imagery of child abuse if shared with sadism and celebration.”

Why are you looking at that?

Clearly context is still at play here, but it is the context of the posting, not the context of the abuse. If someone is enjoying sharing the abuse, that's what makes it wrong. What this identifies is that there are users who enjoy sharing, and presumably viewing, abuse.

How do you then draw the line in stopping people from enjoying looking at content of abuse, even if it has been posted with good intent? Does Facebook have to make a decision about the risk versus the worth of people enjoying content they shouldn’t?

Where this proves particularly tricky is in how Facebook deals with nudity. Clearly, not all nudity is porn, and if there were a blanket ban on nudity, many art pieces, anthropological images, and images of vast cultural significance would be banned.

What this does mean is that Facebook has to take a view on when nudity is of secondary importance in the image. So a picture of a topless woman isn’t acceptable, but an image of a topless woman in a concentration camp is, because of its educational importance.

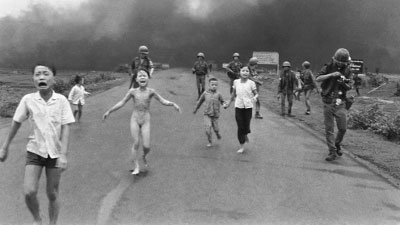

According to the files, an iconic picture of the Vietnam war was removed from the site due to the fact that it featured a nude child, leading to new guidelines being drawn up.

The Pulitzer Prize winning photo by Nick Ut, commonly referred to as 'Napalm Girl'

But then the obvious question arises: where is the line drawn about what is educational? If there were a more open conversation about sex, would the previously mentioned abuse statistics be affected? Is that a conversation that Facebook should be having? And if it is having that conversation, who gives it that right?

Clearly Facebook has a duty of care for its users. If the site were a total free-for-all, the propagation of extremist groups could go unchecked, leading to greater radicalization of young people, and it could become a hub for all sorts of illicit and illegal activities. But should the law be the limit of Facebook’s remit?

Facebook’s greatest blessing is also its greatest curse: users can find groups of like-minded people all over the world. But sometimes those people arguably shouldn’t be able to connect. In those situations, is it right for Facebook to intervene, or is it infringing on those users’ rights by doing so?

Ultimately, these questions all feed back to a larger question: how do we decide what should and shouldn’t be censored? And from that, are we comfortable with the way our world is being shaped by a company?

Andrew London is a writer at Velocity Partners. Prior to Velocity Partners, he was a staff writer at Future plc.
