Robots could become much better learners thanks to ground-breaking method devised by Dyson-backed research — removing traditional complexities in teaching robots how to perform tasks will make them even more human

Robots have even been trained to put the toilet seat down

One of the biggest hurdles in teaching robots new skills is converting complex, high-dimensional data, such as images from onboard RGB cameras, into actions that accomplish specific goals. Existing methods typically either rely on 3D representations that require accurate depth information, or use hierarchical predictions that work with motion planners or separate policies.

Researchers at Imperial College London and the Dyson Robot Learning Lab have unveiled a novel approach that could address this problem. The "Render and Diffuse" (R&D) method aims to bridge the gap between high-dimensional observations and low-level robotic actions, especially when data is scarce.

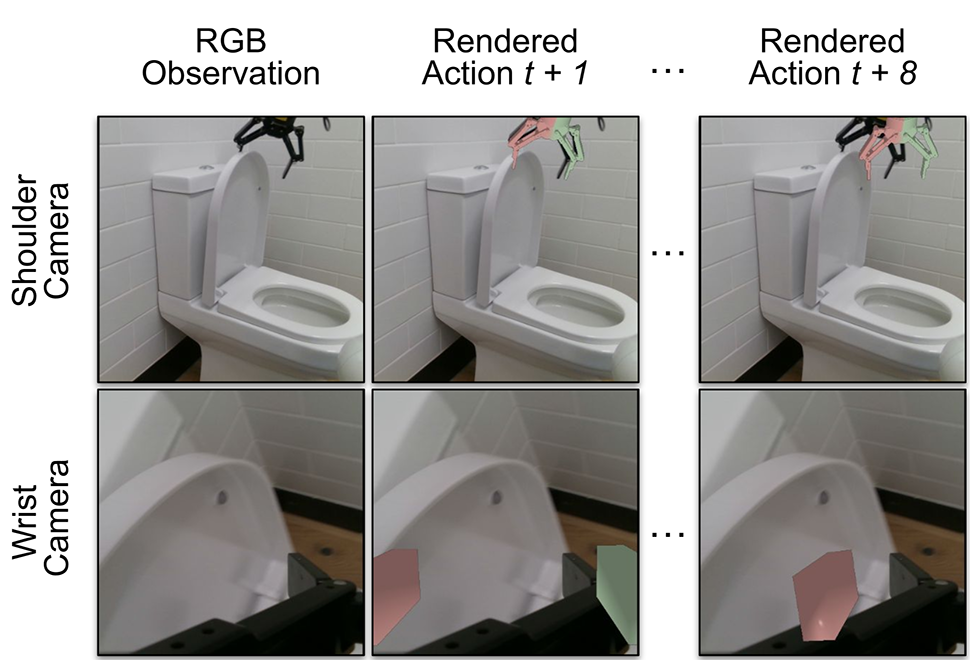

R&D, detailed in a paper published on the arXiv preprint server, tackles the problem by using virtual renders of a 3D model of the robot. By representing low-level actions within the observation space, researchers were able to simplify the learning process.
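To make the idea of "representing actions within the observation space" more concrete, here is a minimal sketch in Python. It is not the researchers' code. It assumes a simple pinhole camera and draws a candidate gripper position as a single coloured pixel on the camera image, whereas the paper renders a full 3D model of the robot. The function names, the focal length and the 8x8 image are all hypothetical and chosen purely for illustration.

```python
def project_point(point_xyz, focal_px, cx, cy):
    """Project a 3D point in the camera frame to pixel coordinates (pinhole model)."""
    x, y, z = point_xyz
    if z <= 0:
        raise ValueError("point must be in front of the camera")
    u = focal_px * x / z + cx
    v = focal_px * y / z + cy
    return round(u), round(v)


def render_action(image, point_xyz, focal_px=50.0, color=(255, 0, 0)):
    """Return a copy of `image` with the candidate gripper position drawn on it.

    Assumption: one marker pixel stands in for a rendered robot model.
    """
    height, width = len(image), len(image[0])
    u, v = project_point(point_xyz, focal_px, width / 2, height / 2)
    out = [row[:] for row in image]  # copy so the observation is left untouched
    if 0 <= v < height and 0 <= u < width:
        out[v][u] = color
    return out


# A blank 8x8 RGB "camera image" and a candidate gripper position in metres.
blank = [[(0, 0, 0)] * 8 for _ in range(8)]
rendered = render_action(blank, (0.02, -0.04, 1.0))
```

In this toy version the observation and the proposed action end up in the same image, which is the property the article describes: a model looking at `rendered` can see both the scene and where the robot intends to move.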

Imagining their actions within an image

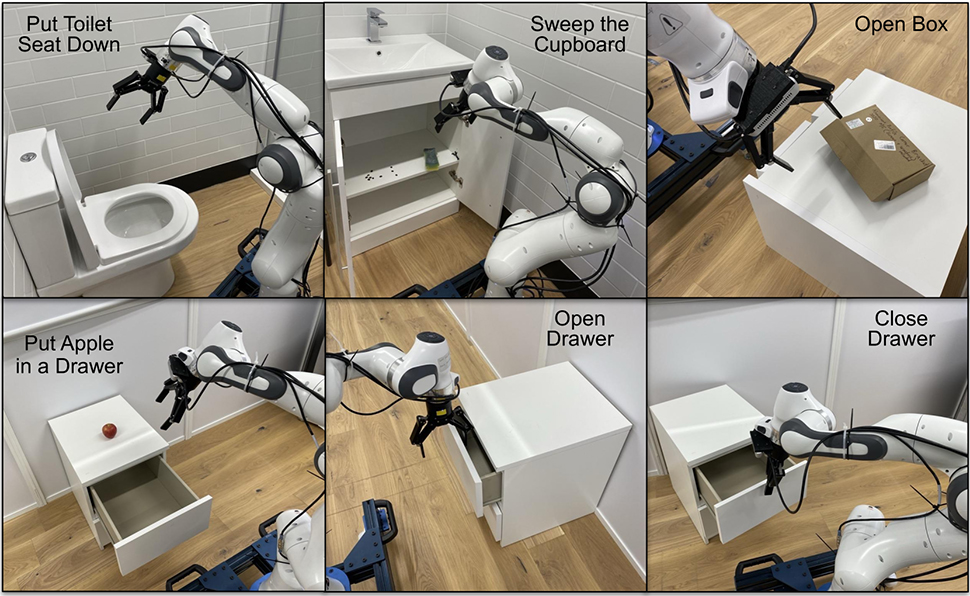

One of the tasks the researchers applied the technique to was getting a robot to do something that men find impossible, at least according to women: putting a toilet seat down. The challenge is to take a high-dimensional observation (seeing that the toilet seat is up) and marry it with a low-level robotic action (putting the seat down).

TechXplore explains, “In contrast with most robotic systems, while learning new manual skills, humans do not perform extensive calculations to determine how much they should move their limbs. Instead, they typically try to imagine how their hands should move to tackle a specific task effectively.”

Vitalis Vosylius, a final-year Ph.D. student at Imperial College London and lead author of the paper, said, "Our method, Render and Diffuse, allows robots to do something similar: 'imagine' their actions within the image using virtual renders of their own embodiment. Representing robot actions and observations together as RGB images enables us to teach robots various tasks with fewer demonstrations and do so with improved spatial generalization capabilities."

A key component of R&D is its learned diffusion process. This iteratively refines the virtual renders, updating the robot's configuration until the actions closely align with training data.
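The iterative refinement can be illustrated with a toy loop. This is only a sketch under strong assumptions, not the method from the paper: the hypothetical `toy_denoiser` below is handed the demonstrated action directly, whereas in R&D a trained network would have to infer the correction from the rendered images. The step count and step size are arbitrary illustrative values.

```python
import random


def toy_denoiser(action, demo_action):
    """Hypothetical stand-in for a learned network.

    It returns the correction that moves `action` toward the demonstrated
    action. A real model would predict this from rendered images instead.
    """
    return [d - a for a, d in zip(action, demo_action)]


def refine_action(demo_action, steps=20, step_size=0.3, seed=0):
    """Start from a random action and refine it a little at every step."""
    rng = random.Random(seed)
    action = [rng.gauss(0.0, 1.0) for _ in demo_action]  # noisy initial guess
    for _ in range(steps):
        correction = toy_denoiser(action, demo_action)
        action = [a + step_size * c for a, c in zip(action, correction)]
    return action


demo = [0.4, -0.1, 0.25]  # made-up target gripper position
refined = refine_action(demo)
error = max(abs(r - d) for r, d in zip(refined, demo))
```

Each pass shrinks the remaining gap by a fixed fraction, so the guess moves steadily toward the demonstrated action, mirroring the "refine until the actions align with training data" behaviour described above.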

The researchers conducted extensive evaluations, testing several R&D variants in simulated environments and on six real-world tasks, including taking a lid off a saucepan, putting a phone on a charging base, opening a box, and sliding a block to a target. The results were promising, and as the research progresses, this approach could become a cornerstone in the development of smarter, more adaptable robots for everyday tasks.

"The ability to represent robot actions within images opens exciting possibilities for future research," Vosylius said. "I am particularly excited about combining this approach with powerful image foundation models trained on massive internet data. This could allow robots to leverage the general knowledge captured by these models while still being able to reason about low-level robot actions."

Wayne Williams is a freelancer writing news for TechRadar Pro. He has been writing about computers, technology, and the web for 30 years. In that time he has written for most of the UK’s PC magazines, and launched, edited and published a number of them too.
