Key tech anniversaries to watch out for in 2024

The top anniversaries to celebrate in 2024

Birth of IBM - 100 years ago

Originally established in 1911 under the name Computing-Tabulating-Recording Company (CTR), IBM decided to shake things up in 1924 and rebranded to the powerhouse we know today. With a name that boldly declares its vision, IBM, short for International Business Machines, spent its formative years dominating markets ranging from electric typewriters to electromechanical calculators (IBM created the first subtracting calculator) and personal computers. IBM also played a pivotal role in the development of many tech innovations including the automated teller machine (ATM), the SQL programming language, the floppy disk, and the hard disk drive.

By the mid-80s, the IBM mainframe stood as the unrivalled computing powerhouse, and the brand boasted an impressive 80% share of the U.S. computer market. Yet by 1992, IBM’s market share had plummeted to a concerning 20%.

The root cause of IBM's decline can be traced back to the intense competition it faced in the personal computer market. Despite creating an impressive PC with features like 16 KB of RAM, a 16-bit CPU, and two floppy drives, IBM failed to foresee the soaring demand for personal computers. This miscalculation allowed rival companies such as Apple, Epson, and more to enter the scene, offering cheaper alternatives and creating a market where IBM was no longer in control.

As more competitors flooded the market, prices began to drop, and IBM found itself struggling to dictate market terms. Although IBM maintained high-quality machines, their premium pricing strategy contributed to diminished profits and a decline in market share.

A change in leadership, coupled with strategic cost-cutting measures and a keen focus on consumer feedback, revitalized IBM. Although it may not be leading the pack as it did in its glory years, it continues to innovate and now stands as a major advocate for the adoption and responsible governance of Artificial Intelligence (AI).

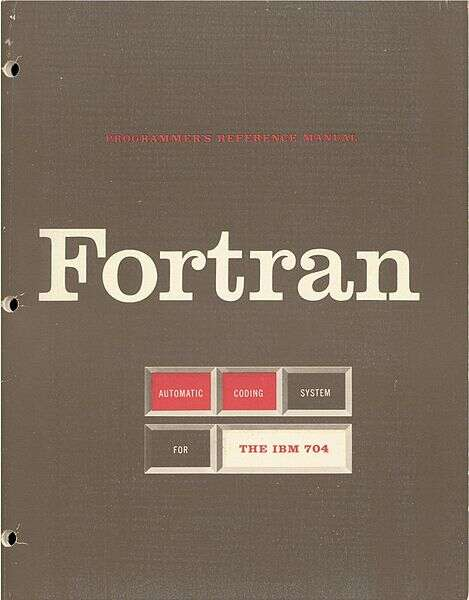

The oldest surviving programming language - 70 years ago

Where would we be today if John Backus, along with his team of programmers at IBM, had not invented the first high-level programming language back in 1954?

FORTRAN, short for Formula Translation, looks quite different from the assembly languages of its day, but it shares their basic principles and some syntax, since it was developed largely as an (arguably elevated) alternative for programming mainframe computers.

The program first ran on September 20, 1954, but it wasn’t until April of 1957 that it was commercially released because customers elected to wait until its first optimized compiler was made available.

Now, why did the lack of a compiler seem like a deal breaker to users at the time? A compiler is a special program that translates source code—complex, human-readable language—to machine code or another simpler programming language, allowing the possibility of running it on different platforms.
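To make the idea of "translating source code" concrete, here is a minimal sketch of a toy compiler in Python. It is not how the FORTRAN compiler worked: the instruction names (`PUSH`, `LOAD`, `ADD`, and so on) belong to an imaginary stack machine invented for this illustration, and Python's own `ast` module does the parsing.

```python
import ast

# Hypothetical instruction names for an imaginary stack machine.
_OPS = {ast.Add: "ADD", ast.Sub: "SUB", ast.Mult: "MUL", ast.Div: "DIV"}


def compile_formula(source: str) -> list[str]:
    """Translate a human-readable formula into simple machine-like instructions."""
    tree = ast.parse(source, mode="eval")
    program: list[str] = []

    def emit(node: ast.AST) -> None:
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            emit(node.left)                       # operands first...
            emit(node.right)
            program.append(_OPS[type(node.op)])   # ...then the operation
        elif isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            program.append(f"PUSH {node.value}")
        elif isinstance(node, ast.Name):
            program.append(f"LOAD {node.id}")
        else:
            raise ValueError(f"unsupported syntax: {ast.dump(node)}")

    emit(tree.body)
    return program


def run(program: list[str], variables: dict[str, float]) -> float:
    """Execute the instructions on a stack, standing in for the hardware."""
    stack: list[float] = []
    for instruction in program:
        op, _, arg = instruction.partition(" ")
        if op == "PUSH":
            stack.append(float(arg))
        elif op == "LOAD":
            stack.append(float(variables[arg]))
        else:
            right = stack.pop()
            left = stack.pop()
            if op == "ADD":
                stack.append(left + right)
            elif op == "SUB":
                stack.append(left - right)
            elif op == "MUL":
                stack.append(left * right)
            elif op == "DIV":
                stack.append(left / right)
            else:
                raise ValueError(f"unknown instruction: {instruction}")
    return stack.pop()
```

Calling `compile_formula("a * 2 + b")` turns one readable line into five low-level steps, which hints at why a language that saved programmers from writing those steps by hand caught on.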

And so, three years after its inception, it quickly gained traction as the dominant language for science and engineering applications. It produced fast programs and was easy to use: it handled the same machine operations while reducing the number of programming statements required by a factor of 20.

FORTRAN is not the only major programming language available today, but it can boast of being the oldest surviving one to date. Not only that, it remains popular among users after many updates and iterations over the years. What makes it a great language is how, despite the new features included in each update, it still ensures compatibility with older versions. Its 70 years of trusted performance keep it competitive with contemporary languages.

As a matter of fact, FORTRAN 2023 was released just last year, replacing the 2018 standard, and its next standard is rumoured to already be in development. So whoever said you can’t teach an old dog new tricks has clearly never met FORTRAN and the team behind it.

Lo (and Behold!), the first message on the Internet - 55 years ago

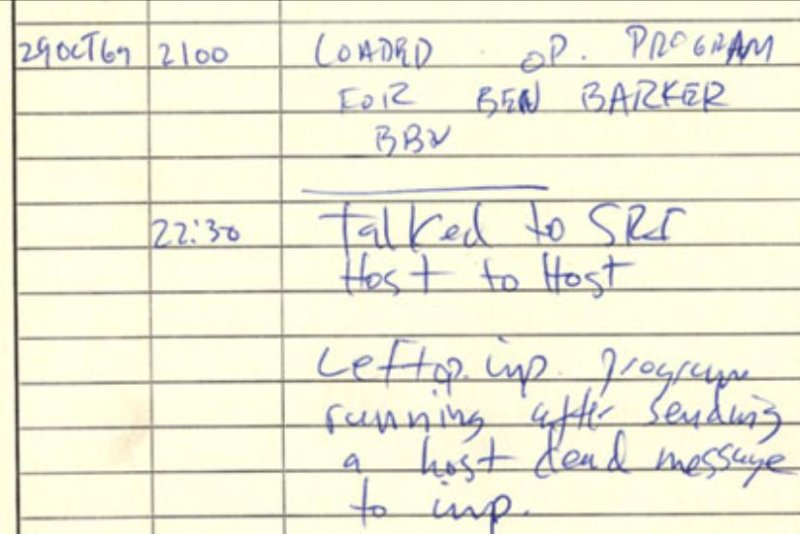

Over five decades ago, within the walls of UCLA's Boelter Hall, a cadre of computer scientists achieved a historic milestone by establishing the world's first network connection as part of the Advanced Research Projects Agency Network (ARPANET) initiative.

Conceived in the late 1960s, ARPANET aimed to improve communication and resource sharing among researchers and scientists who were spread across different locations. Under the guidance of the esteemed computer science professor, Leonard Kleinrock, the team successfully transmitted the first message over the ARPANET from UCLA to the Stanford Research Institute, situated hundreds of miles to the north.

And if, by now, you’re still unsure why this is so cool, it’s thanks to this team that we can now enjoy the perks of working from home.

The ARPANET initiative, spearheaded by the United States Department of Defense's Advanced Research Projects Agency (ARPA, now DARPA), aimed to create a resilient and decentralized network capable of withstanding partial outages. This initiative laid the groundwork for the rather dependable (on good days!) modern communication systems we enjoy today.

Amidst the astronomic graveyard of unsuccessful messages, which lone envoy emerged victorious, you might ask?

The first host-to-host message took flight at 10:30 p.m. on October 29, 1969, as one of the programmers, Charley Kline, tried to "login" to the SRI host from the UCLA host. According to Kleinrock, the grand plan was to beam the mighty "LOGIN" into the digital ether, but a cheeky little system crash cut off the dispatch, and the message received was "LO" as if the cosmos itself whispered, "Lo and behold!"

“We hadn’t prepared a special message [...] but our ‘lo’ could not have been a more succinct, a more powerful or a more prophetic message,” Kleinrock said.

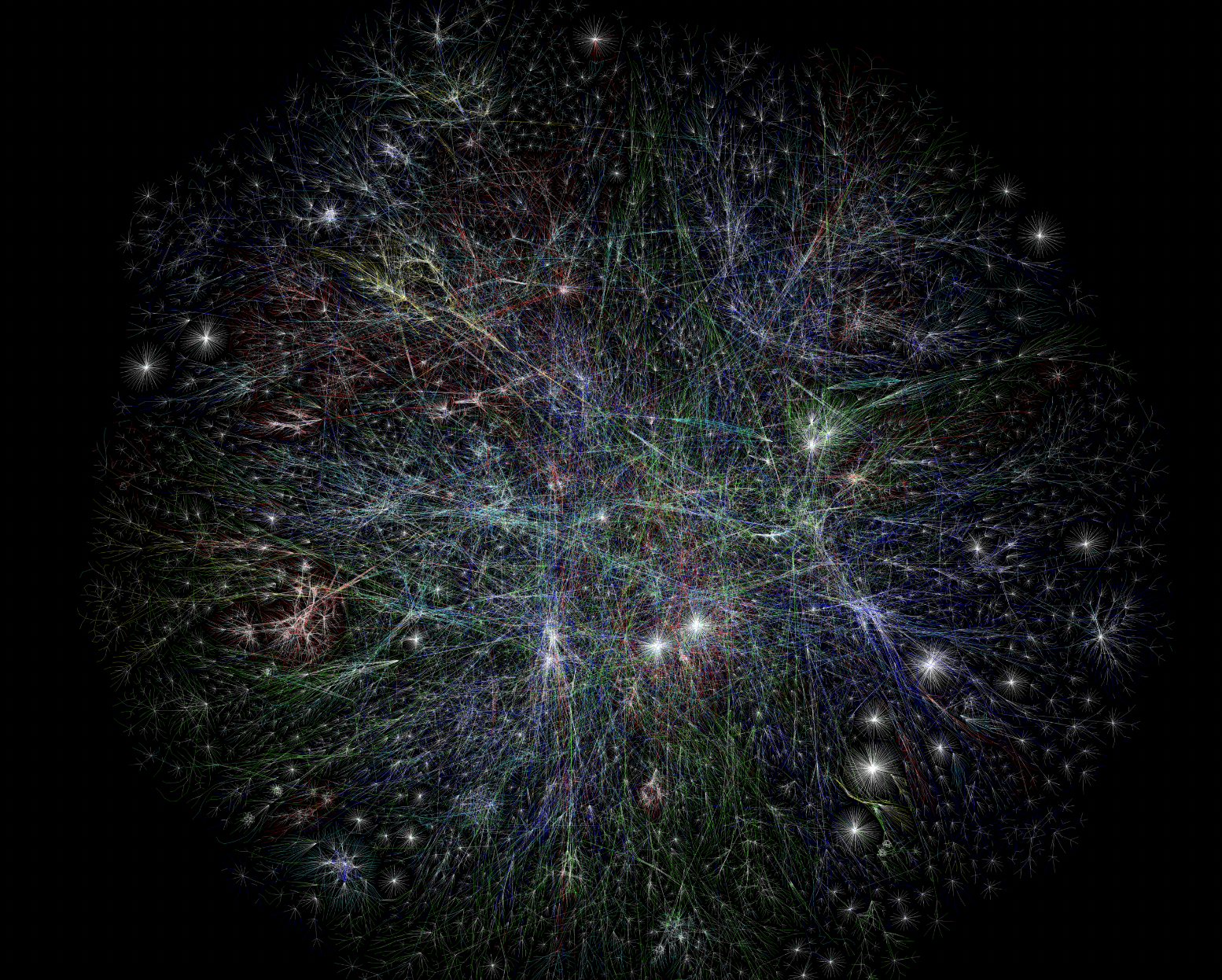

The birth of the Internet - 55 years ago

Let's time-travel to November 21, 1969, when the Internet took its baby steps. Just a smidge after the first test message was sent, the first permanent link on the ARPANET was established between the IMP at UCLA and the IMP at the Stanford Research Institute, marking the genesis of what we now recognize as the Internet.

According to the Computer History Museum, the initial four-node network was finally operational by December 5, 1969, and it employed three techniques developed for ARPANET:

Packet-switching: fundamentally, packet-switching is how we send information in computer networks. Rather than sending data as a whole, it is fragmented into smaller components known as "packets". Each packet has some data and details like where it's from, where it's going, and how to put it back together. This helps make data transmission more efficient and adaptable.

Flow-control: flow control manages how fast data travels between devices to keep communication efficient and reliable. It stops a fast sender from overwhelming a slower receiver, preventing data loss or system congestion.

Fault-tolerance: fault tolerance refers to how well a system can tolerate problems like hardware failures or software errors and ensure that they don't cause the whole system to break down.
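The packet-switching idea described above can be sketched in a few lines of Python. This is an illustration only, not the ARPANET's actual packet format: the `Packet` fields, the function names, and the tiny default packet size are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    source: str        # where the packet is from
    destination: str   # where it is going
    sequence: int      # position of this fragment in the original message
    total: int         # how many fragments make up the whole message
    payload: bytes     # the slice of data carried by this packet


def packetize(message: bytes, source: str, destination: str, size: int = 4) -> list[Packet]:
    """Fragment a message into packets of at most `size` bytes each."""
    chunks = [message[i:i + size] for i in range(0, len(message), size)]
    return [
        Packet(source, destination, number, len(chunks), chunk)
        for number, chunk in enumerate(chunks)
    ]


def reassemble(packets: list[Packet]) -> bytes:
    """Rebuild the message, whatever order the packets arrived in."""
    if not packets:
        raise ValueError("no packets received")
    total = packets[0].total
    by_sequence = {packet.sequence: packet.payload for packet in packets}
    missing = [number for number in range(total) if number not in by_sequence]
    if missing:
        # A real network would request retransmission; here we just report it.
        raise ValueError(f"missing packets: {missing}")
    return b"".join(by_sequence[number] for number in range(total))
```

Because every packet carries its own sequence number, the receiver can shuffle them back into order on arrival, and it can tell exactly which fragment is missing if one is lost along the way.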

However, the Internet didn't claim its official birthday until January 1, 1983. Before this date, the digital realm existed in a state of linguistic chaos, with various computer networks lacking a standardized means of communication. It was not until the introduction of the Transmission Control Protocol/Internet Protocol (TCP/IP), which served as a universal communication protocol, that these scattered networks were finally interconnected, giving birth to the global Internet we now know and love.

The term "internetted," meaning interconnected or interwoven, was in use as far back as 1849. In 1945, the United States War Department referenced "Internet" in a radio operator's manual, and by 1974, it became the shorthand form for "Internetwork."

The laser printer remains one of the most popular types of printers even 55 years later

The written word has been essential for information dissemination and integral to societal development since civilization moved from oral tradition. It has helped make knowledge permanent and has documented ideas, discoveries, and changes throughout history. Our ancestors may have started with manual writing, but we have long since passed that era of making copies of documents by hand with the advent of the printer.

Printers have helped make human lives easier since 1450 when Johannes Gutenberg invented the first printer, the Gutenberg press. As the name suggests, pressure is applied to an inked surface to transfer the ink to the print medium. We don’t really use printing presses as much anymore, except for traditional prints and art pieces as we moved from black and white only to vibrant color.

If you’ve ever wondered how “xerox” became a generic trademark in some parts of the world, here’s why:

The Xerox Palo Alto Research Center made its mark in history through the work of Gary Starkweather, a product development engineer at the time, who in 1969 had the brilliant idea of using light, particularly a laser beam, to “draw” copies or make duplicates. After developing the prototype, he collaborated with two colleagues, Butler Lampson and Ronald Rider, to move past making copies to creating new outputs. They called the result the Ethernet, Alto Research character generator, Scanned laser output terminal, or EARS, and it went on to become the Xerox 9700, the first laser printer. It was completed by November 1971, but it wasn’t until 1978 that it was released to the market.

The laser printer has evolved from its first version, but more than that, other types of printers also came to fruition. Now, when you talk about printers, most people probably have two prevailing types coming to mind: the aforementioned laser printer and the inkjet printer, which came later and was introduced in 1984. There may be several differences between these two, but there is no doubt that these not only remain popular options despite being 55 and 40 years old, respectively, but also continue to improve upon their previous versions to accommodate evolving consumer needs in documentation and information dissemination.

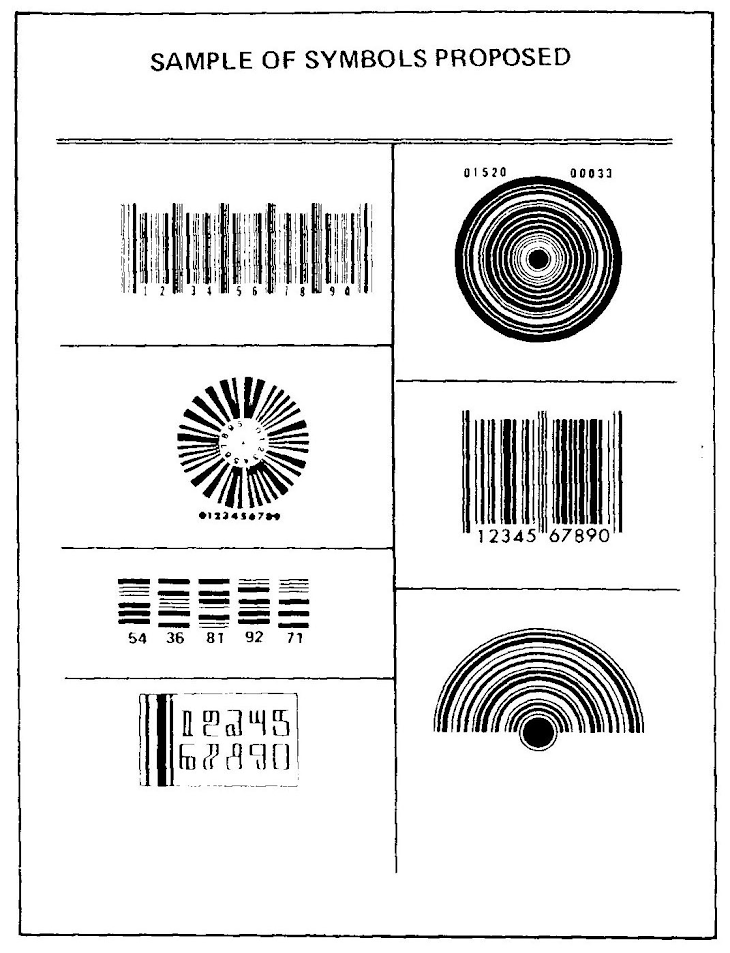

The first barcode and its scanner - 75 years ago

Have you ever wondered how checkout counters came to be? Or how our generation achieved the ease of scanning QR codes on our phones to access, well, pretty much anything on the internet?

This all dates back to October 20, 1949, when Norman Joseph Woodland and Bernard Silver first invented the barcode and, eventually, its associated scanner. The barcode is a series of different shapes and forms containing data that is not readily available to the naked eye.

It may have begun as a series of lines and striations of varying thickness forming a round symbol, but it would later birth George Laurer’s barcode, which we now refer to as the universal product code or UPC. The UPC is still widely used to store product information, especially by supermarkets and retailers that need to catalog thousands of goods for tracking and inventory. However, on top of these features, it is said that the barcode’s greatest value to business and industry lies in how it has shaped market research by presenting hard statistical evidence for what’s marketable: what sells and what does not.

That said, it is important to note that the scale at which the UPC and other codes are used now would not have been possible without the means to access the data. This is where the scanner comes in as a device that uses fixed light and a photosensor to transfer input into an external application capable of decoding said data.

On June 26, 1974, the Marsh Supermarket in Troy, Ohio made history when a pack of Wrigley’s Juicy Fruit chewing gum became the very first UPC scanned. Imagine testing out a piece of equipment that’s pivotal in modern American history and the first thing you reach for is a pack of gum. Iconic.

To this day, the town continues to celebrate this historic event every few years. With its 50th anniversary approaching, you can check out (heh) the supermarket scanner among the collections at the Smithsonian.

50th anniversary of Altair 8800

The Altair 8800 was a groundbreaking milestone that shaped the landscape of personal computing and laid the foundation for tech innovations benefiting B2B enterprises today.

For those who didn’t take Computer History 101, the Altair 8800 is the first commercially successful personal computer and served as a powerful driver for the microcomputer revolution in the 1970s.

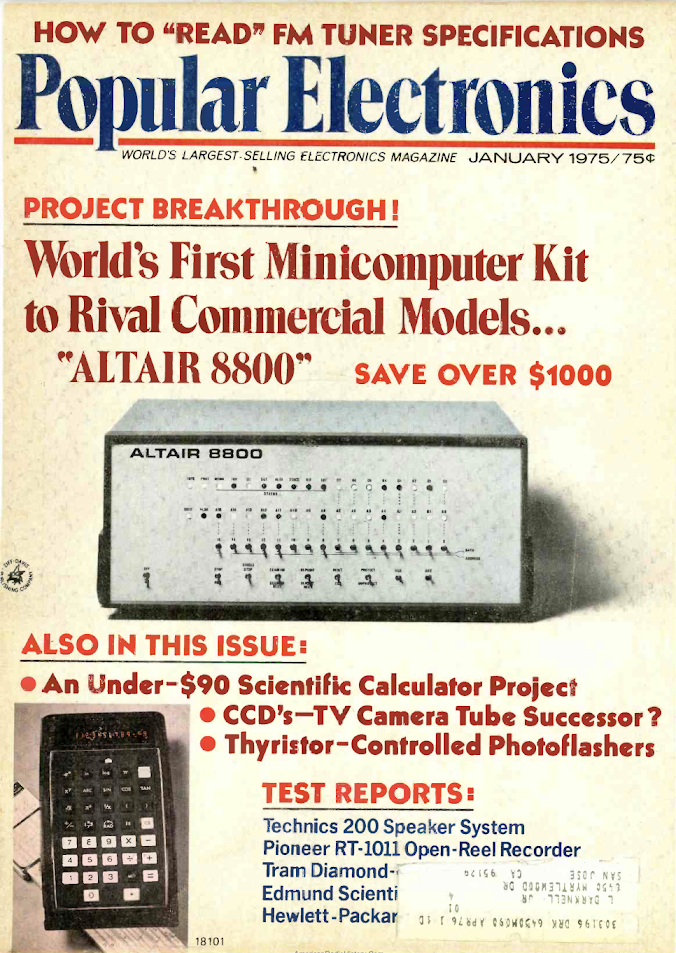

The Altair 8800 is a microcomputer created in 1974 by the American electronics company MITS or Micro Instrumentation and Telemetry Systems. It was powered by the Intel 8080 CPU and gained rapid popularity after being featured on the January 1975 cover of Popular Electronics. It introduced the widely adopted S-100 bus standard, and its first programming language, Altair BASIC, marked the beginning of Microsoft's journey.

It was developed by Henry Edward Roberts while he was serving at the Air Force Weapons Laboratory. After founding MITS with his colleagues in 1969, the team started selling radio transmitters and instruments for model rockets with limited success. From that point onward, MITS diversified its product line to target electronics hobbyists, introducing kits such as voice transmission over an LED light beam, an IC test equipment kit, and the mildly successful calculator kit, initially priced at $175, or $275 when assembled.

Their big break finally came with the Altair when they marketed it as “The World’s Most Inexpensive BASIC language system” on the front cover of Popular Electronics in the August 1975 issue. At that time, the kit was priced at $439, while the pre-assembled computer was available for $621.

Originally named PE-8, the Altair earned its more captivating moniker, "Altair," courtesy of Les Solomon, the writer for the article. This suggestion came from his daughter, inspired by the Star Trek crew's destination that week. A great reference considering the then sci-fi-esque Altair “minicomputer” was devoid of a keyboard and monitor, and relied on switches for input and flashing lights for output.

Microsoft BASIC was released 45 years ago

In the late 1970s, Microsoft, a budding tech giant, was poised to propel itself to new heights. Microsoft started 1979 by bidding farewell to its Albuquerque, New Mexico headquarters and establishing its new home in Bellevue, Washington on January 1.

Three months later, Microsoft unveiled the M6800 version of Microsoft Beginner's All-purpose Symbolic Instruction Code (BASIC). This programming language, developed by Microsoft, had already gained notable recognition for its role in the Altair 8800, one of the first personal computers. Over the years, Microsoft BASIC solidified its role as a cornerstone in the success of many personal computers, playing a vital part in the development of early models from renowned companies such as Apple and IBM.

But the year had only just started for Microsoft. On April 4, 1979, the 8080 version of Microsoft BASIC became the first microprocessor software product to receive the esteemed ICP Million Dollar Award, catapulting Microsoft into the limelight. Later that year, Microsoft extended its global footprint by adding Vector Microsoft, located in Haasrode, Belgium, as a new representative, signalling Microsoft’s entry into the broader international market.

Founded by Bill Gates and Paul Allen on April 4, 1975, Microsoft's initial mission was to create and distribute BASIC interpreters for the Altair 8800.

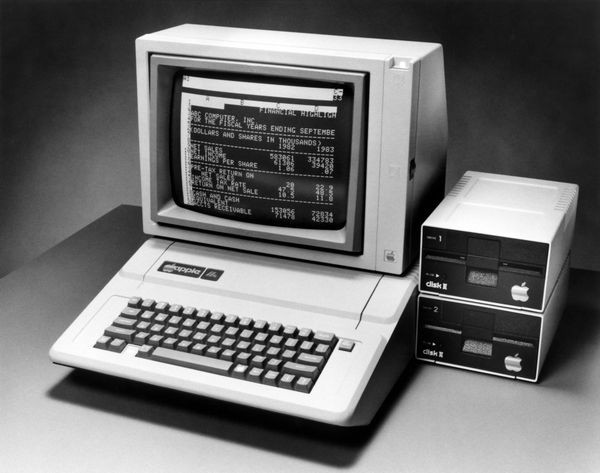

First Killer App, VisiCalc, was released on October 17, 1979

On October 17, 1979, the first killer app was released. VisiCalc, or "visible calculator," is the first spreadsheet computer program for personal computers. It’s the precursor to modern spreadsheet software, including the app we love to hate, Excel.

While the term "killer app" may sound like a phrase straight out of the Gen Z lexicon, its origins trace back to the 1980s during the rise of personal computers and the burgeoning software market. Coined during the era of emerging technology, the term "killer app" refers to a software application so groundbreaking and indispensable that it becomes the reason for consumers to buy the specific technology platform or device that hosts it.

Due to VisiCalc’s exclusive debut on the Apple II for the first 12 months, users initially forked out $100 (equivalent to $400 in 2022) for the software, followed by an additional commitment ranging from $2,000 to $10,000 (equivalent to $8,000 to $40,000) for the Apple II with 32K of random-access memory (RAM) needed to run it. Talk about a killer sales plan.

What made the app a killer, you wonder? VisiCalc is the first spreadsheet with the ability to instantly recalculate rows and columns. Sha-zam! It gained immense popularity among CPAs and accountants, and according to VisiCalc developer Dan Bricklin, it was able to reduce the workload for some individuals from a staggering 20 hours per week to a mere 15 minutes.
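For a feel of what "instant recalculation" means, here is a minimal sketch of a spreadsheet in Python. It is an assumption-laden toy, not VisiCalc's actual design: the `Sheet` class and its methods are hypothetical, and formulas are simply recomputed whenever a cell is read.

```python
class Sheet:
    """A toy spreadsheet: cells hold either numbers or formulas."""

    def __init__(self):
        self._cells = {}

    def set(self, name, value):
        """Store a number, or a formula written as a function of the sheet."""
        self._cells[name] = value

    def get(self, name):
        """Return a cell's current value, recomputing formulas on every read."""
        value = self._cells.get(name, 0)  # empty cells count as zero
        return value(self) if callable(value) else value


# A tiny ledger: A3 is a formula that totals A1 and A2.
sheet = Sheet()
sheet.set("A1", 120)
sheet.set("A2", 80)
sheet.set("A3", lambda s: s.get("A1") + s.get("A2"))
```

Reading `A3` here gives 200. Change `A1` to 200 and the next read of `A3` gives 280 with no extra work, which is the chore accountants previously did by hand with a pencil and an eraser.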

By 1982, the cost of VisiCalc had surged from $100 to $250 (equivalent to $760 in 2022). Following its initial release, a wave of spreadsheet clones flooded the market, with notable contenders like SuperCalc and Multiplan emerging. Its decline started in 1983 due to slow updates and lack of innovation, and its reign was eventually eclipsed by Lotus 1-2-3, only to be later surpassed by the undisputed world dominator, Microsoft Excel.

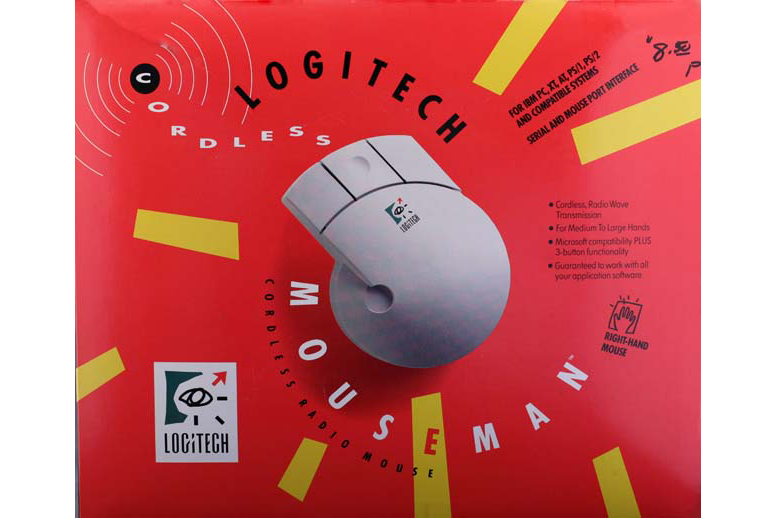

First wireless mouse - 40 years ago

In 1984, Logitech threw down the gauntlet in the tech peripherals arena by dropping the mic – or rather, the cord – with the world's first wireless mouse. No more cable chaos: Logitech was set to give the world of computing freedom.

The breakthrough came with Logitech's use of infrared (IR) light, connecting the mouse to the Metaphor Computer Systems workstation. Developed by former Xerox PARC engineers David Liddle and Donald Massaro, this wireless setup initially required a clear line of sight, limiting its effectiveness on cluttered desks.

However, Logitech continued to push boundaries by introducing the first radio frequency-based mouse, the Cordless MouseMan, in 1991. This innovation eliminated the line-of-sight constraint of the earlier version, setting the stage for efficient, clutter-free, and Pinterest-worthy desk setups.

Beyond snipping cords, the Cordless MouseMan was Logitech's adieu to the brick-like vestiges of the past. It's the first mouse that looked just like the modern versions we now effortlessly glide across our desks. Plus, it even came with an ergonomic thumb rest, the first of its kind.

Logitech began selling mice in 1982, and its commitment to catering to a wide range of user preferences solidified its position as a leader in the mice market today. Originally established to design word processing software for a major Swiss company, it quickly and fortuitously pivoted to developing the computer mouse in response to a request from a Japanese company. It is known as Logitech globally, derived from logiciel, the French word for software, except in Japan, where it is known as Logicool.

Logitech remains a powerhouse in the mice market, showcasing its prowess with a diverse lineup. Among its offerings, the MX mouse lineup stands as a crowd favorite, renowned for its ergonomic design and functionality. On the minimalist end, there’s the chic and compact Pebble Mouse 2, and for gamers seeking top-tier performance, there’s the Logitech G Pro X Superlight 2 Lightspeed.

40 years of GNU and software freedom, yes or yes?

If you’ve ever heard of the operating system called GNU, there’s a high chance you’ve heard it being called Linux or Unix. It’s neither.

The GNU Project started on the 5th of January 1984. Interestingly, GNU—pronounced as g’noo in a single syllable, much like how one would say “grew”—stands for “GNU’s Not Unix” but is often likened to Unix. Why? While it already had its own system or collection of programs with the essential toolkit, it was yet to be finished and therefore used Unix to fill in the gaps.

In particular, GNU was missing a kernel, so it is typically used with the Linux kernel, leading to the GNU/Linux confusion. The Linux kernel remains in use, though not because GNU lacks a kernel of its own. As a matter of fact, development of that kernel, the GNU Hurd, began in 1990, even before Linux was developed, but it is unfinished and continues to be worked on as a technical project courtesy of a group of volunteers.

It proudly remains 100% free software, where software freedom is understood in the same sense as freedom of speech and freedom of expression rather than simply being free of cost. Its open source nature means users are free to run, copy, distribute, make changes, and improve upon the existing software.

At a more extreme level, some supporters argue that the GNU made it possible for anyone to freely use a computer. Whether you agree with or believe that statement is up to you. Regardless, the GNU maintains this stance of freedom as it advocates for education through free software by ensuring that the system is not proprietary and therefore can be studied by anyone willing to learn from it. It also puts users in control of their own computing and, in theory, makes them responsible for their own software decisions.

That said, GNU’s software freedom can incite one of two perspectives from users: potential or pressure (or both). Which one is it for you?

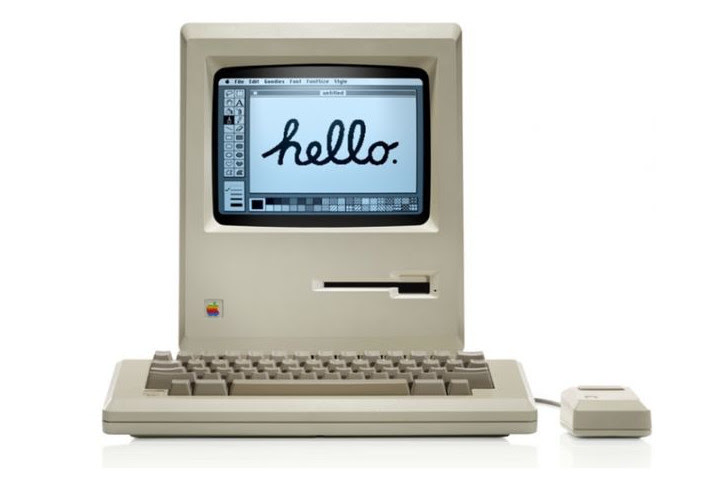

Macintosh first says hello, 40 years ago

The Apple Macintosh, later known as the Macintosh 128K, is the OG in Apple's lineup of personal computers. Affectionately dubbed the first cool computer, it was the first desktop PC that had it all: a sleek graphical user interface, a built-in screen, and, wait for it… a mouse.

It was released with an initial price tag of US$2,495 (now equivalent to a whopping $7,000), making the brand’s latest M2 MacBook Pro look like an affordable choice. It was a thing of beauty, enclosed in a sleek beige case, with a chic carrying handle - anyone who has unboxed an Apple product knows how satisfying unwrapping their goodies is.

Now, let's talk about its debut – cue the "1984" TV commercial during Super Bowl XVIII on January 22, 1984, directed by none other than Sir Ridley Scott. The response? Incredible. In four months, the Macintosh sold 70,000 units despite its premium price.

The Macintosh came bundled with two applications, MacWrite and MacPaint, specifically designed to showcase its revolutionary interface. Users also had access to applications such as MacProject, MacTerminal, and the widely-used Microsoft Word.

At the heart of the computer beats a Motorola 68000 microprocessor, intricately linked to 128 KB of RAM, which is shared by both the processor and the display controller. Notably, in a move that would have made every tech YouTuber unhappy had they existed then, Apple did not provide options for RAM upgrades.

Jobs asserted that "customization really is mostly software now ... most of the options in other computers are in Mac" and only authorized Apple service centers were allowed to open the computer. After months of user dissatisfaction over the Mac's limited RAM, Apple officially unveiled the Macintosh 512K.

Steve Jobs insisted that if a consumer needed more RAM than the Mac 128K offered, he should just pay more instead of attempting to upgrade the computer himself…a marketing strategy that Apple seems content to uphold even four decades later.

Dell celebrates its 40th anniversary

Dell reaches an impressive 40 years in the tech arena this year. It was originally established on February 1, 1984, as PC’s Limited by its CEO Michael Dell, who at that time was still a teenager studying at the University of Texas at Austin.

Dell's origin story is every startup’s dream - no high-rise offices, just a college student, a dream, and a dorm room. Michael Dell's big idea? To sell IBM PC-compatible computers, carefully pieced together from stock components according to each user’s personal needs, directly to customers.

And sell he did. In 1985, the company unveiled its first independently designed computer, the "Turbo PC," with an Intel 8088-compatible processor capable of reaching a maximum speed of 8 MHz. Each Turbo PC was carefully pieced together according to individual orders, giving buyers the flexibility to personalize their units with a range of options with prices lower than retail brands.

In an interview with Harvard Business Review’s editor Joan Magretta in 1998, Dell talked about his direct business model: “You actually get to have a relationship with the customer,” Dell explained. “And that creates valuable information, which, in turn, allows us to leverage our relationships with both suppliers and customers. Couple that information with technology, and you have the infrastructure to revolutionize the fundamental business models of major global companies.”

In 1987, the company abandoned the PC's Limited moniker to adopt the name Dell Computer Corporation, signalling the commencement of its global expansion. In 1992, Dell Computer Corporation secured a spot on Fortune's list of the world's 500 largest companies, propelling Michael Dell to the position of the youngest CEO of a Fortune 500 company at that time.

Dell operated solely as a hardware vendor until 2009, when it acquired Perot Systems, marking its entry into the IT services market. 40 years after its inception, it continues to deliver best-in-class products such as their latest eye-friendly UltraSharp monitors specifically designed for hybrid workers.

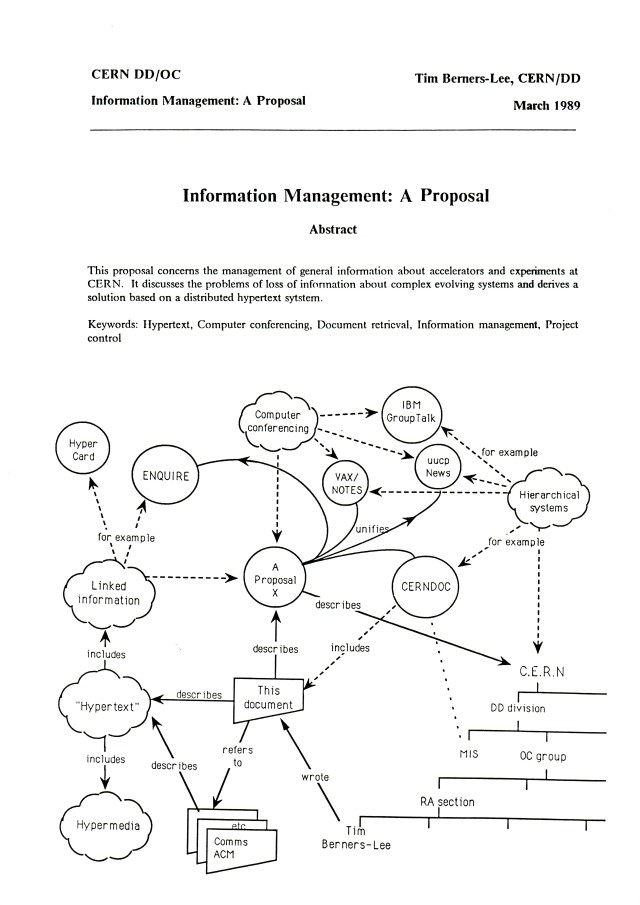

WWW proposal - 35 years ago

March 12, 2024 marks the 35th birthday of the World Wide Web. From its humble beginnings as an information-sharing tool for scientists at CERN (that’s European Council for Nuclear Research or Conseil Européen pour la Recherche Nucléaire in French), the web has burgeoned into a sprawling network that interconnects billions of users worldwide.

The World Wide Web, also referred to as "the web," began as many great projects did…with the very human desire to share information with others. But we're not talking about sharing cat videos or the latest phone update with your work bestie here; we're talking about an expansive community of over 17,000 scientists across 100+ countries needing an accessible and powerful global information system.

Tim Berners-Lee and Belgian informatics engineer Robert Cailliau set the wheels in motion on March 12, 1989, proposing an information management system and finally establishing the first successful communication between an HTTP client and server via the Internet in mid-November.

By the tail end of 1990, Berners-Lee had the first-ever Web server and browser doing the hustle over at CERN. If you chance to visit the lab today, you can check out the computer with a note in red ink saying, “This machine is a server. DO NOT POWER IT DOWN!!”

Of course, that computer is now shut down. But no worries, web server software was developed to enable computers to function as web servers. In 1999, the Apache Software Foundation was founded, fostering numerous open-source web software projects. This eventually led to the release of various web browsers, including Microsoft Internet Explorer, Mozilla's Firefox, Opera's Opera browser, Google's Chrome, and Apple's Safari.

Tim Berners-Lee is the creator and director of the World Wide Web Consortium (W3C) and a co-founder of the World Wide Web Foundation. In 2004, he received a knighthood from Queen Elizabeth II in recognition of his ground-breaking contributions.

NeXTSTEP OS unveiled to the world 35 years ago

On September 18, 1989, NeXT Computer introduced NeXTSTEP version 1.0, an object-oriented and multitasking operating system (OS). Originally tailored for NeXT's computers, it later found its way onto different architectures, including the now widely used Intel x86.

NeXT Computer is a company founded by Steve Jobs after he was forced to leave Apple, due to a massive disagreement with the board and then CEO John Sculley. Jobs' original plan for NeXT was to design computers customized specifically for universities and researchers. However, with each unit costing up to $6,500, the venture struggled to find success in a market where the demand was for computers priced below $1,000. So in 1993, NeXT gracefully bowed out of the hardware spotlight, and turned its focus on software.

NeXTSTEP was the OS for NeXT's line of workstations, including the NeXTcube and NeXTstation. NeXT machines became the creative canvas for programmers, giving birth to iconic games like Quake and Doom, and the first app store was also invented on the NeXTSTEP platform.

Notably, Sir Tim Berners-Lee, Founding Director of the World Wide Web Foundation and the visionary computer scientist behind the World Wide Web, reportedly built the first web browser on a NeXT computer.

In 1997, Apple bought NeXT for $429 million, and Jobs returned to Apple, where he later became CEO. Apple combined NeXT's software with its own hardware, and Mac OS X was born.

OS X seamlessly incorporated key features from NeXTSTEP, such as the iconic dock and the mail app, alongside subtle nuances like the familiar spinning wheel of patience. In 2001, OS X's debut offered users a sneak peek into the Mac's future. If you were an early Mac fanatic, you may have noticed the radical design shift and been wildly amused by the column view in Finder and System Preferences.

The first successful tablet computer that birthed generations of offspring - 35 years ago

Tablets nowadays are so advanced that they function much like other devices, often merging the features of phones and desktops, but the world’s first tablet computer ran on the MS-DOS operating system. The only clear similarities it has with the latest models of tablets are 1) the on-screen keyboard, with an external keyboard available for connection, and 2) the stylus, though the stylus here is attached by wire.

Imagine being in 2024 and having a 10-inch LCD display capable only of 640 × 400 black-and-white graphics running on 1 MB of internal memory and 256 KB and 512 KB flash memory card slots—yes, MB and KB, respectively. Granted, files back then didn’t take up as much space as they do now, but it’s nearly impossible, right?

That’s because the GRiDPad 1900, a product of GRiD Systems, was commercially released 35 years ago in October 1989 at a whopping price of $2,370.

For full disclosure, the Linus Write-Top technically came before the GRiDPad 1900 when it was released in 1987. However, the latter was the first to successfully achieve the goal for which it was created by Jeff Hawkins, and its success upon launch was mostly attributed to military use as its price and weight proved unappealing to the general public.

Current market prices for the best tablets out there are half the price of the GRiDPad 1900 or less (unless, of course, you choose to buy the largest memory and opt to include all of its available accessories). There are also numerous options available now, so you can certainly make your decision based on your needs.

If you’re looking to buy one, you can find our list of the best tablets around today.

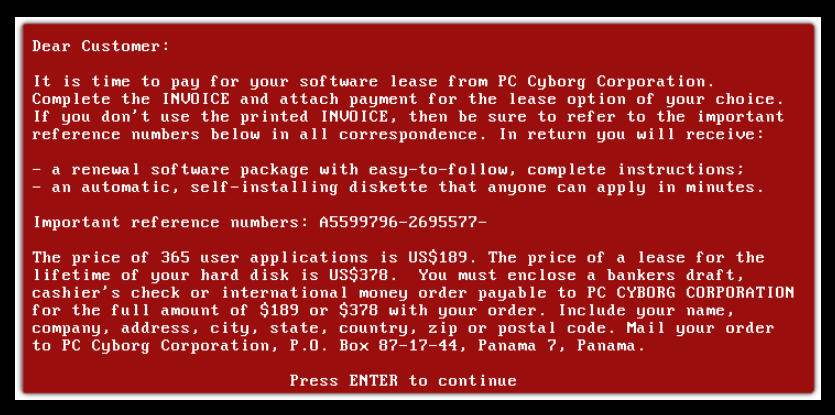

AIDS Trojan, the spread of the first ransomware in history - 35 years ago

The first ransomware cyber attacks occurred over two days in 1989: eight on December 15, and one on December 19.

Known worldwide as the AIDS Trojan, it was created by Dr Joseph Popp, who has been hailed the father of ransomware for being the first to hold users in virtual capture. The virus was dubbed as such for four reasons: 1) 1989 was the year the AIDS epidemic reached 100,000 recorded cases and therefore referred to this as the digital version of the virus, 2) it targeted AIDS researchers, 3) it was created by an AIDS researcher himself, and 4) all profits were then donated to AIDS research per Popp’s declaration upon his arrest.

Reading about this now, you might think it’s similar to the extortion brought on by ransomware of the present, but Popp’s invention differed as it was sent via postal mail in the form of over 20,000 floppy disks.

Those were sent from London to 90 countries, specifically to people with ties to the World Health Organization and its magazine subscriptions. Five countries, namely Belgium, Italy, Sweden, the United Kingdom, and Zimbabwe, were reported as “known victims,” though the extent of the damage was not disclosed.

The attack didn’t happen upon infection; it reportedly took the 90th boot-up of an infected device to activate the ransom note that asked for either $189 for a year-long license or $378 for a lifetime license.

The virus was occasionally referred to as PC Cyborg based on the corporation supposedly in charge of the lease: PC Cyborg Corporation. Luckily, no one lost a penny to this attack apart from the investigators who followed the instructions and mailed payments to the indicated P.O. Box in Panama just to see what would happen.

Amazon was founded, 30 years ago

Amazon sprang to life on July 5, 1994, with a remarkably bookish origin story. Jeff Bezos started the brand from the confines of his garage in Bellevue, Washington, to take advantage of the rapidly growing phenomenon that was the Internet. Bezos was 30 at the time and earning well on Wall Street, so when a person like that suddenly announces that he’s thinking of selling books… his family and friends must have thought he had gone crazy, right?

Not at all. Interviews reveal that his parents were among his early investors, forking out $300,000 from their retirement savings after a two-minute phone call with their son, and not because they believed in Amazon, but because they believed in Jeff. The Return on Jeff (ROJ) was substantial: Amazon is now one of the world’s most valuable and influential brands.

The whole venture started when Bezos read a report on web commerce growth that could make anyone's eyes pop—2,300% growth annually.

Now, why books? Well, Bezos saw the global demand for literature and, more importantly, the infinite sea of titles that are simply not practical to print in a single catalog. An online bookstore was born, and with it, users were able to access an extensive list of titles that only an online store can offer.

Bezos christened his brainchild "Cadabra, Inc." A few months into the journey, however, a quirky turn of events led to a name change. A lawyer misheard "Cadabra" as "cadaver," prompting Bezos to rebrand. After a dictionary deep dive, he chose the name "Amazon" to evoke the imagery of an exotic rainforest and the grandeur of a river.

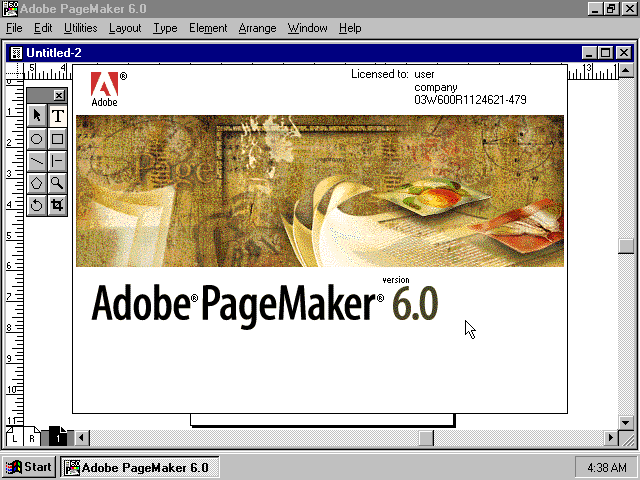

Adobe merges with Aldus, 30 years ago

The Adobe-Aldus merger on August 31, 1994 stands out as a pivotal moment that reshaped the publishing industry.

The merger solidified Adobe's position as a leader in the software industry, expanding its product portfolio with Aldus' key offerings, notably PageMaker, which strengthened Adobe's dominance and competitiveness in the market.

Established in 1984, Aldus Corporation earned widespread recognition for its groundbreaking desktop publishing software, the renowned PageMaker. PageMaker played a pivotal role in revolutionizing print publication by allowing users to easily design layouts and format text. It was the Canva of its day, providing an innovative and accessible solution that empowered businesses to publish their content more efficiently.

Adobe's acquisition of Aldus gave rise to Adobe PageMaker. PageMaker had originally been designed to run on the Apple Macintosh platform, where it took the publishing world by storm. The desktop publishing revolution was rooted in the synergy of three things: the Macintosh's intuitive graphical user interface (GUI), PageMaker's user-friendly publishing software, and the precision of the Apple LaserWriter laser printer.

Where is PageMaker now? You might wonder.

In 2004, Adobe halted all further development of PageMaker and actively urged users to transition to InDesign, which is what most young content and graphic designers are now familiar with. The last iteration, PageMaker 7.0.2, was released on March 30. Since then, Adobe has discontinued updates for PageMaker, positioning its successor, Adobe InDesign CS2, as the premier tool for modern designers and publishers.

The BlackBerry 850 launches 25 years ago

On January 19, 1999, the BlackBerry 850, a two-way pager designed to deliver e-mail over different networks, was launched. It was the first device to bear the BlackBerry name.

The name "BlackBerry" was chosen by a marketing company called Lexicon Branding. In an interview, Lexicon elaborated on its process of choosing a name: “The word ‘black’ evoked the color of high-tech devices, and the gadget’s small, oval keys looked like the drupelets of a blackberry.” Plus, it was quick and easy to pronounce, the Lexicon executives revealed, akin to the speedy operation of the push email system.

In its prime, circa 2010, BlackBerry dominated the market, commanding over 40% of the US mobile device market. It was even dubbed "Crackberry" owing to its rather addictive allure. Among the notable fans of the brand was Google's then-Executive Chairman, Eric Schmidt, who opted for his reliable BlackBerry over Google's Android smartphones, emphasizing his preference for the BlackBerry keyboard.

However, BlackBerry later missed the opportunity to establish a marketplace for third-party applications, comparable to the Apple App Store or Google Play Store.

Additionally, the migration to touchscreen devices led to a decline in the appeal of BlackBerry's physical keyboard, which BlackBerry was loath to let go of. BlackBerry's struggle to contend with the iPhone and Android resulted in a notable decrease in its market share over the subsequent years, and on January 4, 2022, BlackBerry officially ended support for its legacy phones.

Wi-Fi Alliance formed 25 years ago

The Internet has become ingrained in everyone’s daily lives, so much so that one could argue it’s inseparable from society at this point.

Wi-Fi has become so widespread that some countries have provided it freely all over their major cities. In other countries, it’s a selling point that establishments use to draw customers in to buy their products or avail of their services.

Thinking about these, it’s a wonder that Wi-Fi is already turning 25 this year when recent history has had many of us putting up with the rather iconic tone of a dial-up connection. Wireless connectivity seemed like an impossible dream until six companies founded the Wi-Fi Alliance, at a time when the technology had a maximum operating speed of approximately 11 Mbps and minimal security. It eventually grew into what it is now, with about a thousand companies involved and a few providers announcing 50 Gbps of an even more secure connection for 2024.

Back then, there was a lot of hope for development, but the idea of efficient wireless interoperability was so new and unfamiliar. It was also limited to computers and other gadgets of a similar purpose. Now, nearly every device, from basic household appliances to remotely driven cars, has connectivity features. The scale at which Wi-Fi connectivity has grown is exponential, with several aspects of life, down to the flow of money within the economy, becoming heavily dependent on ensuring availability and putting up safety nets to avoid a media blackout.

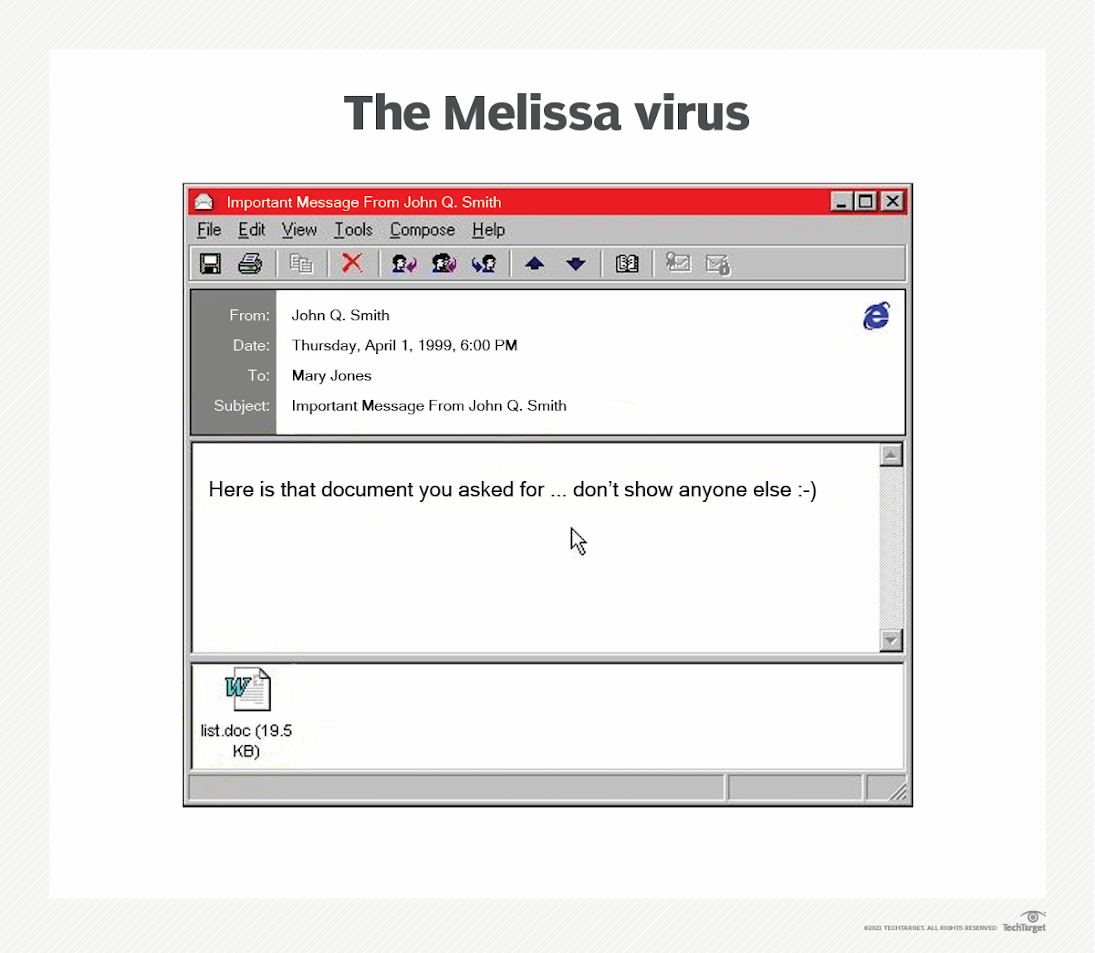

Can Melissa still effectively hijack email systems with infected attachments 25 years on?

Two and a half decades ago, the only conception that society had of a virus was that which affects our bodies and requires a live host to replicate itself. Efficient, preventive cybersecurity was practically nonexistent, inconceivable even. One attack the year before the turn of the millennium would change the course of cyber history forever.

The year 2024 marks Melissa’s 25th anniversary. Who—or what—are we celebrating, you ask? The more important question is: Should we be celebrating in the first place?

Have you ever received a chain message over the course of your virtual/digital life? Melissa functioned similarly back when email was still relatively recent. It was a mass-mailing macro virus or malware primarily designed to hijack email systems that, instead of targeting individual computers, infected Word documents then disguised these attachments as important messages from people you know.

Back then, there was no reason to distrust a file attached in an email sent by a friend, an acquaintance, or a co-worker. Once opened, these attachments would automatically be forwarded to the first 50 addresses in the victim’s address book. This guaranteed the quick spread of the virus.

A programmer by the name of David Lee Smith released the Melissa virus sometime in early March 1999, but it wasn’t until the 26th of that same month that it spread like wildfire. The couple of weeks that it took for the spread to pick up may seem like a long time now, but it was dubbed the fastest spreading infection at the time.

According to the FBI, approximately one million email accounts were affected, which slowed internet traffic. Experts had it mostly contained within a few days, but it took longer to put an end to the infection, consequently leading to over $80 million worth of damage, including the cost for repairs. This incident raised awareness among individual users, as well as among the government and private sectors, of the dangers of carelessly opening unsolicited emails and of the vulnerability of an unprotected network.

One could argue that while it laid the foundations of computer viruses as we know them today, it also further sparked the conversation about developing systems of cybersecurity. You’d be surprised at how many people still fall for the same trick 25 years later.

Chernobyl virus, 25 years on

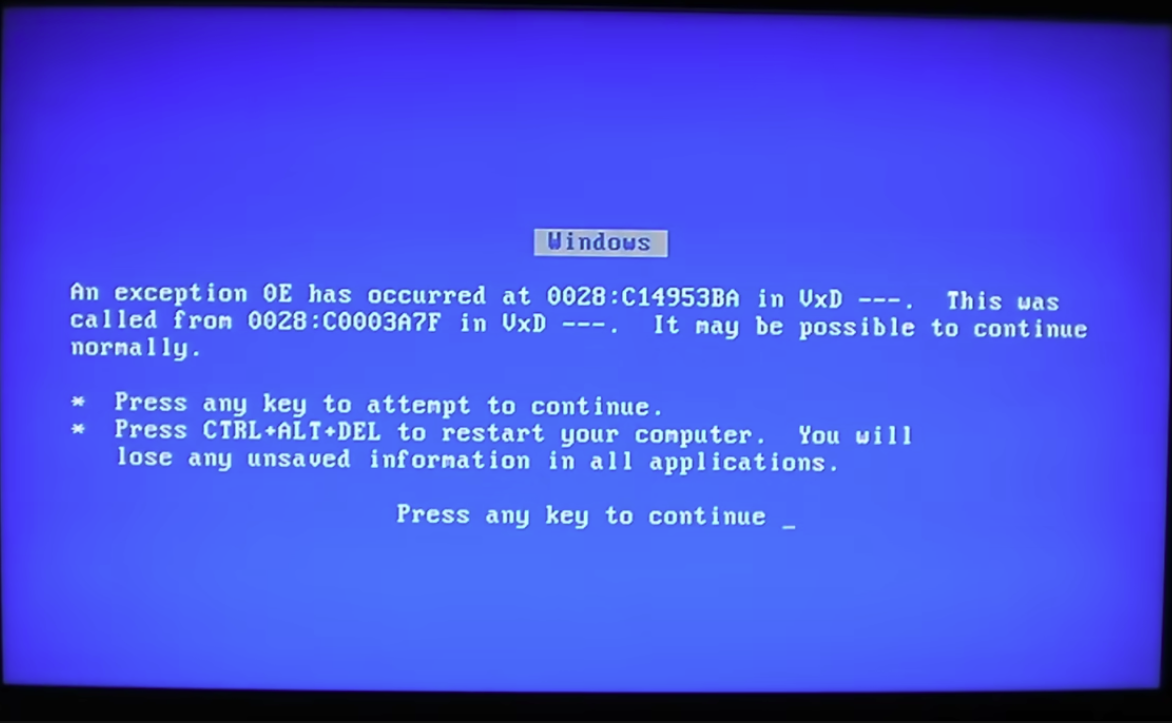

For those fortunate enough not to be acquainted, CIH, also known as Chernobyl or Spacefiller, is a computer virus designed for Microsoft Windows 9x systems that made its debut in 1998.

This malicious software packed a punch, with a highly destructive payload, playing havoc with vulnerable systems by messing with their vital information, and causing irreversible damage to the system BIOS.

CIH derives its name from the initials of its creator, Chen Ing-Hau. It also became known as Chernobyl, as its payload trigger date, April 26, 1999, coincided with the anniversary of the infamous Chernobyl nuclear disaster. It also happens to be the birthday of CIH’s master creator.

Korea bore the brunt of the CIH virus, and the impact was staggering, affecting an estimated one million computers and causing over $250 million in damages overall. Its global sweep left 200 computers in Singapore, 100 in Hong Kong, and countless others worldwide grappling with its destructive prowess.

In a daring move, Chen declared that he wrote the virus to challenge the bold claims of antivirus software developers regarding their effectiveness. He escaped charges from prosecutors in Taiwan because no victims came forward with a lawsuit at that time. Nevertheless, the fallout from these events prompted the introduction of new computer crime legislation in Taiwan.

Why does CIH hold a spot on this list, and what's its lasting mark? Well, despite being a bit of a digital relic, the Chernobyl Virus has left a lasting impact on how we think about computer security. CIH has made everyone, from regular folks to professional IT admins, realize the importance of having good anti-virus protection and staying on top of security patches.

Know more about how viruses work and check out the best antivirus software for PC.

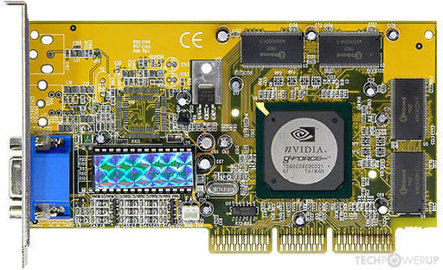

Nvidia GeForce 256, the first-born integrated GPU, made history 25 years ago

Anyone who dabbles in graphics (photo and video editing) or gaming is likely to have an idea of what the graphics processing unit or the GPU is. Advancements in technology and other gadgets now come at a fast pace, with newer models being released into the market merely a year after their predecessors.

This was not the case 25 years ago, when the Nvidia GeForce 256, the world’s first integrated GPU, was announced on August 31, 1999 and subsequently launched on October 11 of that same year.

To highlight the impact of the GeForce 256 as a milestone in the history of technology, we need to differentiate the two types of GPUs in the market.

Integrated graphics boast space-saving, energy-efficient, and cost-effective qualities as the unit is built right into the board; these are more often found on compact devices, such as phones and tablets or even budget laptops, because they share memory with the main system and therefore balance out performance and efficiency. On the other hand, dedicated graphics are larger and more expensive. While these deliver better graphics and have their own memory, as seen in mid-range or higher-end units, they are also known to generate a lot of heat.

The GeForce 256 itself shipped as a dedicated add-in board; the “integrated” in its billing refers to how Nvidia packed the transform, lighting, triangle setup, and rendering engines into a single chip. Some would argue that it had nothing much to offer beyond faster gameplay at 1024 × 768 resolution; others would defend it by saying time is of the essence in certain games and therefore speed is crucial. Regardless of users’ feelings about the GeForce, there is no doubt about its role in popularizing the GPU.

Nowadays, the GPU specifications have made it into the list of features to consider before purchasing a new device, be it a phone or a laptop. It has also been a factor in determining whether gadgets belong to the low, mid, or high price ranges. But ultimately, the deciding factor would (and should) be the purpose of the device—and your budget.

Of panic, preventive measures, and the new millennium, 25 years on

The turn of the millennium—literally December 31, the last day of the year 1999—ushered in a bug that shook the world to its core. The projected impact of the Y2K or Millennium Bug caused much anxiety across the globe, as it could range from an individual computer malfunctioning, to government records and processes experiencing delays and errors, to armed forces and police being mobilized should any large-scale appliances or pieces of equipment containing computer chips (eg, utilities, temperature-control systems, medical equipment, banking systems, transportation systems, or emergency services) fail, to nuclear reactors shutting down.

It basically seemed like the end of civilization as we knew it. All because computer systems back then were not made to interpret “00.”

For context, programmers used 2-digit codes to indicate the year, leaving out the initial 19 of years in the 20th century. That decision would’ve been efficient, but it failed to account for the fact that the next century also meant the next millennium, with the first 2 digits of the year moving from 19 to 20. In the then-current systems, 00 from 2000 would’ve been read as 1900, and you could imagine the implications that would have for everyday utility.
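To make the “00” problem concrete, here is a minimal Python sketch. The function names and the pivot value of 50 are illustrative assumptions, not taken from any real legacy system. The second function shows date windowing, one of the remediation approaches used at the time, as an alternative to widening every stored year to four digits.

```python
def expand_year_naive(yy: int) -> int:
    """Legacy assumption: every two-digit year belongs to the 1900s."""
    return 1900 + yy


def expand_year_windowed(yy: int, pivot: int = 50) -> int:
    """Windowing fix: values below the (assumed) pivot are read as 20xx."""
    century = 2000 if yy < pivot else 1900
    return century + yy


def account_age(opened_yy: int, current_yy: int, expand) -> int:
    """Years elapsed between two stored two-digit years."""
    return expand(current_yy) - expand(opened_yy)


# An account opened in '85, checked on January 1 of year '00.
print(account_age(85, 0, expand_year_naive))     # -85
print(account_age(85, 0, expand_year_windowed))  # 15
```

With the naive reading, a 15-year-old account suddenly appears to be minus 85 years old, which is the kind of result that could derail interest calculations, billing, or scheduling.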

To avoid these impending errors, it is said that approximately $300 billion was spent on preventative measures that upgraded computers and applications to be Y2K-compliant, with nearly half of the amount coming from the United States alone. Engineers and programmers all over the world worked tirelessly in anticipation of this major event, so when the time came, most of the potential damage had been averted before it could take effect.

This led to a polarized public opinion, with one side praising the success of the Y2K-compliance campaign and the other side claiming that the potential effects of the Y2K were gravely exaggerated so that providers could take advantage of vulnerable customers by selling unnecessary upgrades under the guise of protection. While these vultures may have existed, a number of analysts pointed out, even years after the incident, that the improved computer systems came with benefits that continued to be seen for some time after.

Would we really want to see the full extent of the Y2K bug wreaking havoc in several aspects of civilization? Of course not. This phenomenon may have happened 25 years ago, but the lessons learned live on as proof that in crises, being preventive beats being reactive.

Gmail was introduced to the public 20 years ago

Gmail is celebrating its 20th anniversary in 2024. Launched on April 1, 2004, Gmail was the first email service provider that allowed users up to one gigabyte of storage.

Gmail's inception was spearheaded by Google developer Paul Buchheit, and arose from his frustration with sluggish web-based email interfaces in the early 2000s.

During its development, Gmail operated as a clandestine skunkworks project, shrouded in secrecy even within the confines of Google. However, by early 2004, it had permeated almost every corner of the corporation, becoming everyone’s go-to platform for accessing the company's internal email system.

Finally, Google unveiled Gmail to the public on April 1, 2004, triggering widespread speculation after persistent rumors during its testing phase. The April Fool's Day launch also raised eyebrows in the tech community, given Google's reputation for April Fool's pranks.

But it was real and it was poised to revolutionize the way teams communicate globally. Without the limitation of the depressing 2 to 4 MB storage that was standard at the time and required users to diligently clean up their emails (hello to inbox-zero advocates), Gmail outshone Yahoo and Hotmail by becoming a storage solution for emails and files that matter.

However, Google faced a challenge as it lacked the necessary infrastructure to offer millions of users a dependable service with a gigabyte of storage each, and had to initially limit its beta users. Thankfully, the limited rollout ended up adding to its appeal. Georges Harik, then overseeing most of Google's new products, notes, "Everyone wanted it even more. It was hailed as one of the best marketing decisions in tech history, but it was a little bit unintentional."

Gmail has risen to prominence, now standing as one of the world's most widely used email platforms and offering users a complimentary 15 GB of storage. Paired with Google Workspace, formerly known as G Suite, it has become an indispensable asset for businesses of every size.

Bitcoin turns 15

Cryptocurrency is now a widely accepted and used digital currency and payment system across the globe, with Bitcoin turning 15 years old in 2024.

Bitcoin was launched on January 9, 2009 by Satoshi Nakamoto, a programmer (or group of programmers) shrouded in mystery, with their identity unknown even to this day. It can be divided into “satoshis” or “sats” in short form, which are the smallest units or denominations of a bitcoin (up to eight decimal places), named after the creator.
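Eight decimal places means one bitcoin divides into 100,000,000 satoshis. The short Python sketch below illustrates that conversion; the helper names are hypothetical and chosen only for this example, and `Decimal` is used so that tiny amounts are not distorted by floating-point rounding.

```python
from decimal import Decimal

SATS_PER_BTC = 100_000_000  # eight decimal places


def btc_to_sats(btc: str) -> int:
    """Convert a BTC amount (given as text) to a whole number of satoshis."""
    amount = Decimal(btc) * SATS_PER_BTC
    if amount != amount.to_integral_value():
        raise ValueError("amount has more than eight decimal places")
    return int(amount)


def sats_to_btc(sats: int) -> Decimal:
    """Convert a whole number of satoshis back to BTC."""
    return Decimal(sats) / SATS_PER_BTC


print(btc_to_sats("0.00000001"))  # 1
print(btc_to_sats("1.5"))         # 150000000
print(sats_to_btc(150_000_000))   # 1.5
```

Anything smaller than a single satoshi, such as 0.000000001 BTC, is rejected by this sketch because it cannot be represented in whole sats.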

Bitcoin is an encrypted digital asset that boasts secure transactions. Its decentralized nature renders third parties unnecessary in the distribution of its services. This is made possible by the blockchain, a ledger technology that runs on a peer-to-peer network to record ownership and prevent double spending when Bitcoin is used to purchase goods or to store value, much like cash, gold, or other forms of property (art, stocks, real estate, etc).

Before gaining worldwide, mainstream renown, Bitcoin had next to no value at its inception. The early years saw very little traction. So little, in fact, that Google Finance only began tracking its pricing data in 2015, approximately two years after the first few investors took a risk and changed its trajectory.

Unfortunately, not even a big cryptocurrency name like Bitcoin was exempt from the effect of an economic crisis, with its value having a continuous downtrend which was further exacerbated by the dawn of the pandemic, as well as other conflicts that have impacted global finance. Some financial and crypto analysts have dubbed this seeming freefall as the “crypto winter,” but someday spring will come.

Android gets its first big update, 15 years ago

When it comes to most mobile devices, tablets, or even smart TVs, the operating system or OS market has been largely dominated by two tech giants: Google’s Android and Apple’s iOS.

Both began as mobile operating systems but, like most technological advancements of late, quickly expanded to provide service to numerous other platforms.

Only 15 years have passed since the first major Android OS update, Cupcake, was released on April 27, 2009. From its most basic iteration of Android 1.0 in the previous year, which could only support text messaging, calls, and emails, Cupcake came with added features, including an onscreen virtual keyboard and the framework for third-party app widgets.

Now, Android holds the title of the most popular OS worldwide with over 70% market share.

Several dessert options later (remember Cupcake, Donut, Eclair, Froyo, Gingerbread, Honeycomb, Ice Cream Sandwich, Jelly Bean, KitKat, Lollipop, Marshmallow, Nougat, Oreo, and Pie before Android 10), we are currently at iteration 14.

But did you know that before its use and popularity skyrocketed, the Android OS was initially meant to service standalone digital cameras? This was a few years after the first camera phone was created in 1999—and we all know how advanced phone cameras have become—and before Google bought Android, changing both the company’s and the industry’s trajectory.

What makes it so popular among smartphone companies is that Google committed to keeping Android an open-source OS. This allows third-party companies to tweak it as they so desire, creating their respective user interfaces to match their flagship phones, hence the plethora of options out there.

Whatever purpose you may have for a smartphone, it seems Android can already fulfill it now. And given the increasing demand from both the industry and the public, who knows what Android will have in store for us for the next 25 years?

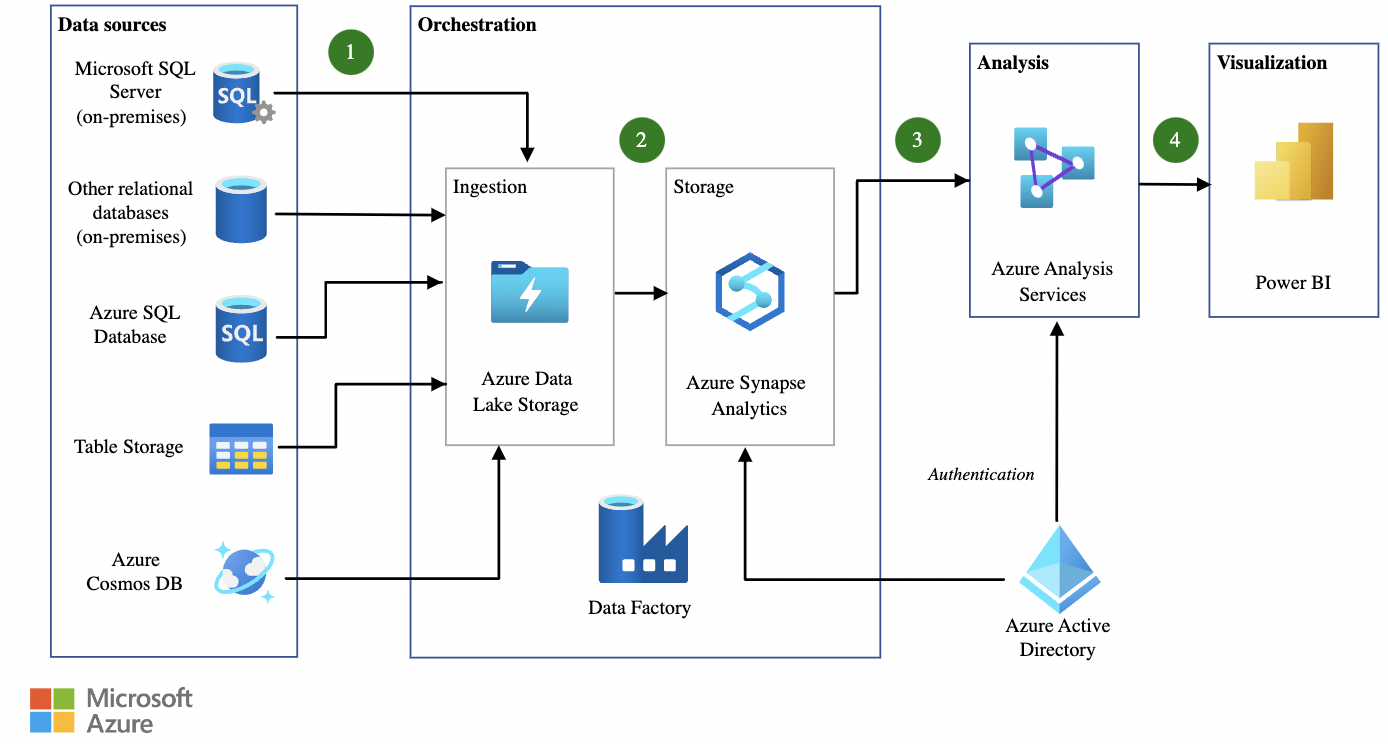

Microsoft Azure launches, 15 years ago

On November 17, 2009, Microsoft announced the availability of the Windows Azure platform, a cloud computing operating system tailored for businesses and developers, at the Microsoft Professional Developers Conference (PDC). Its unique selling point? A coding-free experience.

The announcement at PDC was met with enthusiasm, as Microsoft Azure ushered in a new era of accessibility and efficiency for both seasoned developers and businesses eager to embrace the potential of cloud computing.

Operating under the codename "Project Red Dog," Azure was Microsoft's countermove against Amazon EC2 and Google App Engine. Developed as an extension of Windows NT, it signalled the dawn of Microsoft's Cloud Platform as a Service (PaaS). Paired with the debut of the SQL Azure relational database that broadened support for programming languages such as Java, PHP, and specific microservices, Microsoft established itself as a formidable entity in the cloud computing arena.

Azure's grand entrance into the market marked a significant leap forward for cloud computing, offering a robust set of tools for professionals across various industries. Launched with the promise of scalability, flexibility, and advanced capabilities, Azure catered to the diverse needs of businesses, from start-ups to enterprise-level corporations.

15 years later, Azure shows no signs of stopping. Microsoft's current collaboration with industry giants such as Intel, NVIDIA, and Qualcomm is geared toward elevating Azure IoT Edge as the premier platform for seamlessly incorporating Artificial Intelligence (AI) models. This commitment is further underscored by Microsoft’s strategic investments in databases, Big Data, AI, and IoT, solidifying Microsoft Azure's role as a comprehensive cloud service provider.

Five years since the initial deployment of 5G services

Ever reminisce about the dark dial-up ages before 5G? This tech superstar made its grand entrance in 2019 and quickly became every remote worker’s BFF. With its turbocharged speed and unmatched reliability, 5G has made it possible for freelancers and entrepreneurs to have buffer-free (most times!) meetings while watching 4K videos on the side.

While 4G tech, its predecessor, still provides connectivity to most mobile phones today, more and more brands are releasing 5G-capable phones. In fact, most top-ranking flagship mobile phones today are 5G-enabled, as 5G boasts impressive download speeds and faster data transfer.

The journey towards 5G technology traces its roots back to 2008, when in April of that year, NASA collaborated with Geoff Brown and Machine-to-Machine Intelligence (M2Mi) Corp to pioneer a fifth-gen communications technology, primarily focused on nanosatellites.

Zooming into 2019, South Korea stole the spotlight by being the pioneer in adopting 5G technology with South Korean telecom giants—SK Telecom, KT, and LG—roping in a whopping 40,000 users on day one.

However, a report from the European Commission and European Agency for Cybersecurity highlights security concerns about 5G and advises against relying on a single supplier for a carrier's 5G infrastructure. Additionally, due to concerns about potential espionage, some countries, such as the United States, Australia, and the United Kingdom, have taken measures to limit or exclude the use of Chinese equipment in their 5G networks, as exemplified by the US ban on Huawei and its affiliates.

Women and girls hold up half the world, including STEM

The United Nations (UN), through UNESCO and UN Women, declared February 11 as the International Day of Women and Girls in Science to raise awareness of the situation of women and girls in science, technology, engineering, and mathematics (better known as STEM), to celebrate the importance of diversity and inclusion in STEM, and to recognize the contributions and achievements of women and girls within the field.

The date was chosen to pay homage to one of the most prominent figures in STEM, renowned for being a pioneer in nuclear physics and radioactivity, and truly an inspiration to all who dabble in the field, especially to girls who have big dreams of one day becoming a woman of science—Madame Marie Curie.

This day has become an annual event observed to promote gender equality, breaking down barriers, glass ceilings, and stereotypes that have limited women’s participation in the past and, unfortunately still, in the present; highlighting achievements despite the limitations that current systems perpetuate; inspiring future generations to dream big because anything is possible; and advocating for inclusion not only in access to opportunities and resources but also in control over them and participation in decision-making.

It opens up avenues to involve women and girls from all walks of life and to empower them to take action where it matters. In fact, last year’s International Day of Women and Girls in Science initiated a project called Innovate. Demonstrate. Elevate. Advance. Sustain. or I.D.E.A.S. which aimed to push for more involvement with the UN’s Sustainable Development Goals, from improving health to combating climate change. Additionally, the first science workshop for blind girls was held with the title “Science in Braille: Making Science Accessible.”

This year, the annual observance will be held on February 8 and 9, 2024, in support of the theme for the International Women’s Day 2024: Inspire Inclusion. The theme is especially significant with the knowledge that, despite being half the world’s population, the height of women’s participation in STEM remains at approximately 30%.

Further information about this has already been published on numerous websites; specifically, the event agenda is available here.

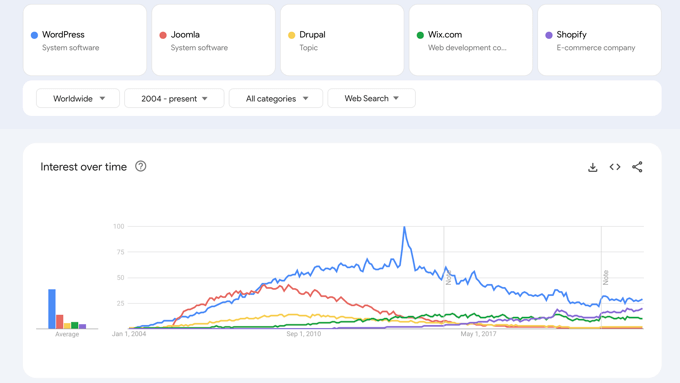

WordPress set to power more than half of all websites in 2024

WordPress, the cornerstone of digital content management and the driving force behind many SMBs’ online presence, is set to power more than half of all websites in 2024.

Released into the digital wild on May 27, 2003, WordPress was the brainchild of American developer Matt Mullenweg and English developer Mike Little. While it was originally designed for blog publishing, WordPress has now evolved to support various web content formats such as traditional websites, mailing lists, digital portfolios, online shops, and learning management systems that are rapidly growing in popularity.

Currently, it powers over 35 million websites, or 42.7% of all websites (if we count websites that don’t use a CMS), including those of major brands such as Sony, CNN, Time, and Disney. Among sites that do use a content management system (CMS), however, it already dominates with a 62.5% market share.
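The two percentages describe the same installs measured against different totals. As a rough illustration, and assuming both figures come from the same survey sample (an assumption, since the source of the numbers is not stated here), dividing one by the other gives the share of all websites that use any CMS at all:

```python
def implied_cms_adoption(share_of_all_sites: float, share_of_cms_sites: float) -> float:
    """Fraction of all websites using any CMS, implied by the two shares."""
    return share_of_all_sites / share_of_cms_sites


adoption = implied_cms_adoption(0.427, 0.625)
print(f"{adoption:.1%}")  # 68.3%
```

In other words, under that assumption roughly two-thirds of websites run on some CMS, and WordPress accounts for most of them.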

The WordPress Foundation is the brand’s proprietor, managing WordPress, its projects, and affiliated trademarks. Globally embraced for its versatility, WordPress has garnered a stellar reputation as a free, user-friendly software. Its widespread adoption underscores its status as one of the premier content management systems globally, celebrated for its ease of use.

While there's occasional speculation about the demise of WordPress, reminiscent of debates about the death of blogging and writing in general, the statistics affirm that the brand is not losing momentum. Continuously surpassing its rivals, WordPress remains top of the pack and the numbers just keep going up. During WordCamp Asia 2023, Matt Mullenweg, the co-founder of WordPress, confidently stated, "I envision WordPress not only enduring but becoming a fundamental element of the web—100 years from now."

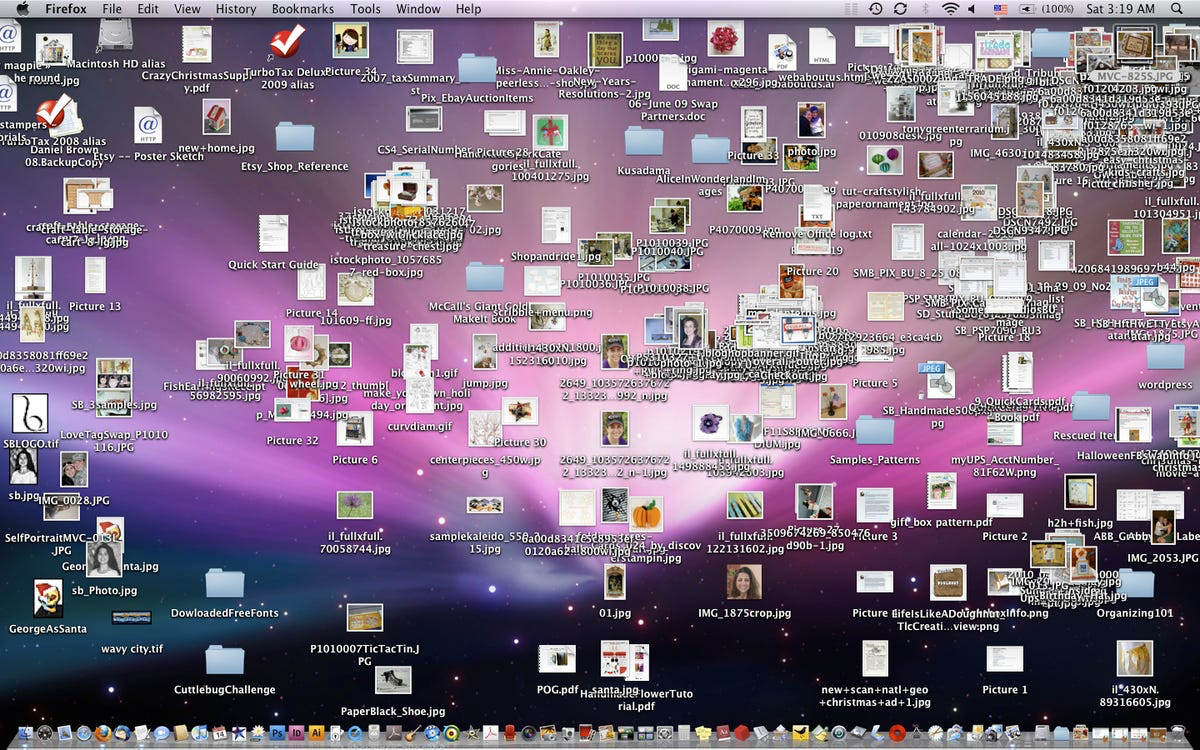

National Clean Your Virtual Desktop Day

Ever heard of the saying that a cluttered space reflects a cluttered mind? Clutter is said to have a negative effect on a person’s mental health. If you’re looking for a sign to start your decluttering journey, October 21, 2024 is it. Take the opportunity to free your mind by clearing (virtual) space.

National Clean Your Virtual Desktop Day was created in 2010 by the Personal Computer Museum of Canada, but it has spread across different parts of the world since then, falling on every third Monday of the month of October.
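For anyone who wants to put the day on a calendar, the "third Monday of October" rule is easy to compute. The following Python sketch uses only the standard library; the function name is made up for this example.

```python
import calendar
from datetime import date


def third_monday_of_october(year: int) -> date:
    """Return the date of the third Monday of October for the given year."""
    first = date(year, 10, 1)
    # Days from October 1 to the first Monday (0 if October 1 is a Monday).
    offset = (calendar.MONDAY - first.weekday()) % 7
    # The third Monday falls two weeks after the first one.
    return date(year, 10, 1 + offset + 14)


print(third_monday_of_october(2024))  # 2024-10-21
print(third_monday_of_october(2010))  # 2010-10-18
```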

Technological advancements know no end. And if you’re working on personal and professional projects, your files are bound to grow in number, especially if you’re one of those people who use one file for the first draft and several rounds of revisions—anyone familiar with Filename_Final_New Final_Final Version_Finalv2_Final Final.extension?

This year’s observance has already laid out a number of activities for anyone interested in participating. First, you will need to back up all your important or relevant files beforehand. Next, you have to archive all your files into organized folders that are clearly and appropriately named; if you can close all opened tabs in the many browsers/windows you use, better. And last but certainly not least, let go: anything you decided didn’t need to be backed up, anything you may have moved into a Trash folder, and anything you will have no use for. All of these need to go.
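If you would rather script the archiving step, here is one possible sketch in Python. It is only an illustration: the function name, the one-folder-per-file-extension layout, and the dry-run default are all assumptions made for this example, and it should only be run after the backup step is done.

```python
import shutil
from pathlib import Path


def tidy_desktop(desktop: Path, archive_root: Path, dry_run: bool = True) -> list[tuple[Path, Path]]:
    """Plan (and optionally perform) moving loose files into folders by extension."""
    moves = []
    for item in sorted(desktop.iterdir()):
        if not item.is_file():
            continue  # leave existing folders alone
        # Assumed layout: one folder per extension, e.g. "docx" or "png".
        folder = item.suffix.lstrip(".").lower() or "no_extension"
        target = archive_root / folder / item.name
        moves.append((item, target))
        if not dry_run:
            target.parent.mkdir(parents=True, exist_ok=True)
            # Note: a file with the same name already at the target may be replaced.
            shutil.move(str(item), str(target))
    return moves
```

Calling `tidy_desktop(Path.home() / "Desktop", Path.home() / "Desktop Archive")` only returns the planned moves, so you can review them first; passing `dry_run=False` actually moves the files. The desktop and archive paths here are placeholders and will differ from system to system.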

Not only will your brain thank you for this, your virtual workspace probably will too. All your hard work during cleanup will be rewarded with more space on your desktop, faster device performance, an organized filing system to adhere to as you work, the unobstructed view of your beautiful (or plain, to each their own) wallpaper, and a fresh start to approach the coming year until the next scheduled cleanup.

October is for cybersecurity

Most day-to-day processes have shifted from traditional platforms to digital, and those who were reluctant to follow that shift were forced to rely more and more on an increasing number of online tools when the pandemic hit. Unfortunately, this shift also meant that cyber crimes and other attacks have become more prevalent than ever. Hence, National Cybersecurity Awareness Month has been celebrated every October since 2004.

Cybersecurity and cyber crimes aren’t the dramatic scenes we see in movies and TV shows where a Robin Hood-esque hacker goes toe to toe with law enforcement. It’s usually simpler than that, but it can also be much worse. Anyone can be a victim, so we all have to be careful.

Something as seemingly ordinary as giving out one’s username and password or clicking an infected link can have grave repercussions, such as identity theft, financial loss, and/or data loss or leaks—all of these leave victims vulnerable to further attacks, cyber or otherwise.

During National Cybersecurity Awareness Month, each week of October corresponds to a specific theme designed to raise awareness and promote cybersecurity best practices. These themes range from simple steps for online safety, and scaling those steps up from a personal level to a much larger one, to recognizing and combating cyber crimes, being vigilant and deliberate about our online connections, and building resilience and securing our systems and infrastructure.

There is no one-size-fits-all solution to cybersecurity threats because technological advancement and evolution wait for no one. Some threats can be prevented with proper knowledge and vigilance. However, it isn’t as easy as isolating an infected seed to prevent the spread.

With continued effort, every October and beyond, the hope is to nip these attacks in the bud.

Pam is a BA Creative Writing graduate who realized midway through uni that she prefers reading books more than writing them. In 2018, she joined Marque Media, a US-based digital marketing agency, where she wrote for clients across various industries, including tech, healthcare, finance, and wellness. Later, she became lead content writer at Mindful Digital Marketers and fleet management content specialist at Expert Market UK.

When not staring at a blank page, Pam is probably busy getting her daily 15k steps in, listening to K-pop, visiting second hand bookstores, and drinking the strongest iced coffee she can get her hands on.
