Cube processors to bring eye-popping speed

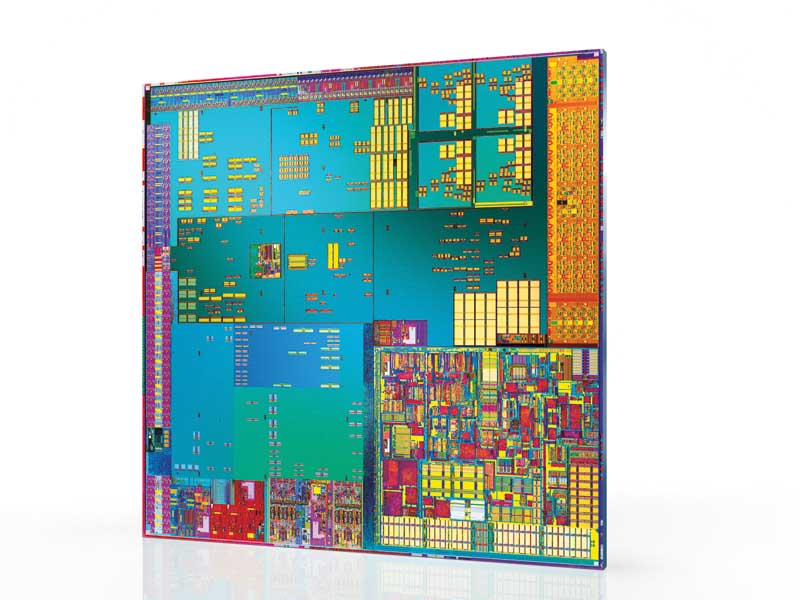

Today's flat, 2D silicon microprocessors are becoming so fast that the time it takes to get a signal from one side of the chip to the other – let alone between processors – is becoming a significant limiting factor.

One way to tackle this is being explored in the field of silicon photonics – replacing electrical connections with faster-moving light – but researchers at the University of Rochester in New York State say they've cracked the problem another way: by creating the world's first working chip in three dimensions.

"I call it a cube now, because it's not just a chip anymore," says Eby Friedman, the University of Rochester's Professor of Electrical and Computer Engineering. "When the chips are flush against each other, they can do things you could never do with a regular 2D chip," he claims.

Build up, not out

Correctly called a 3D NoC (Network on Chip), Friedman's cube isn't several conventional microprocessors simply stacked up using common data and address buses. He and engineering student Vasilis Pavlidis designed their device from scratch. Their NoC has better synchronisation, power distribution and data transfer capabilities than stacked conventional chips. The prototype runs at a respectable 1.4GHz, as described in a paper found at www.tinyurl.com/3uumee.

The reason Friedman's chip is a breakthrough is that you can only pack transistors so tightly in two dimensions before they begin to malfunction. This dictates the upper limit to the processing power that a single chip can command. Friedman says that 3D chips should suffer no such limit.

"Are we going to hit a point where we can't scale integrated circuits any smaller? Horizontally, yes," he says. "But we're going to start scaling vertically, and that will never end. At least not in my lifetime. You should talk to my grandchildren about that."

The architecture the team has designed contains three specially designed silicon chips, and has millions of holes drilled into the insulation that separates them in order to make connections between transistors in different layers. Key to the design has been making all three layers interact like a single chip.

In a press release issued by the University of Rochester, making the three chips work together was described as "like trying to devise a traffic control system for the entire United States – and then layering two more United States above the first and somehow getting every bit of traffic from any point on any level to its destination on any other level."

Major advantages

Overcoming these difficulties was worth the effort, however. "The major advantage [of 3D chips] is the considerable reduction in the length and number of global interconnects," says Friedman. This, he claims, will result in greater performance and lower power consumption.
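To get a rough feel for why stacking shortens connections, consider a toy model that is not taken from the Rochester paper: treat the chip as a grid of 48 blocks, laid out either flat (8 by 6) or as three stacked layers (4 by 4 by 3), and count the average number of grid hops between any two blocks. The grid sizes, the function name `mean_hops` and the assumption that a vertical hop costs the same as a horizontal one are all illustrative choices.

```python
from itertools import product


def mean_hops(dims):
    """Average Manhattan distance between every ordered pair of distinct
    nodes in a mesh with the given dimensions (a toy model only)."""
    nodes = list(product(*(range(d) for d in dims)))
    total = 0
    pairs = 0
    for a in nodes:
        for b in nodes:
            if a != b:
                # Hops needed when traffic moves one grid step at a time.
                total += sum(abs(x - y) for x, y in zip(a, b))
                pairs += 1
    return total / pairs


# Hypothetical layouts with the same number of blocks (48 each).
flat = mean_hops((8, 6))      # one layer
cube = mean_hops((4, 4, 3))   # three stacked layers

print(f"flat 8x6 mesh:   {flat:.2f} hops on average")
print(f"stacked 4x4x3:   {cube:.2f} hops on average")
```

In this sketch the stacked layout needs fewer hops on average than the flat one. Real vertical links between layers are typically far shorter than the horizontal wires on a chip, so counting them as equal hops probably understates the benefit.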

The prototype already shows a power saving of between 58 and 62 per cent compared to a conventional 2D microprocessor of the same speed. Friedman claims that the individual layers needn't even be silicon, giving rise to the possibility of creating some very strange new processors.

Some of the layers in a typical 3D NoC could be used to perform specific functions, such as data storage and image processing, or they may consist of custom chips for use in mobile phones and other consumer goods. Because each layer works in parallel with the others, a future MP3 player might have a layer that converts stored tunes to audio on demand.

Because 3D NoCs are essentially just folded-up circuit boards with shorter connections between them, the chips inside gadgets such as the iPod could potentially be reduced to a tenth of their size while running ten times faster.

How long we may have to wait for the first 'cube PCs' is anyone's guess, but chip designers are already beginning to feel the limitations of 2D. A 3D x86 or even 64-bit design could take the architecture much further by increasing performance while decreasing power consumption.

Of course, this all depends on whether light-based interconnects turn out to be as speedy as Intel would have us believe…

First published in PC Plus, Issue 275
