TechRadar Verdict

SQL Server 2014 is a major upgrade that also has a host of invaluable smaller improvements, but you'll need to invest time in database design to get the most from it.

Pros

- +

Simple conversions for in-memory tables

- +

Fast migration and disaster recovery

- +

Automatic backups

- +

SSD support

Cons

- -

Limitations on stored procedures

- -

Need VPN for high availability

- -

Need SQL Server Enterprise for some features

- -

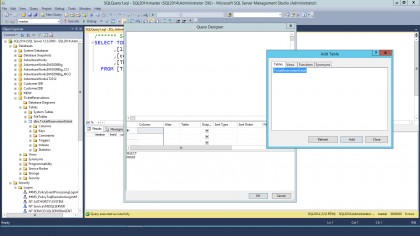

SQL Server management tool looks dated

Why you can trust TechRadar

The latest version of SQL Server is a major new release, but not a disruptive one. You get security and backup improvements that you can start using straight away and you can keep working the way you always have while making the changes needed to take advantage of performance increases.

And instead of making licencing more complicated, Microsoft actually gives you more with the Standard edition of SQL Server 2014. Especially for smaller businesses that don't have a full-time database admin, the new managed backup feature will make things much simpler.

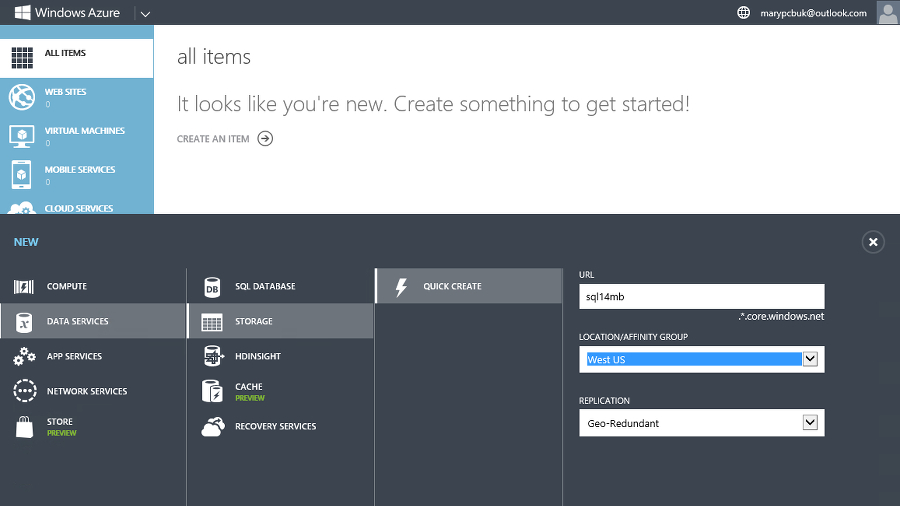

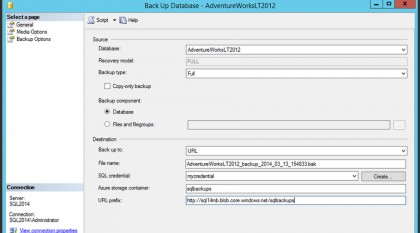

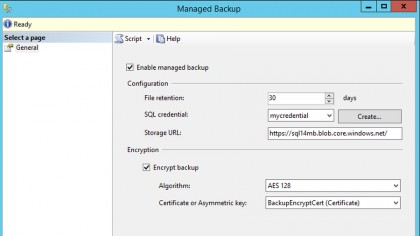

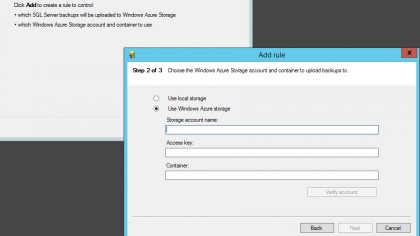

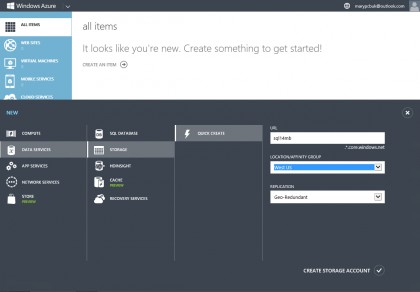

It's simple to turn this on for a database in the interface or using a script. When you do, as soon as you've made enough changes to a database it automatically gets backed up for Azure and stored for up to 30 days; if you change more than 5Mb of data that triggers a log backup, and if you change more than 1GB of data that creates a full backup (and you get a full backup every day to store smaller changes).

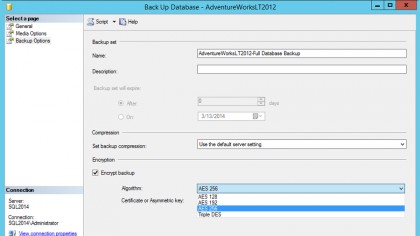

You can also turn on encryption and compression for these backups, which saves you money on the third-party tools you'd have needed before. That means no more worrying about changing tapes or getting backups offsite; you just pay for Azure storage and you'll always have multiple backups.

As automatic backup wasn't in earlier versions of SQL Server, Microsoft has produced a free tool that will monitor your network and automatically copy backup files to Azure for you, so you can have a single cloud backup for all your databases even if you don't upgrade them.

You can move tables you use infrequently to Azure as well as an archive, or keep database replicas there for disaster recovery and that's all integrated into SQL Server 2014; for always-on replicas there's a wizard that sets up all the steps for you.

That gives you options for getting all your data into the cloud (and Microsoft remains committed to matching and sometimes undercutting Amazon prices, so this is a cheap way of getting secure backup).

Sign up to the TechRadar Pro newsletter to get all the top news, opinion, features and guidance your business needs to succeed!

You'll still want a beefy server setup to run SQL Server, especially if you're adding SSD and more memory to get performance improvements and it's unlikely that your network connection will be fast enough to let you work live against data on Azure though.

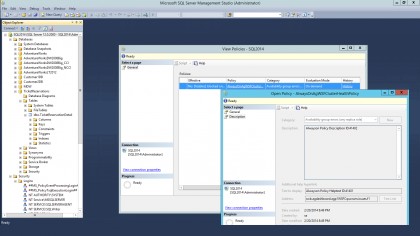

Some new server-level permissions give you more security options. Not only can you let a specific login connect to any database you already have and any new databases you create, you can choose whether you allow one login to impersonate another. Because this applies to the server, you can create the security policy you want and have it automatically apply to all new databases.

It's also good to see support for the ReFS file system introduced in Windows Server 2012; now you can take advantage of the improvements over NTFS for resiliency on your database server as well.

Performance

But the heart of what's new in SQL Server 2014 are the ways it offers dramatically faster database performance.

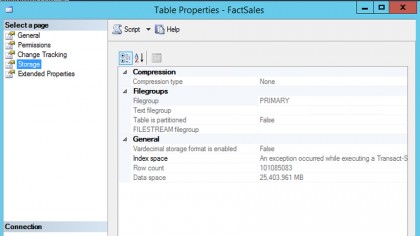

SQL Server 2012 already lets you speed up data warehouse applications by converting key sections to columnstore indexes that put each column in its own set of disk pages, so when you only need to retrieve information from a few columns you don't have to load the whole table to get them; it uses less CPU and is anything up to a hundred times faster, but before you had to remove and recreate the indexed columnstore whenever the data changed.

In SQL Server 2014 they can now be updated instead of needing to be recreated every time something changes, which means you get all the speed without the inconvenience.

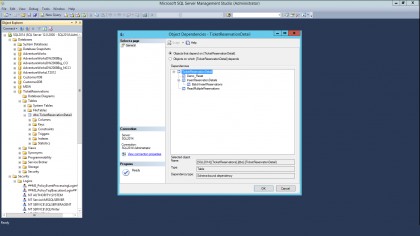

It also has a second, brand new database engine for in-memory data processing that can speed up transactions. This engine, which Microsoft refers to as Hekaton, is just part of SQL Server so you don't pay extra for it or install it separately and you don't even need to code databases differently.

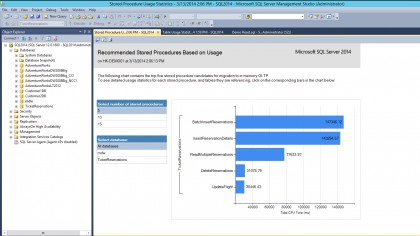

Take a database app you already have and tell SQL Server to analyse it for in-memory use and the Memory Optimisation Analyser will find the tables and stored procedures that will run faster with the new engine, then do the table conversion for you in a matter of minutes (you have to migrate stored procedures yourself in this version).

Unlike just about every other in-memory database system, you don't have to put all the tables in memory, so you can improve performance without needing to have enough physical memory for an entire database.

There are some things that you can't put in memory including cursors, sub-queries, common table expressions, triggers, constraints, foreign keys and sparse columns. It's worth trying to recode your database app to avoid those for the performance gains. On our test databases we saw anything from 10 to 30 times better performance.

The difference is because memory is so much faster than hard disk (and even than SSD), and because the data structure of in-memory tables can be more efficient because it never has to wait to load data halfway through; there are no locks or latches to slow things down.

The difference in performance for applications that can take advantage of Hekaton is enough to take something you used to run once a week and let you run it on demand, giving you real-time access to something you used to have to batch up and run when the database wasn't busy.

Put that behind an ecommerce store and not only can you process purchases more quickly, but you could also calculate recommendation lists like top ten purchases or accessories other customers bought with a product in real time rather than loading a canned list – which means you can take advantage of trends as soon as they start.

And because this isn't an esoteric solution with a different way of building database apps, it's something you can take advantage of quickly. SQL Server 2014 has the potential to make in-memory processing mainstream rather than only for specialist developers.

Even if you don't use the in-memory options your databases can speed up in SQL Server 2014. New support for using SSDs to extend your buffer pool (up to 500Gb) gives you another way to speed up databases reasonably cheaply.

If you have 64GB of RAM and you drop in a 256GB SSD, you could see your database app run two or three times as fast as before. This is a different system from Storage Spaces, which get SSD support in Windows Server 2012 R2, so you do need to manage the resources separately.

The Resource Governor can finally control physical IO to avoid bottlenecks; you can set the amount of IO that a query is allowed to consume and if it goes over that (which usually means it's a badly formed query that isn't going to give you useful results) it will get stopped instead of running until it uses up so many resources the system hangs or crashes.

Delayed Durability doesn't actually make a database faster, but it makes it more responsive. Usually, when you update a record you have to wait until the change you made is saved into the log (in memory) and then the log itself is saved to disk.

Delayed Durability lets you carry on working with the database before the log record gets saved; in the worst case, a crash at the wrong moment would lose the data that was still in memory so you can choose whether this applies to the whole database or less critical operations, but if logging makes your database appreciably slower you can make it feel much faster without investing in extra hardware.

The performance improvements are where you'll care most about licencing. You need the Enterprise edition to get the in-memory online transaction processing of Hekaton (as well as the improvements to high availability), but the Standard edition gets the SSD support and can use 128GB rather than 64GB of physical memory.

That removes a limit that has made SQL Server Standard less and less appealing and means that you can run more demanding database loads without needing the Enterprise edition.

Mary (Twitter, Google+, website) started her career at Future Publishing, saw the AOL meltdown first hand the first time around when she ran the AOL UK computing channel, and she's been a freelance tech writer for over a decade. She's used every version of Windows and Office released, and every smartphone too, but she's still looking for the perfect tablet. Yes, she really does have USB earrings.