Microsoft Cortana: reaching new levels of voice recognition

The feather in the cap of Microsoft Research


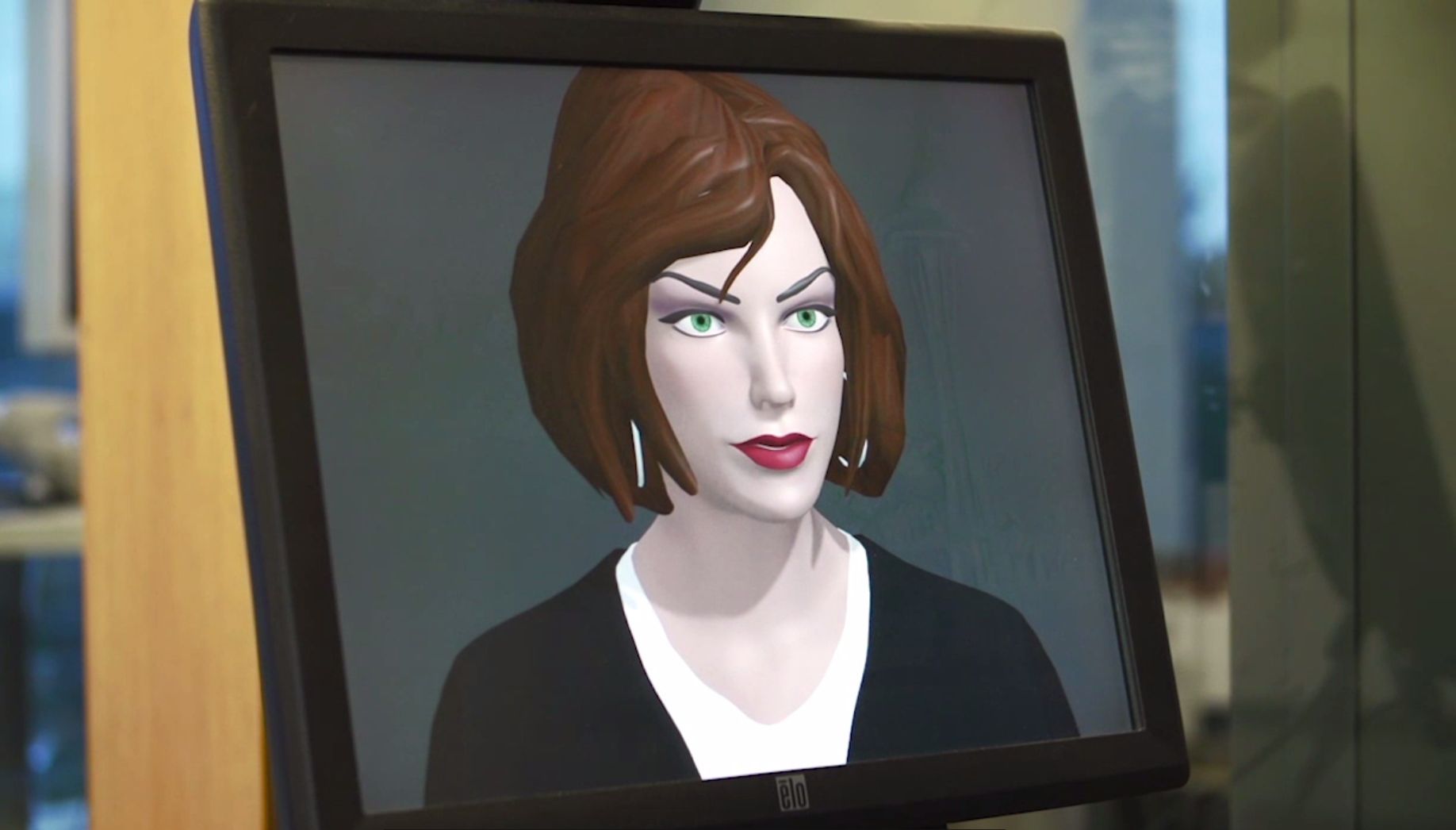

If you like the look of Cortana but don't want to switch to Windows Phone, Microsoft's director of research Peter Lee has some good news for you.

What you see on the phone today is "just one of the many user experiences we will have for this," he told us at the recent Future in Review conference. "Cortana is going through this extended beta process. It's available on phones first and we're giving it a period to learn before it's made available more widely."

Voice recognition is pretty much a solved problem, he suggests.

"We are really getting to the point where computers are able to transcribe human speech in real time better than humans can. As soon as we knock down some engineering details having to do with [dealing with] lots of microphones, this will reach PCs and tablets and phones."

New levels of recognition

But what Microsoft is working on is bigger than just voice search or even a friendly personal assistant. It is what he calls "the basic building blocks for natural interaction. Can you talk to it and have it understand you? Can it watch you and understand you? Can it understand what's going to feel creepy, what's going to feel pressured?

"Can we mine all the data we have access to - the web, the millions of interactions users have with our systems - and out of that extract a basic form of intelligence and put it in a substrate that can be surfaced [in different ways]?"

What's different about Cortana isn't the accuracy of recognition or even the humour that's built into it (Microsoft Research developed a system called Chitchat which Lee calls "lightweight machine learning in constant evolution so it's able to increase the number of domains Cortana is able to 'chit chat' with you about").


It's the system's ability to learn, and keep on learning, without needing to be reset for accuracy. "Today, an artificial intelligence system goes through a huge training [process] in a back room until it's ready and then it gets deployed. But over time you have a process of 'machine learning rot' and they have to go through retraining."

Fluid learning

Think of it like taking a car in for maintenance. The hope is that Cortana will be more self-maintaining. "Cortana is continuously learning; if Justin Bieber does something in the morning, in the evening Cortana can talk to you about it."

There are certainly limitations and things Cortana can't do. "While we have this tremendous optimism, it is all based on machine learning, based on finding correlations in large amounts of noisy data. It is powerful but it is something that can lead to false inference."

Set a machine learning system to watch video of millions of people walking down the street and it's not going to know why things are happening or that one thing causes another, just that they happen one after another; it doesn't rain because people have opened their umbrellas, but a machine learning system might conclude that.

"Crossing the chasm from correlation to causal inference is one area [we haven't yet solved]."
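To make the umbrella example concrete, here is a minimal toy simulation, not anything Microsoft has described. It assumes a hypothetical world where rain is decided first (30 per cent of days) and most people open umbrellas only when it rains. Looking at the data alone, umbrellas "predict" rain perfectly; only when we intervene and force every umbrella open does it become clear that umbrellas do not cause rain. The function names and probabilities are made up for illustration.

```python
import random


def simulate(days, force_umbrellas=False, seed=0):
    """Return (umbrella_open, raining) pairs from a hypothetical toy world.

    Rain is the cause; umbrellas are the effect. With force_umbrellas=True
    we intervene and open every umbrella regardless of the weather.
    """
    rng = random.Random(seed)
    observations = []
    for _ in range(days):
        raining = rng.random() < 0.3  # assumed base rate of rainy days
        if force_umbrellas:
            umbrella = True  # intervention: umbrellas open no matter what
        else:
            # Assumed behaviour: 90% of the time people respond to rain.
            umbrella = raining and rng.random() < 0.9
        observations.append((umbrella, raining))
    return observations


def p_rain_given_umbrella(observations):
    """Fraction of umbrella-open days on which it was raining."""
    rainy = [raining for umbrella, raining in observations if umbrella]
    return sum(rainy) / len(rainy)


def p_rain(observations):
    """Overall fraction of rainy days."""
    return sum(raining for _, raining in observations) / len(observations)


if __name__ == "__main__":
    watched = simulate(10_000)
    forced = simulate(10_000, force_umbrellas=True)
    # Passive observation: an open umbrella always coincides with rain.
    print("observed  P(rain | umbrella):", p_rain_given_umbrella(watched))
    # Intervention: opening umbrellas leaves rain at its base rate.
    print("forced    P(rain | umbrella):", p_rain_given_umbrella(forced))
    print("base rate P(rain):           ", p_rain(forced))
```

A system that only watches would report a perfect correlation; the forced run shows the rain rate stays near its base rate however many umbrellas are opened, which is the gap between correlation and causal inference that Lee describes.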

Another thing that's missing is what he calls 'situated understanding': "If we're talking together, everyone in the room can look at us, see two people talking and realise the situation; that's beyond Cortana."

Robot trials

Microsoft Research has made some progress here, with a robot receptionist in its building on the Redmond campus. "If two people walk up to it together it tries to understand if those people are talking to each other." The system also learned to spot which people are hanging around in the lobby talking and who wants to take the elevator.

Mary started her career at Future Publishing, saw the AOL meltdown first hand the first time around when she ran the AOL UK computing channel, and she's been a freelance tech writer for over a decade. She's used every version of Windows and Office released, and every smartphone too, but she's still looking for the perfect tablet. Yes, she really does have USB earrings.