
Autodesk Flow Studio: I explored everything the new Wonder 3D tools can do

Explore all parameters available to you

In my previous article covering Autodesk Flow Studio’s new Wonder 3D tools (read it here), I showed you where they’re located in the interface and what the three main tools, now part of the free tier, can do for you.

As there’s a lot going on there, I thought it would be worth going deeper and exploring every available parameter.

Sure, you could just stick to the default settings, and truth be told, that’s almost always the best option here. But where’s the fun in that? So let’s look under every rock and shine a light into all those dark corners… virtually speaking.


Accessing Flow Studio

If you’re new here, Flow Studio’s new Wonder 3D tools use text prompts or images to create either 2D images or 3D models. If you know generative AI, you’ll understand the concept. Although most subscription tiers are paid, you can access some of the tools for free.

There are limitations on the free plan, as you’d expect. For instance, you only get 300 credits each month, and as each AI generation costs 20 credits, you’re limited to a maximum of 15 creations. However, there’s still plenty to experiment and have fun with, giving you a real feel for how the service works before you decide whether to open your wallet.
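To make the free-tier budget concrete, here is a small Python sketch of the arithmetic. The 300-credit allowance and 20-credit cost come from the paragraph above; the three-step workflow in the example (Text to Image, one Edit Image pass, then Generate Mesh) and the function names are purely illustrative, and the sketch assumes each of those requests costs the same 20 credits.

```python
# Free-tier figures quoted in this article.
MONTHLY_CREDITS = 300
COST_PER_GENERATION = 20


def max_generations(credits: int, cost: int = COST_PER_GENERATION) -> int:
    """Number of whole generations a credit balance can pay for."""
    return credits // cost


def credits_left(credits: int, steps: list[str], cost: int = COST_PER_GENERATION) -> int:
    """Balance remaining after paying for each step of a (hypothetical) workflow."""
    return credits - len(steps) * cost


workflow = ["Text to Image", "Edit Image", "Generate Mesh"]
print(max_generations(MONTHLY_CREDITS))         # 15
print(credits_left(MONTHLY_CREDITS, workflow))  # 240
```

Integer division (`//`) is used because you can only pay for whole generations: a balance of 310 credits would still buy just 15.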

You can check out Flow Studio by clicking here.

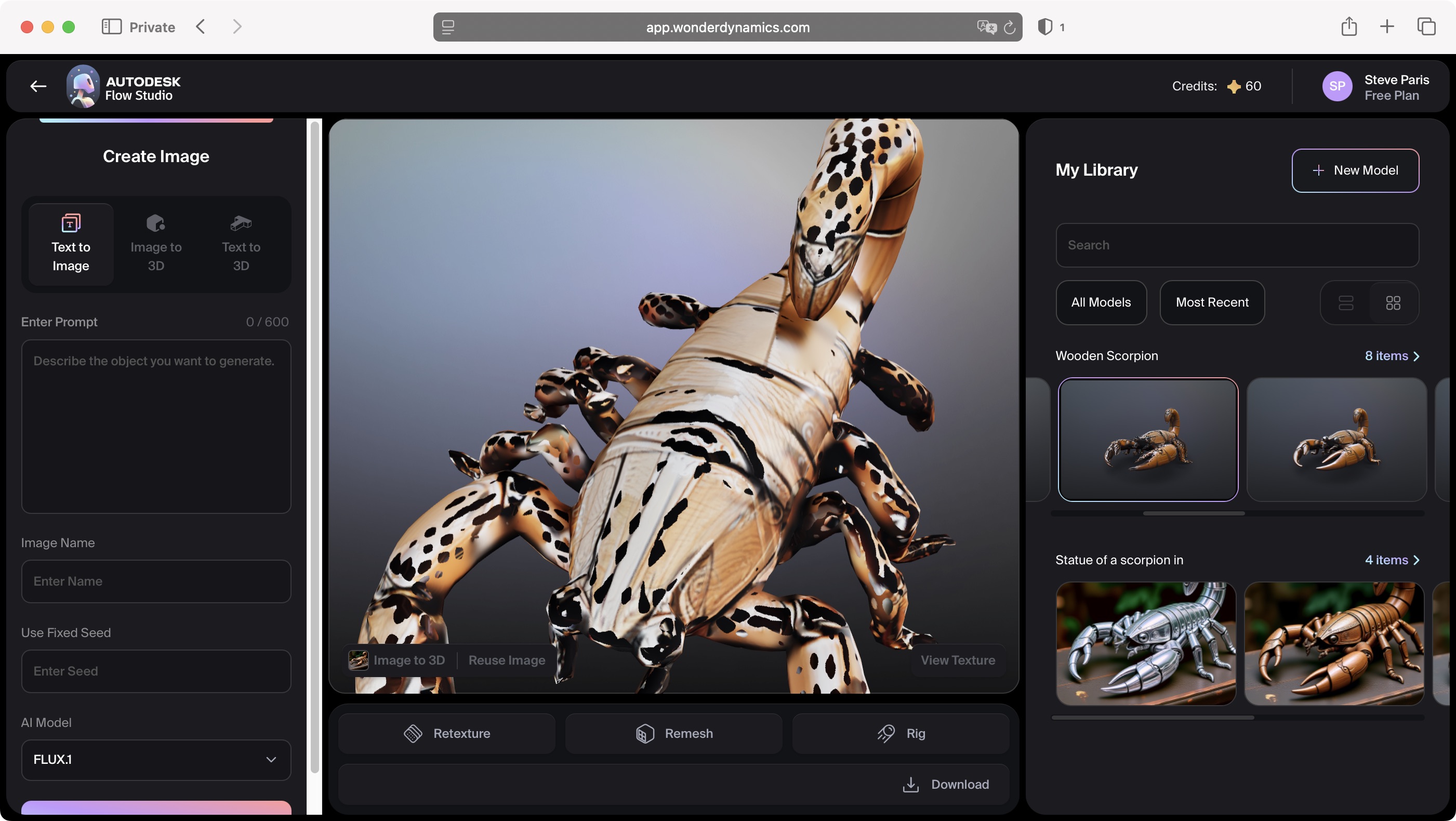

Text to Image

Let’s take a look at the simplest tool: Text to Image. As this is generative AI, you type what you’d like to see, and Flow Studio will get cracking for 20 credits. We’ve already explored this in the previous article, and the process works as you’d expect. But there are a few features here I think are worth looking at more closely.

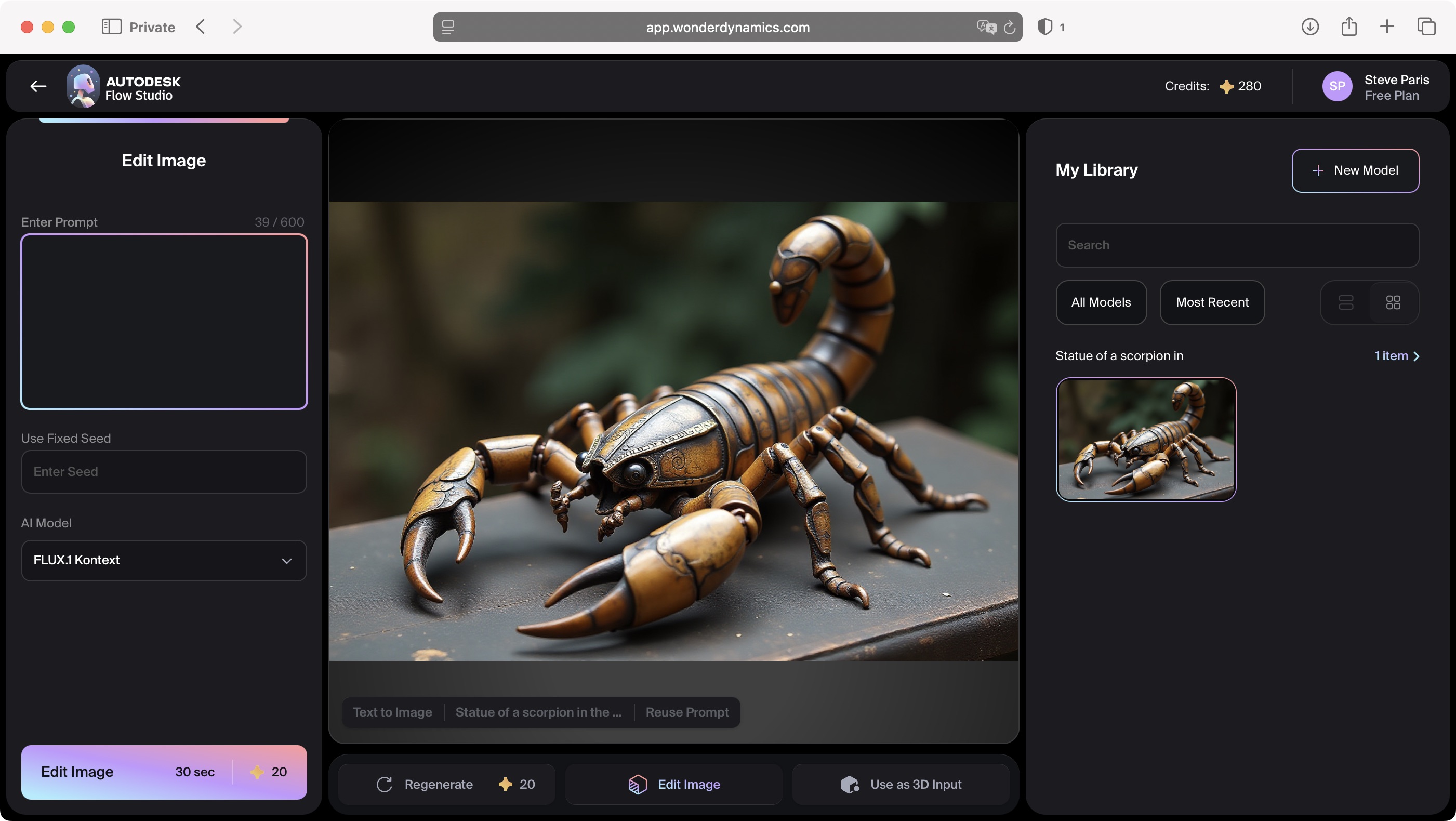

For instance, I asked Wonder 3D to create a “statue of a scorpion in the steampunk style”. I quite liked the result, but I wanted to refine it.

How do you do that without starting from scratch? As we all know, running the exact same text prompt again won’t give you the same output; that’s the beauty of generative AI.

However, the clever engineers at Autodesk thought of that, and under the current image are three buttons. The middle one is labeled “Edit image”.

Now, clicking that button won’t bring up a series of Photoshop-like tools or anything like that. Instead, you’re back at the usual text prompt, except the left sidebar now reads “Edit Image” rather than “Create Image”.

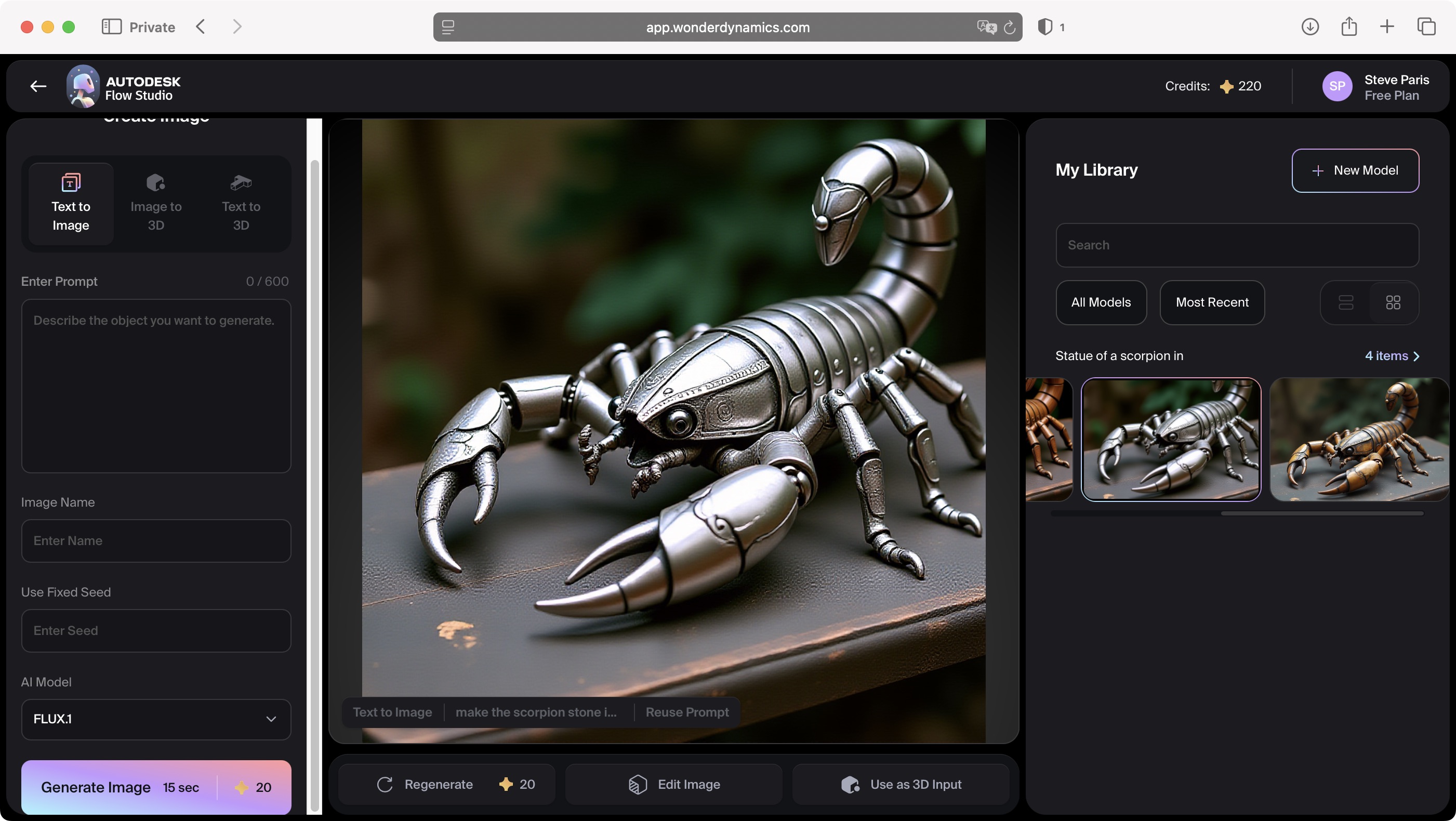

So, I typed “make the scorpion silver instead of copper”, and it did. The resulting image wasn’t identical: for some reason my scorpion was now more zoomed in, but its angle was the same, as were the book it was resting on and the background. The only real change was that it was now silver instead of copper.

As you’d expect, every single request, even those whose results don’t satisfy you, will cost you 20 credits. On the plus side, all these variants are preserved in your Library, so you can go back to any of them at any time. I had fun making my scorpion out of other materials: wood worked fine, but for some reason it couldn’t handle crystal or stone. Your mileage will vary, of course, as what we refer to as AI isn’t perfect. Not yet, anyway.

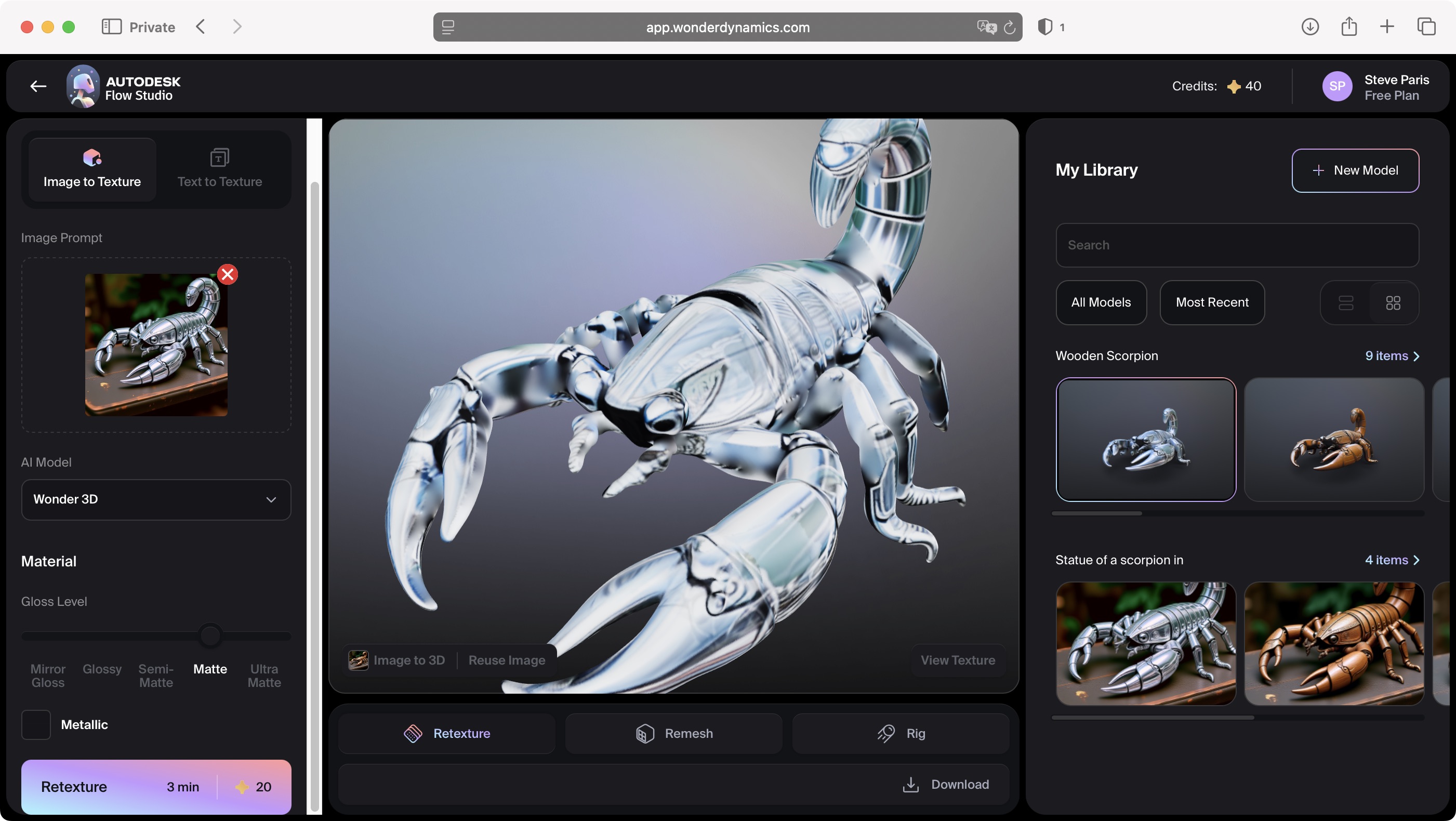

Image to 3D

Those of you who’ve read my previous article will know where we’re going next: provide an image for Flow Studio to convert into a 3D object. I experimented a few times with it, and was really impressed with the results, but what if the object you need doesn’t exist?

Let’s take my wooden scorpion statue. As it’s not a real-world object, I can’t take a photo of it to feed the algorithm… except I do have a photo of it: Wonder 3D made one for me. There’s also no need to download it and re-upload it. As long as I’m still in ‘Text to Image’ mode, all I need to do is select the image from my online Library and click the ‘Use as 3D Input’ button at the bottom of the image.

Doing so may not appear to change anything, as the interface looks pretty much the same. But if you look at the top of the left sidebar, you’ll notice you’ve been switched to the ‘Image to 3D’ section, and my scorpion has been added to the Image Prompt automatically.

Now the 3D process hasn’t started yet, since you need to pay the piper his 20 credits, and thankfully Flow Studio doesn’t just grab those without authorisation.

At the bottom of the left sidebar, the little pink button now says ‘Generate Mesh’. When you’re ready to pay for the work, click on it (but don’t forget to give your forthcoming 3D creation a name first; for some reason, unlike in the image section, that’s not done automatically for you).

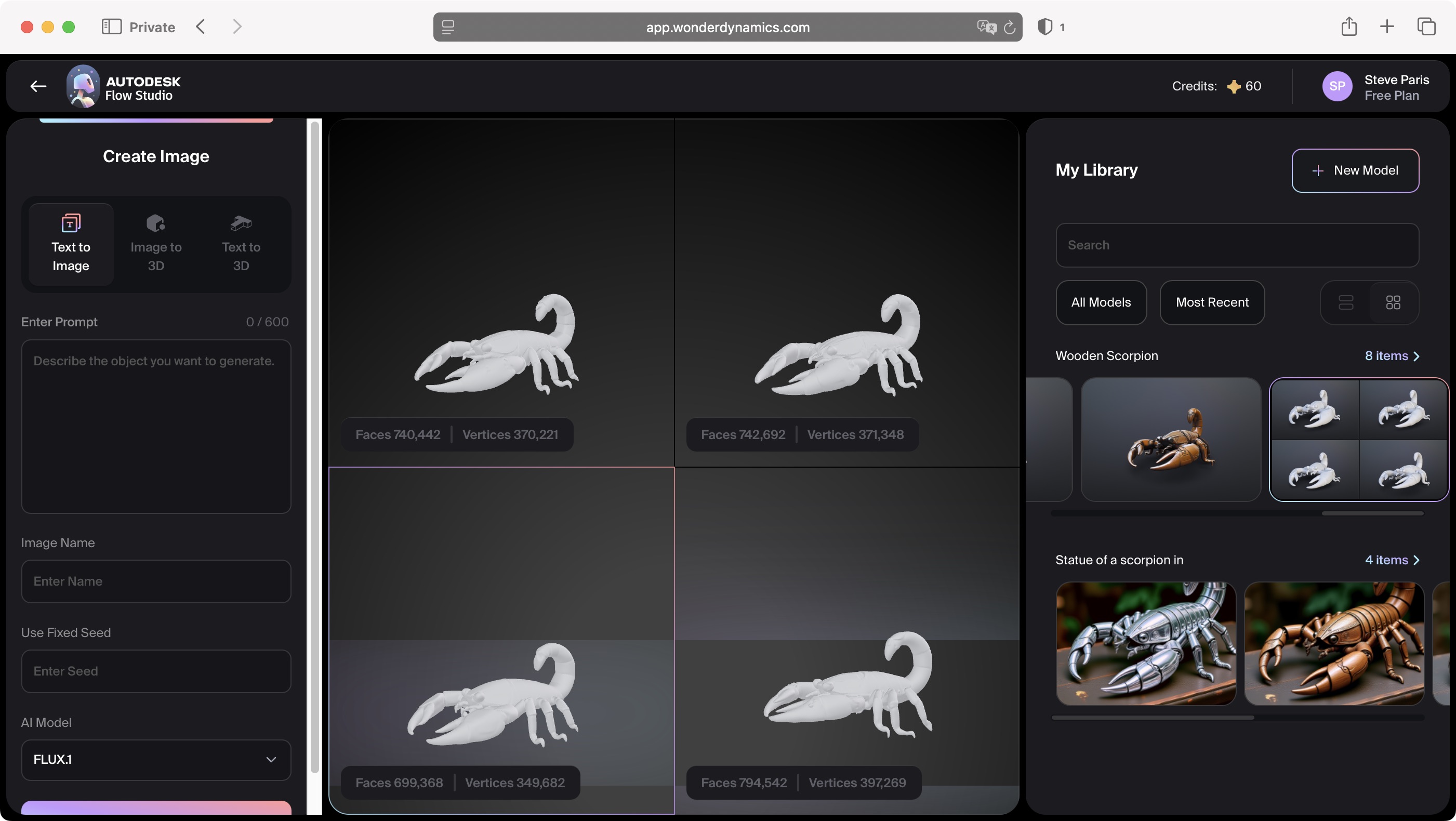

Give the algorithm a few minutes, and you’ll end up with four variants to choose from. From here on, the process for ‘Image to 3D’ (which we’re currently exploring) is identical to that of ‘Text to 3D’, the third tool available for free. The biggest difference is that ‘Text to 3D’ offers four visibly different variants based on your text prompt.

When the algorithm works from an image, as it does here, the differences come down primarily to the number of faces and vertices used to create the mesh.

3D Model Generation Options

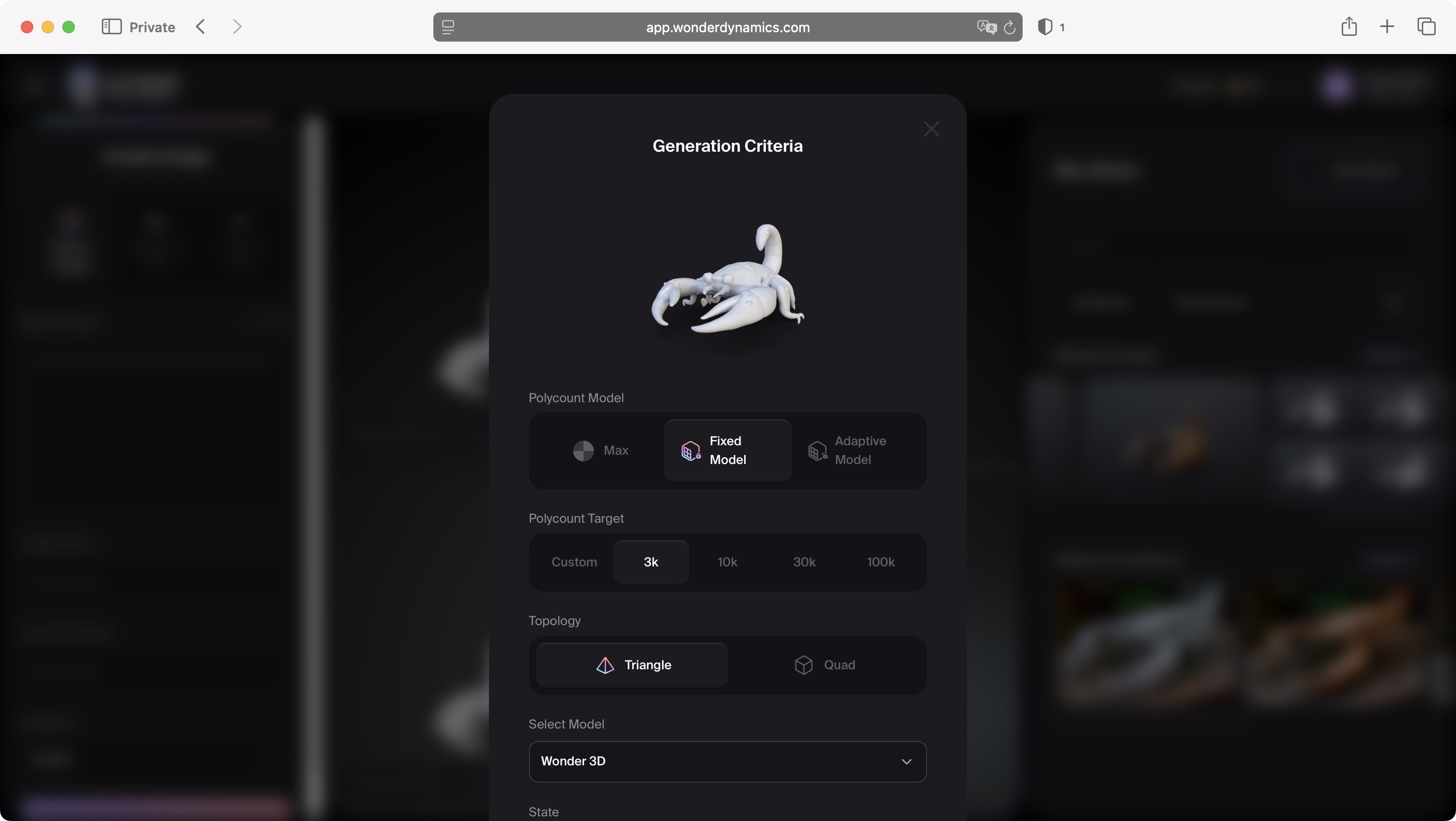

Once you’ve selected one of those four variants, you’re given multiple options. This is essentially where the texture is applied and the model is rendered, although you can also render the model without any texture at all, which in this case would leave me with an albino scorpion.

It’s an option.

Of interest are the ‘Polycount Model’ and ‘Topology’ parameters. What’s the difference between ‘Max’, ‘Fixed Model’ and ‘Adaptive Model’, for instance? And how different would the model be if I used ‘Quad’ instead of ‘Triangle’ for the topology?

By default, ‘Max’ and ‘Triangle’ are pre-selected.

Obviously, if you’re familiar with 3D modelling, you’ll know exactly what those terms mean, but if you’re new to all of this, I’ll explain what they are a little further down.

As a side note, the free tier can only perform one action at a time, so I had to wait for each generation to complete before I could manually start the next.

On a paid subscription, you can instruct Flow Studio to perform these actions concurrently: the Lite tier lets the service work on 5 generations at a time, Standard on 10, Pro on 15, and Enterprise on 20. There’s always an advantage to paying, although remember: patience is also a virtue.
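To see what those concurrency limits mean in practice, here is a rough Python estimate of how long a batch of generations would take on each plan. The per-plan limits come from the paragraphs above (with the free tier at one); the three-minute duration is an assumption taken from the upper end of the roughly 2 to 3 minutes most 3D requests take, and the helper names are hypothetical. It also assumes every generation takes the same time, so jobs run in full "waves".

```python
import math

# Concurrent generations per plan, as listed in the article (free tier: one at a time).
CONCURRENCY = {"Free": 1, "Lite": 5, "Standard": 10, "Pro": 15, "Enterprise": 20}


def waves(jobs: int, plan: str) -> int:
    """Number of back-to-back rounds needed to run `jobs` generations on `plan`."""
    return math.ceil(jobs / CONCURRENCY[plan])


def estimated_minutes(jobs: int, plan: str, minutes_per_job: float = 3.0) -> float:
    """Rough wall-clock estimate, assuming every generation takes the same time."""
    return waves(jobs, plan) * minutes_per_job


for plan in CONCURRENCY:
    print(plan, waves(12, plan), estimated_minutes(12, plan))
```

With a dozen generations queued, the free tier needs twelve rounds while Lite needs three, which is where the paid tiers really earn their keep.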

You can preview these 3D models exactly as you would the images Wonder 3D created: click on the thumbnail of the model you’re interested in, and it’ll appear in the centre of the interface.

Unlike a static image, however, a model gives you a certain degree of control when previewing it: using your laptop’s trackpad or your mouse, you can rotate it in three dimensions to see what it looks like from behind, above, below, and every angle in between. You can also zoom in to get up close with your model.

This is where you can start to appreciate the differences between the various options. The most noticeable, for my scorpion, came when I reduced the polygon target from 30,000 to 3,000 (i.e., from the maximum to the minimum allowed): with so few polygons, the model couldn’t be rendered properly and was left riddled with holes.

As for the other renders, they appeared at first glance to be much of a muchness... but that’s because the right choice depends on what you plan to do with the finished model.

Fixed, Adaptive, or Max

This is where it gets a little technical. Let’s start with the type of polygon model you're after: do you want Fixed, Adaptive, or Max?

Fixed means every part of your model gets the same level of detail (polygon density), irrespective of how complex a particular area is. So whether one section is a smooth surface and another a complex curved shape, both get the same number of polygons. This works well for simple objects but can be an issue for more complex ones. It is, however, a good option for creating game assets.

Adaptive is cleverer than that: it allocates a different number of polygons to different areas based on their complexity. This does create a more complex model, and is ideal for detailed constructs.

Max appears to take Adaptive and put a cap on it, restricting how many times a polygon can be subdivided into smaller ones. This prevents potential system crashes: without constraints, an overly keen Adaptive render might keep creating smaller and smaller polygons to produce ever greater detail, exceeding what your computer can handle when rendering a scene.

And what about Triangles and Quads? Quads are four-sided polygons, and are best suited to models destined to be animated, or to organic modelling. Triangles, on the other hand, are preferred for rigid models, such as a chair or a weapon.
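To make the difference between the three modes more tangible, here is a toy Python model of them, based on my reading above rather than on any documented Autodesk algorithm. It assumes two made-up regions of a mesh (a flat base and a curled tail), that each round of subdivision splits every triangle into four, and that each round halves a region's remaining error. Fixed applies one level everywhere, Adaptive subdivides each region until it meets a tolerance, and Max does the same but stops at a cap.

```python
from dataclasses import dataclass


@dataclass
class Region:
    name: str
    base_faces: int  # triangles in this region before any subdivision
    error: float     # hypothetical deviation from the ideal surface


def faces(base_faces: int, level: int) -> int:
    # Each round of midpoint subdivision splits every triangle into four.
    return base_faces * 4 ** level


def levels_needed(error: float, tolerance: float) -> int:
    # Assumption: every subdivision round halves the remaining error.
    if tolerance <= 0:
        raise ValueError("tolerance must be positive")
    level = 0
    while error > tolerance:
        error /= 2
        level += 1
    return level


def fixed_mode(regions: list[Region], level: int) -> dict[str, int]:
    # Same subdivision level everywhere, regardless of local complexity.
    return {r.name: faces(r.base_faces, level) for r in regions}


def adaptive_mode(regions: list[Region], tolerance: float) -> dict[str, int]:
    # Each region is subdivided only as far as its own complexity requires.
    return {r.name: faces(r.base_faces, levels_needed(r.error, tolerance)) for r in regions}


def max_mode(regions: list[Region], tolerance: float, max_level: int) -> dict[str, int]:
    # Adaptive, but no region may be subdivided beyond max_level.
    return {
        r.name: faces(r.base_faces, min(levels_needed(r.error, tolerance), max_level))
        for r in regions
    }


regions = [Region("flat base", 100, 0.5), Region("curled tail", 100, 8.0)]
print(fixed_mode(regions, level=2))
print(adaptive_mode(regions, tolerance=0.5))
print(max_mode(regions, tolerance=0.5, max_level=2))
```

With these made-up numbers, Fixed gives both regions 1,600 triangles, Adaptive leaves the flat base at 100 while pushing the tail to 25,600, and Max keeps the base at 100 but caps the tail at 1,600.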
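One practical consequence of the two topologies: any quad can be split into two triangles along a diagonal, whereas an arbitrary triangle mesh can't always be paired back into quads. The minimal Python sketch below, which uses a hypothetical list-of-vertex-indices representation rather than any Flow Studio export format, shows the easy direction, and why a quad mesh has half as many faces as its triangulated equivalent.

```python
Quad = tuple[int, int, int, int]
Triangle = tuple[int, int, int]


def triangulate_quads(quads: list[Quad]) -> list[Triangle]:
    """Split each quad (a, b, c, d) into two triangles along its a-c diagonal."""
    triangles: list[Triangle] = []
    for a, b, c, d in quads:
        # Both halves keep the quad's original winding order.
        triangles.append((a, b, c))
        triangles.append((a, c, d))
    return triangles


# Two quads sharing the edge between vertices 1 and 2.
quads = [(0, 1, 2, 3), (1, 4, 5, 2)]
print(triangulate_quads(quads))  # [(0, 1, 2), (0, 2, 3), (1, 4, 5), (1, 5, 2)]
```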

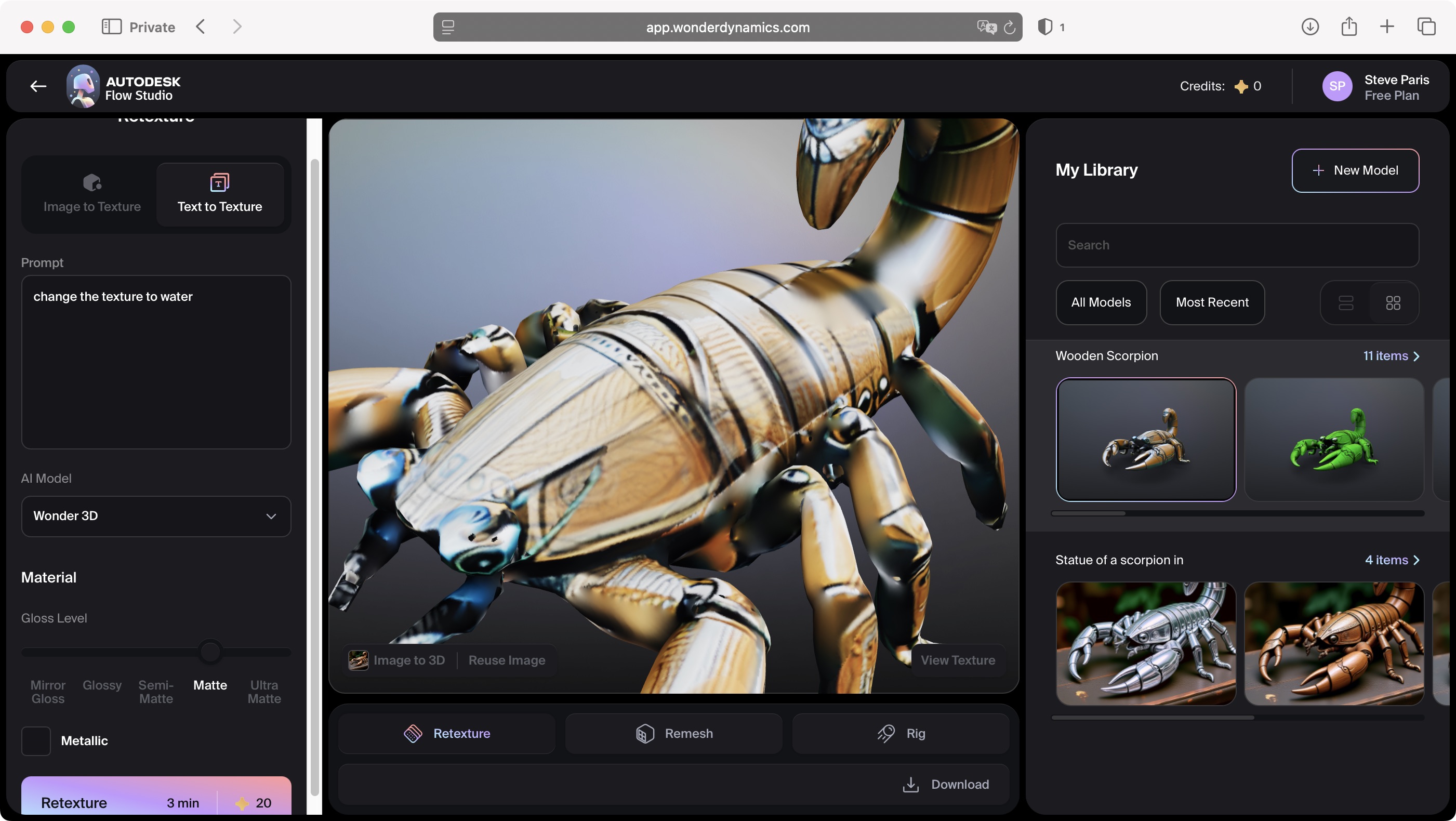

Retexture

The other buttons in that same region of the interface are Remesh, which takes you back to the rendering options I mentioned above (but in the sidebar instead of a floating window), and Retexture.

That last one’s pretty obvious: it lets you remove the model’s skin and apply another one, but I couldn’t manage to get it to accept images of grass or water (yes, I wanted a grass or water scorpion).

However, using an image of the same scorpion I created earlier, only with a different finish (metal instead of wood in this case), worked like a charm. It’s not clear to me why some images worked and others didn’t.

There is another retexture option: ‘Text to Texture’. That’s right, the good old text prompt is there to do your bidding. Instead of a grass scorpion, though, I got a green one, and my water scorpion just had hints of blue here and there. Not too bad actually, but not what I was after.

Coming soon

“If some of these options are ideal for animated models,” I hear you ask, “surely that means you can set articulation points for your model?”

Technically, yes. That’s called a rig: a digital 3D skeleton added to your model that defines which parts can articulate and which can’t.

However, just like the List View in your Library, there’s a button for that: it’s labelled Rig and sits at the lower right of the main preview section. Sadly, as of this writing, it doesn’t do anything, as the feature isn’t ready to be unveiled.

Conclusion

One thing to bear in mind: despite some features that don’t quite work as expected, and others that aren’t functioning at all, these tools work incredibly fast. It takes seconds to create an image, and a handful of minutes (around 2 or 3 for most requests) to get a complex 3D model based on either an image or a text prompt.

This allows you to turn ideas into actual digital objects fast, with options to edit those creations to fine-tune what you’re after, all from a page in your web browser.

That’s impressive, and even if you know little about 3D rendering, you can see the potential for channeling your creativity here.

With that in mind, in my next article I’ll be exploring whether it’s possible to create a usable model, fine-tune it, and (hopefully) export it with only 300 credits.

Steve has been writing about technology since 2003. Starting with Digital Creative Arts, he's since added his tech expertise at titles such as iCreate, MacFormat, MacWorld, MacLife, and TechRadar. His focus is on the creative arts, like website builders, image manipulation, and filmmaking software, but he hasn’t shied away from more business-oriented software either. He uses many of the apps he writes about in his personal and professional life. Steve loves how computers have enabled everyone to delve into creative possibilities, and is always delighted to share his knowledge, expertise, and experience with readers.
