Moore's Law: how long will it last?

As long as the laws of physics allow

In 1965, future Intel co-founder Gordon E. Moore published a paper entitled 'Cramming more components onto integrated circuits'.

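In it, he made an historic technology prediction, which you can boil down to a simple statement: 'The number of transistors incorporated in a chip will approximately double every 24 months'. (Strictly, the 1965 paper forecast a doubling every year; Moore revised the rate to every two years in 1975.)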

Moore could only guess at the impact of this transistor-doubling. In his original paper, written in an age of cabinet-sized mainframes and $16,000 minicomputers, he suggested that new transistor-based integrated circuits would lead to "such wonders as home computers - or at least terminals connected to a central computer - automatic controls for automobiles, and personal portable communications equipment."

Fast-forward to today and we have powerful desktop PCs and ultra-thin laptops, self-driving cars (almost), smartphones and tablets.

For the first 30 years of microprocessor development, clock speeds ramped up from 1MHz to 5GHz - a 5,000-fold increase. But Moore's Law isn't strictly about performance.
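
To put those clock-speed endpoints in Moore's Law terms, the short sketch below takes the 1MHz and 5GHz figures and the 30-year span quoted above and asks how often speed would have had to double if growth had been a smooth exponential. The smooth-growth model and the helper names are assumptions made for illustration; real clock speeds rose in uneven jumps and then plateaued.

```python
import math

# Endpoints and span as quoted in the text.
START_HZ = 1e6   # roughly 1MHz
END_HZ = 5e9     # 5GHz
YEARS = 30


def fold_increase(start_hz: float, end_hz: float) -> float:
    """Hypothetical helper: ratio of final to initial clock speed."""
    return end_hz / start_hz


def implied_doubling_period(start_hz: float, end_hz: float, years: float) -> float:
    """Hypothetical helper: years per doubling, assuming smooth exponential growth."""
    doublings = math.log2(end_hz / start_hz)
    return years / doublings


if __name__ == "__main__":
    print(f"{fold_increase(START_HZ, END_HZ):,.0f}-fold increase")
    print(f"one doubling every {implied_doubling_period(START_HZ, END_HZ, YEARS):.1f} years")
```

This prints a 5,000-fold increase and one doubling roughly every 2.4 years, which is in the same ballpark as the two-year transistor-doubling rate, even though the law itself is about transistor counts rather than speed.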

Show me the money

It's about economics. "What I was trying to do [in the paper]," Moore explained in 2005, "was to get across the idea that this was the way electronics was going to become cheap… you could see the changes that were coming, make the yields go up, and get the cost per transistors down dramatically."

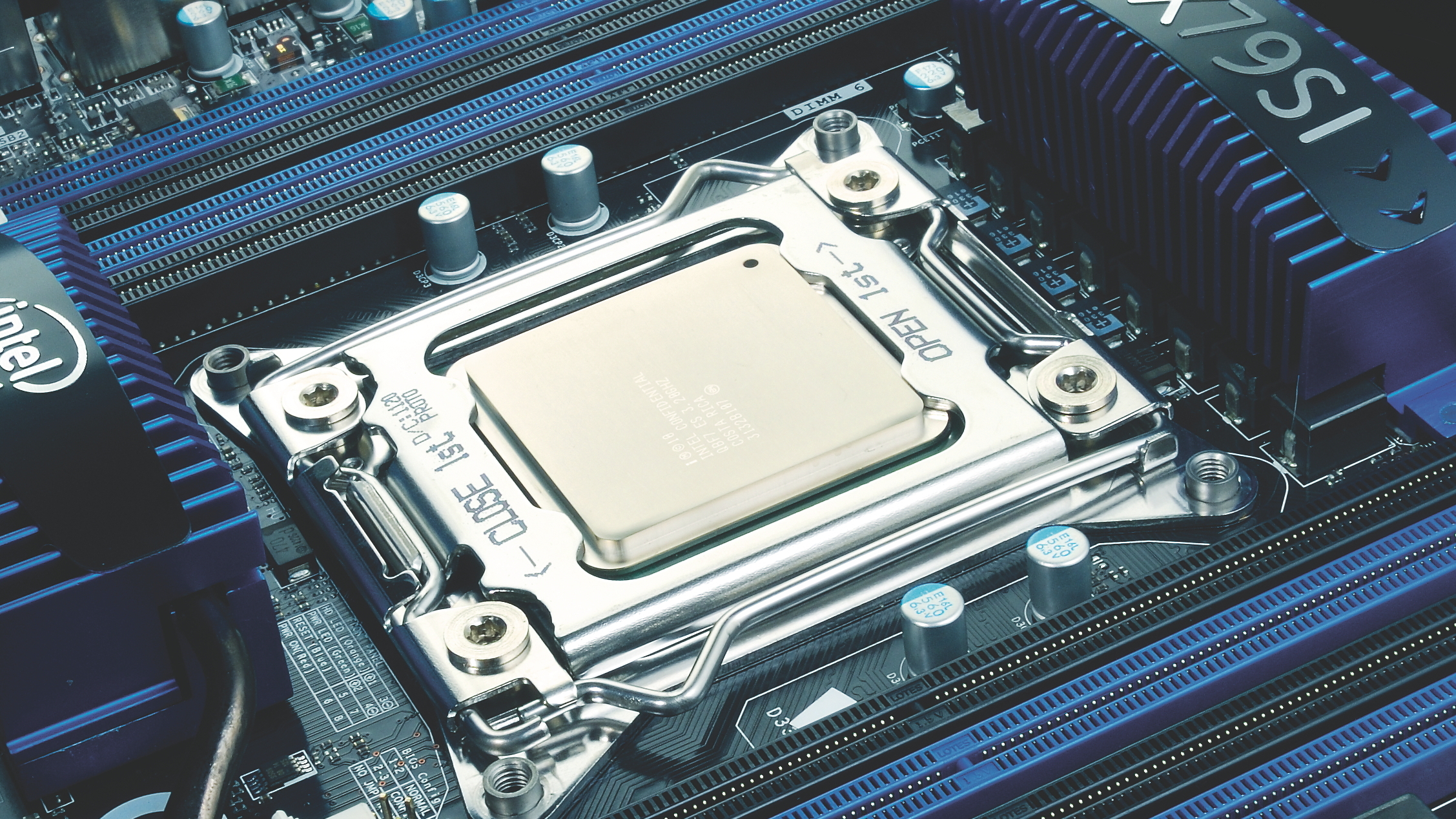

Amazingly, Moore's Law has remained applicable to the semiconductor industry for almost 50 years, from the 2,300 transistors in Intel's 10-micron (10,000nm) 4004 microprocessor to the billions of 3D Tri-Gate transistors crammed into its 22nm Ivy Bridge chips.
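
As a rough sanity check on that span, the sketch below models the rule as N(t) = N0 × 2^(t/T) and projects forward from the 4004's 2,300 transistors. The release years (1971 for the 4004, 2012 for Ivy Bridge) are added here, the 1 billion target is a round illustrative figure rather than a measured count, and the helper names are hypothetical. Because the 1965 paper originally forecast annual doubling before Moore revised it to two years in 1975, both rates are compared.

```python
import math

# From the text: 2,300 transistors in Intel's 4004.
# Release years are added for this sketch: 4004 in 1971, Ivy Bridge in 2012.
N0 = 2_300
START_YEAR = 1971
END_YEAR = 2012


def projected_count(n0: float, years: float, doubling_period: float = 2.0) -> float:
    """Hypothetical helper: N(t) = N0 * 2 ** (t / T), with T in years."""
    return n0 * 2 ** (years / doubling_period)


def implied_doubling_period(n0: float, n1: float, years: float) -> float:
    """Hypothetical helper: years per doubling needed to grow from n0 to n1."""
    return years / math.log2(n1 / n0)


if __name__ == "__main__":
    elapsed = END_YEAR - START_YEAR  # 41 years
    two_year = projected_count(N0, elapsed)
    one_year = projected_count(N0, elapsed, doubling_period=1.0)
    print(f"24-month doubling: {two_year / 1e9:.1f} billion transistors")
    print(f"12-month doubling: {one_year:.2e} transistors")
    # Working backwards: what doubling period turns 2,300 into a round 1 billion?
    period = implied_doubling_period(N0, 1e9, elapsed)
    print(f"to reach 1 billion: {period:.2f} years per doubling")
```

The 24-month rule lands at about 3.4 billion transistors, squarely in the "billions" range described above, while a 12-month doubling overshoots by roughly six orders of magnitude.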

Of course, there have been obstacles along the way, such as the limits imposed by lithographic technology (the process for transferring circuit patterns onto silicon wafers) and power leakage through transistor gates. But engineers have always found ways to overcome them - using shorter lithographic wavelengths, double patterning, optical proximity correction, and high-k/metal gate innovations, to name only a few.

Moore's Law is alive and kicking

The immediate future of Moore's Law isn't in doubt. At the 2013 Intel Developer Forum (IDF), Intel CEO Brian Krzanich showed off the company's next-generation Broadwell system-on-chip (SoC). "This is it, folks," Krzanich exclaimed on stage. "14nm is here, it's working and we'll be shipping by the end of this year." Don't get too excited, though: manufacturing issues pushed Broadwell back into 2014.

Nevertheless, Intel seems confident that Moore's Law is alive and kicking as it moves to a 14nm process node, the next stop on a technology roadmap that scales down to 5nm. But while it might be possible to fabricate chips at 5nm and below, the technological challenges involved and the new equipment required surely won't make it cost-effective to do so.
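
Why does each stop on that roadmap matter? Under idealized scaling, transistor area shrinks with the square of the feature size, so density rises as 1/L². The sketch below applies that rule to the nodes between 22nm and 5nm. The 10nm step is an assumed intermediate node, the helper name is hypothetical, and modern node names are marketing labels rather than literal physical dimensions, so treat the output as an idealized upper bound rather than a forecast.

```python
# Idealized assumption: transistor area scales with the square of the feature
# size L, so density scales as 1 / L**2. Node names are treated as literal
# sizes here, which real processes no longer are.
NODES_NM = [22, 14, 10, 7, 5]  # 10nm is an assumed intermediate step


def ideal_density_gain(from_nm: float, to_nm: float) -> float:
    """Hypothetical helper: relative transistor density after an ideal shrink."""
    return (from_nm / to_nm) ** 2


if __name__ == "__main__":
    for prev, nxt in zip(NODES_NM, NODES_NM[1:]):
        print(f"{prev}nm -> {nxt}nm: x{ideal_density_gain(prev, nxt):.2f}")
    print(f"22nm -> 5nm overall: x{ideal_density_gain(22, 5):.1f}")
```

Each step comes out at roughly 2x (between about 1.96x and 2.47x), which is why successive nodes line up with a transistor doubling, and the full journey from 22nm to 5nm works out to about 19x under these ideal assumptions.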

So the question now is: how long does Moore's Law have left before silicon can't be pushed any further? Five years? Ten?

"I pick about 2020 as the earliest we could call [Moore's Law] dead," said DARPA Director and Pentium processor architect Robert Colwell, when he spoke at the Hot Chips conference in 2013. "And I'm picking 7nm. You could talk me into 2022. You might even be able to talk me into 5nm. But you're not going to talk me into 1nm. I think physics dictates against that."

From Silicon to Graphene

In the short term, Moore's Law will continue to hold true as engineers find new ways to push existing CMOS technology to its limit.

There's the promise of performance gains using new materials, such as indium gallium arsenide (InGaAs), indium phosphide (InP) and silicon germanium (SiGe). These offer higher carrier mobility than silicon and can operate at lower voltages, thereby reducing power consumption.

And keep an eye out for graphene nanoribbons, developed by researchers at the University of California, Berkeley - molecular-scale wires designed to carry data thousands of times faster than traditional copper interconnects.

"These nanoribbons might be a key to keeping up with Moore's Law," said Felix Fischer, a chemist working on the project at Berkeley. Use them in integrated circuits and these one atom-thick, 15 atom-wide graphene strips could potentially increase the number of transistors on a chip by more than 10,000.
