Canonical on Ubuntu 'Bionic Beaver' 18.04 LTS and its controversial changes

With ongoing plans to take Canonical to IPO, we spoke to the company behind Ubuntu about the latest release

Magic and bare metal

It seems like YAML transformed a lot of the configuration side of maintaining lots of servers.

DB: Yeah, I think it’s a very simple configuration format. It also has some compatibility with JSON. It really gets out of the way. There was a move to standardise on something like XML, which was nice for computers to read and produce and store data, but it was very poor for humans to read. So YAML’s kind of taken the other approach of making it very easy to parse with your eyes, and to view and edit as a human. I think even though it has a few quirks with presentation, it still is a much nicer unified format for editing.

Again, it came from feedback. One you’ve already hinted at, which is a small thing, but people clamour for it – htop. Anywhere that Ubuntu Server is installed, htop will now be available and supported by Canonical. That is a big one. Sysadmins have been asking for it for a while.

The last one that I kind of wanted to bullet point was LXD 3.0, which is Canonical’s supported container solution [...] and the big feature with LXD 3.0 is clustering. So you will now be able to cluster together multiple LXD servers into just a lightweight development cloud where you can make requests of it, and spin up containers and delete them. A team of people can operate on that little LXD cluster.

Does that come with a dashboard model setup?

DB: No. It’s targeted at a team of developers, not something that you’d spin up to replace OpenStack or anything like that. It’s just a nice way for people to centralise their LXD workloads that they’re already running on their developer workstations – if they need to.

Do you get involved with Conjure-up [a front-end that uses Juju], and with how that’s been received by the people who use it?

DB: Yeah. Conjure-up is, again, available as a snap. If you want a snap, install conjure-up and try it out. That is our way to demonstrate and try out some of the big software technologies that we have. I think there are two main ones that I want to call attention to. The first is OpenStack, which, as you know, requires dozens of servers in a production setup. So just spinning that up locally already lets you ‘proof of concept’ something that would otherwise be very difficult, given the number of compute resources that you’d need to acquire to try it out.

Then the second thing that we install via conjure-up is what we call the Canonical distribution of Kubernetes, which is the newer way to try out Kubernetes. It’s a one-button experience to get Kubernetes running on a series of LXD containers, and see exactly how it functions, and how you interact with it before you go and deploy it onto your bare metal somewhere.

So conjure-up, I think the experience of 18.04 has just been refined. We don’t have a tonne of improvements from 17.10, except for bug fixes and stability, and just making that experience nice and smooth and polished. That’s what our focus has been on in 18.04.

Could you explain the benefits of Metal as a Service (MaaS), and give us a clearer understanding of that?

DB: MAAS has quite an elastic goal. It’s to model your data centre. So, it really transforms the way that you manage machines and physical hardware in your data centre. So it has the ability to model your networking that you have in your data centre, and to say which VLAN connects to where… you know, which machines can see which VLAN.

It also has just the concept that these machines all fit into the same rack. They all share kind of an availability zone in the cloud. If these ones go down, you know, they’re all connected in the same kind of fault domain.

So it has some of those data centre concepts in it. But once you get it installed and once you’re using it, it is a tool that’s used to just launch operating systems on machines, instead of having a machine that is long-running and can never change and takes a dedicated administrator to do software updates on it.

You can treat your physical hardware just as if it were in a cloud. And you can launch it with a cloud-init script. You can use other deployment technologies like Juju and Ansible to launch other Ubuntu instances. And then they come up fresh, and they’re ready to go. You can leave them long-running, or you can just say, “I’m done with it. I’ve released it back into the pool. I’ve other available machines to use.”
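As a rough illustration of the lifecycle described here, the following Python sketch models a pool of machines that can be deployed fresh with a cloud-init script and later released. It is a toy model only, not MAAS’s actual API: the `Machine` and `Pool` classes, their method names and the `nuc1`–`nuc6` hostnames are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class State(Enum):
    READY = "ready"        # in the pool, available to anyone
    DEPLOYED = "deployed"  # running an operating system for one owner


@dataclass
class Machine:
    hostname: str
    state: State = State.READY
    owner: Optional[str] = None
    user_data: Optional[str] = None  # cloud-init script supplied at deploy time


@dataclass
class Pool:
    machines: list = field(default_factory=list)

    def deploy(self, owner: str, user_data: str) -> Machine:
        """Take the first free machine and bring it up fresh for `owner`."""
        for m in self.machines:
            if m.state is State.READY:
                m.state, m.owner, m.user_data = State.DEPLOYED, owner, user_data
                return m
        raise RuntimeError("no machines available in the pool")

    def release(self, machine: Machine) -> None:
        """Return a machine to the pool, discarding its previous configuration."""
        machine.state, machine.owner, machine.user_data = State.READY, None, None

    def available(self) -> int:
        """Count the machines that are currently free to deploy."""
        return sum(1 for m in self.machines if m.state is State.READY)


# Hypothetical six-machine test lab.
lab = Pool([Machine(f"nuc{i}") for i in range(1, 7)])
node = lab.deploy("alice", "#cloud-config\npackages:\n  - htop\n")
print(node.hostname, lab.available())  # one machine in use, five left in the pool
lab.release(node)                      # "I'm done with it"
print(lab.available())                 # all six are available again
```

The point of the sketch is that a machine carries no permanent identity beyond its hostname: each deployment starts from a clean state plus whatever the cloud-init script specifies, and releasing it wipes that state.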

It’s really about transforming your data centre into something that resembles the new cloud world that we live in.

At what scale does that become really beneficial?

DB: The nice thing about MaaS is, I have a MaaS running at my house with six little NUCs that I use for doing testing. If I need to know what Ubuntu looks like and how it’s functioning on a physical machine, and I need to tie those physical machines together in a VM… and, you know, I’m kind of at the limit of the VMs I can launch, I can just use MaaS to spin that up in my own little test lab that I have. Which, again, is just six NUCs.

So you can go down to whatever the smallest workload size is that you want for a test lab. It can also scale up to the thousands of nodes that are in the data centre. It has the concept of a rack controller, and the region controller. So a rack controller, installed at the top of each rack in a data centre, will be able to host images and handle the network traffic that’s necessary.

But then your region will be your entire data centre. That is all handled in the architecture of MaaS. It really is nice in that way. It scales down to the test lab, and up to the production environment.

If you do operating system testing at all, it’s a fantastic tool.

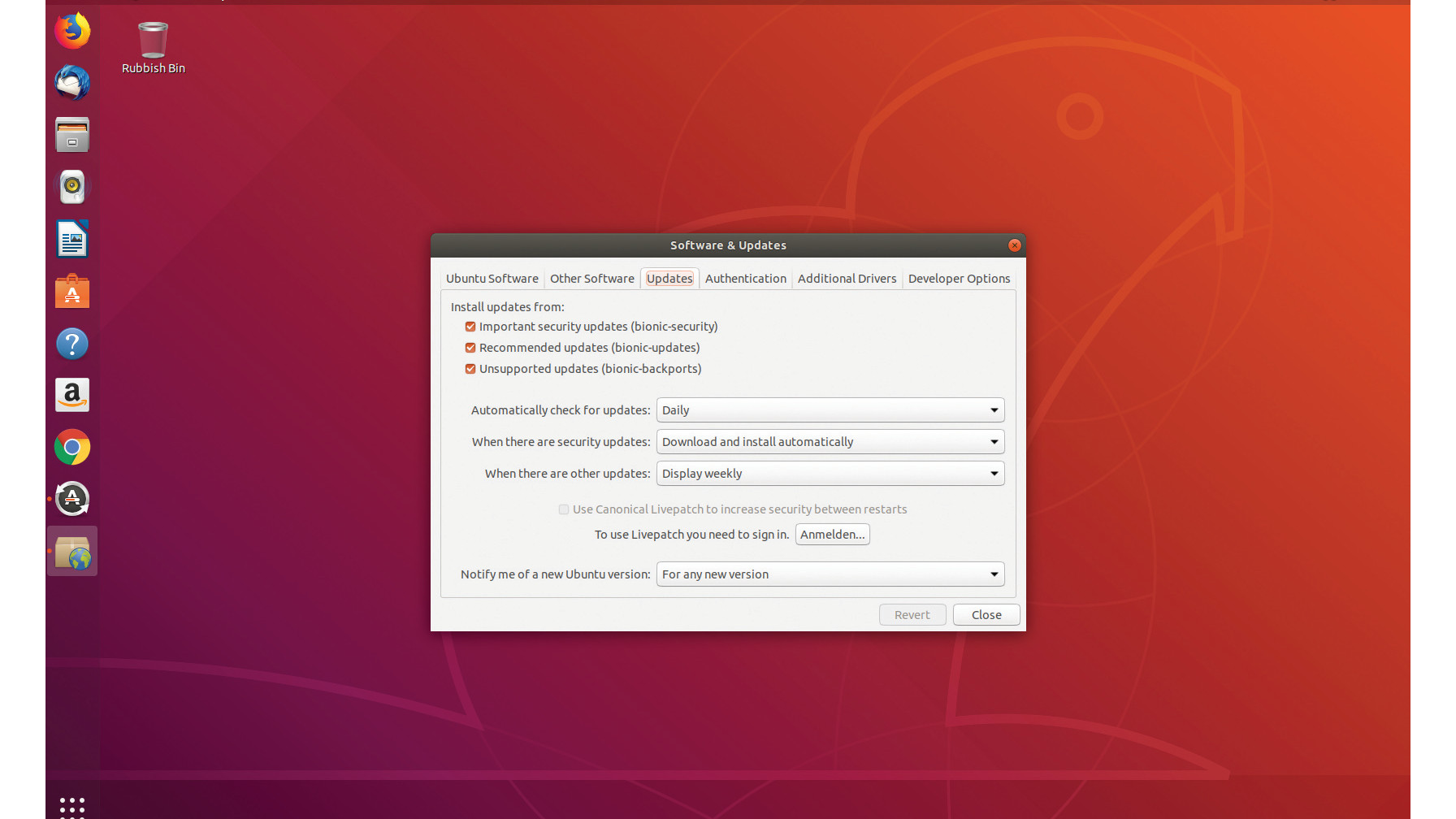

I did want to mention one other thing, since we’re talking 18.04. The Ubuntu Advantage product that we have – I know that that’s not always the most interesting to end developers and to single users of Ubuntu. But as Ubuntu is used in just so many large-scale applications, we do have a product that we’re continuing for 18.04 called Livepatch.

In 18.04, we’re definitely carrying that forward. The cool thing about Livepatch is it installs hot patches for your kernel, so that you’re always up to date and secure with any of the major CVEs or vulnerabilities that come out.

But it is also available as a free service for up to three computers for anybody that signs up for it with Canonical. It’s not just something that you need to come to Canonical and set up an account and pay for on a monthly basis. That’s really what we’re targeting at large-scale users of Livepatch. But just for the people that are running their own workstation at home or their own little test lab that they have like I do, they can go and get Livepatch; and next year, any machine that’s long-running can be up to date with kernel hotfixes that come out.

Chris Thornett is the Technology Content Manager at onebite, editor, writer and freelance tech journalist covering Linux and open source. Former editor of Linux User and Developer magazine.
