Best SEO tool of 2025

Make your website more search engine friendly

- Best overall

- Best all-in-one platform

- Best alternative

- Best for professionals

- Best for tracking links

- Best for page crawling

- Best for keyword research

- Best for newbies

- Best for individuals and companies

- Best with integrations

- Best free platform

- Best for businesses

- Best free toolbar extension

- Best SEO tool FAQs

- How we test

We list the best SEO tools to make it simple and easy to optimize your website for search engines, whether you're a professional or a general user.

The best SEO tools make it simple to optimize your website for search engines and monitor your rankings. At its core, SEO (Search Engine Optimization) was designed to extend web accessibility by following HTML4 guidelines and to help identify the purpose and content of a document. This meant ensuring web pages had unique page titles that accurately reflected their content, using keyword headings to highlight the content of individual pages, and using other tags for their intended purpose. These practices were necessary because web developers often focused only on coding issues and ignored the user experience and web publishing guidelines, a problem that still occurs today.

Over time, SEO has evolved to include factors beyond the content on your page, such as links from other websites and user experience. Search engines like Google are continually updating their ranking algorithms, so staying on top of your SEO with the best SEO tools remains crucial. Below, we've curated a list of our top picks.

The best SEO tools of 2025 in full:

Best SEO tool overall

A 14-day free trial is available for TechRadar readers to try out the service.

Semrush is one of the most popular SEO tools globally, offering a wide range of SEO and content marketing features. You can conduct keyword research, monitor backlinks, analyze competitors, optimize content, and much more, all through this platform.

However, what sets Semrush apart is its Competitor Analysis tool. You can monitor your competitors' SEO strategies, including their keyword rankings, advertising strategies, and backlink profiles. Additionally, its ability to track trends and changes in the SEO landscape provides a dynamic view of the competitive landscape.

Semrush also features a powerful Keyword Research tool that offers insights such as search volume, difficulty levels, and trends, all of which are crucial for an effective SEO strategy. Its Site Audit tool thoroughly analyzes your website's health and identifies issues that must be addressed.

Semrush also offers Traffic Analytics, which helps identify your competitors' main sources of web traffic, such as the top referring sites. This allows you to delve into the details of how your and your competitors' sites compare in terms of average session duration and bounce rates. Additionally, the "Traffic Sources Comparison" feature provides an overview of digital marketing channels for multiple competitors simultaneously. For those new to SEO, 'bounce rates' refer to the percentage of visitors who visit a website and then leave without accessing any other pages on the same site.
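For readers who want to see the arithmetic, bounce rate as defined above is simply single-page sessions divided by total sessions. The following is a minimal Python sketch; the function name and the traffic figures are hypothetical and are not taken from Semrush.

```python
def bounce_rate(single_page_sessions: int, total_sessions: int) -> float:
    """Return the bounce rate as a percentage of all sessions."""
    if total_sessions <= 0:
        raise ValueError("total_sessions must be positive")
    if not 0 <= single_page_sessions <= total_sessions:
        raise ValueError("single_page_sessions must be between 0 and total_sessions")
    return 100.0 * single_page_sessions / total_sessions


# Hypothetical figures: 420 of 1,200 sessions viewed only one page.
rate = bounce_rate(420, 1200)
print(f"Bounce rate: {rate:.1f}%")  # prints "Bounce rate: 35.0%"
```

Analytics platforms may define a "bounce" slightly differently, so treat this as an illustration of the basic ratio rather than a reproduction of any one tool's metric.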

The platform also includes a Domain Overview feature, which does much more than summarize your competitors' SEO strategies. You can detect specific keywords they've targeted and assess the relative performance of your domains on both desktop and mobile devices.

Over time, Semrush has added a few more tools to its offerings, including a writer marketplace, a toolset for agencies, and even a white-glove service for PR agencies.

Read our full Semrush review.

Best all-in-one SEO tool platform

If you've ever set foot in SEO, you have very likely come across Ahrefs. It is a comprehensive SEO tool with a range of features, including Site Explorer, Keywords Explorer, Site Audit, and Content Explorer.

Ahrefs boasts the largest backlink index of top SEO tools, with over 295 billion indexed pages and more than 16 trillion backlinks. Throw in an upgraded keywords explorer, and tools for monitoring the competition, plus a serious amount of user documentation, and Ahrefs may be the tool you need to rank better and increase traffic.

At its core, Ahrefs is a strong contender for backlink analysis, keyword research, and competitive analysis. Through its Keywords Explorer, you can see search volume, keyword difficulty, and SERP analysis to build your content strategy.

Ahrefs boasts several powerful features that help set it apart, including a proprietary web crawler that is one of the best on the market. However, Ahrefs also has some drawbacks. For instance, the tool's depth can make it hard for beginners to learn, and the cost of its paid plans can be a major concern for small businesses. Large enterprises, by contrast, might find the investment justified by the breadth of insights offered.

While price points are broadly aligned with those of similar products, best-in-class link analysis, powerful research tools, and knowledgeable user support help make Ahrefs one of the best options for understanding and improving your domain’s online presence.

Read our full Ahrefs review.

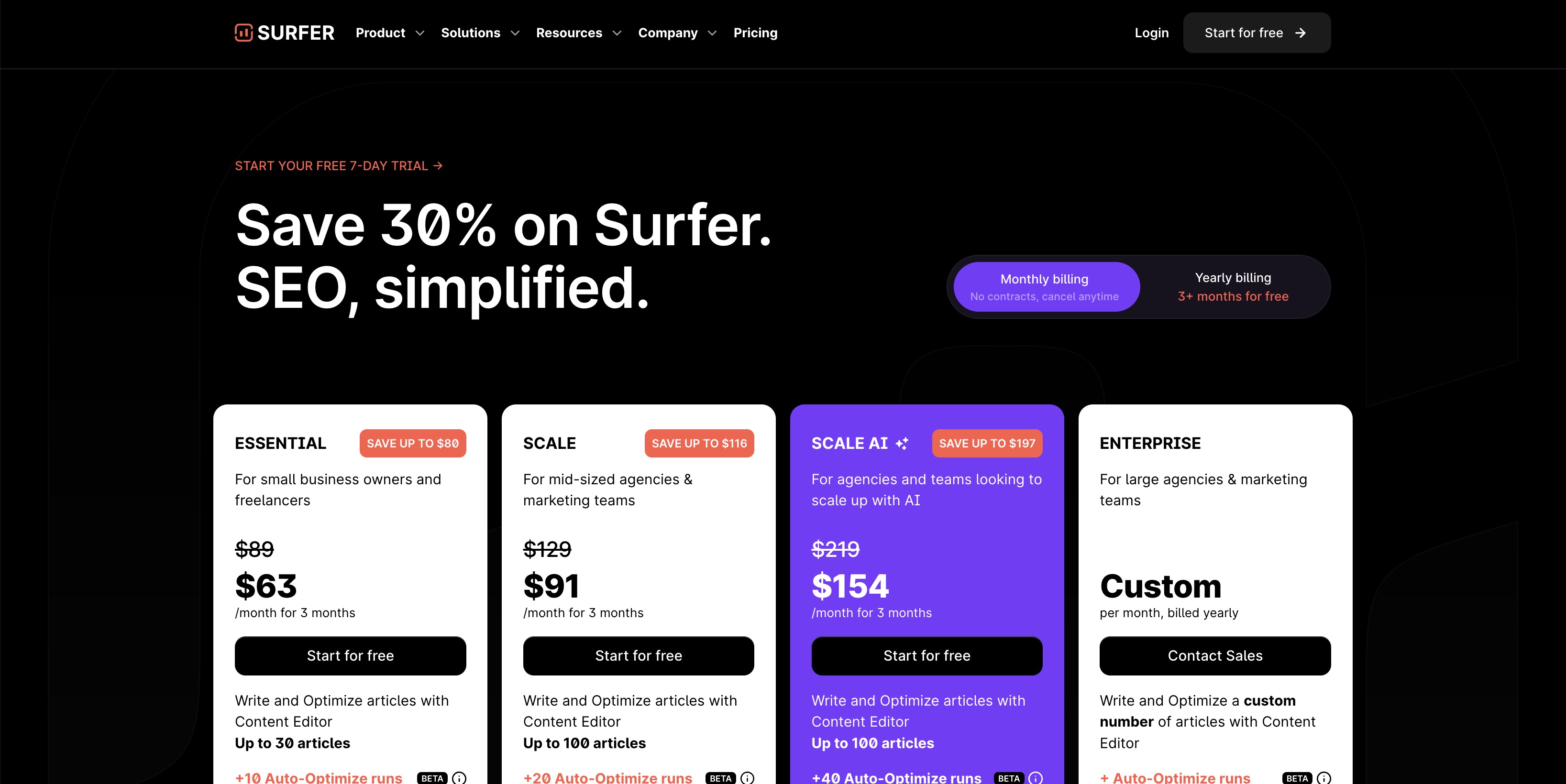

Best SEO tool alternative

When it comes to optimizing your website for search engines, using the right SEO tools is crucial. There are many options available in the market, but not all of them are created equal. While most SEO optimization tools come equipped with basic features such as keyword research and content editors, some like SurferSEO offer much more.

SurferSEO is a comprehensive SEO optimization tool that goes above and beyond to provide users with an all-in-one solution. It's equipped with a range of features that include keyword research, content analysis, a SERP analyzer, audit tools, and more, all powered by AI technology. This makes it a top choice for marketers who want to stay ahead of the game and optimize their content to its fullest potential.

One of the best things about SurferSEO is its seamless setup process. Regardless of your skill level, you can easily set up your account and start using the tool right away. It's also available at various price points, making it accessible to businesses of all sizes. Plus, it's enriched with a growing list of AI-based features, which are increasingly vital in the industry.

SurferSEO also provides an extensive array of training tools, including a blog, knowledge base, private SurferSEO community, and live training from the Surfer Academy. This allows users to stay up-to-date with the latest trends and best practices in SEO optimization. By providing detailed insights and clear guidance, SurferSEO empowers marketers to optimize their content effectively and compete successfully in search engine rankings.

Whether you're a seasoned SEO professional or just starting, integrating SurferSEO into your SEO strategy could significantly enhance your online presence and drive more organic traffic to your site. With its advanced features, user-friendly interface, and comprehensive training resources, SurferSEO is undoubtedly one of the best SEO optimization tools available in the market today.

Read our full SurferSEO review.

Best SEO tool for professionals

Moz Pro is a great SEO tool that helps you increase traffic, rankings, and visibility across search engine results.

Moz Pro comes with a Link Explorer that analyzes your website's backlink profile alongside those of your competitors, giving detailed insights into the quantity and quality of the backlinks. You can also manage listings, monitor reviews, and publish accurate location data across the web through Moz Local. Additionally, SERP analysis examines the Search Engine Results Pages to show which factors influence how pages rank, helping you identify opportunities for improvement.

Plus, you get a competitor analysis tool that compares your sites with your competitors', focusing on aspects such as keyword rankings and online visibility, to gauge where you stand. There is also a Rank Checker tool that checks your website's ranking for specific keywords to help refine your SEO practices.

Similarly, users can study keywords and keyword combinations that might be ideal for targeting through Moz Pro's Keyword Research Tool. There's also a backlink analysis tool that combines several metrics, including anchor text in links and estimated domain authority.

Pricing for Moz Pro begins at $99 per month for the Standard plan, which covers the basic tools. The Medium plan offers a wider range of features for $179 per month, and a free trial is available. Note that plans come with a 20% discount if paid for annually. Additional plans are available for agency and enterprise needs, and there are additional paid-for tools for local listings and STAT data analysis.

Even if you don't sign up for Moz Pro, a number of free tools are available. There's also a huge supporting community ready to offer help, advice, and guidance across the breadth of search marketing issues.

Read our full Moz Pro review.

Best SEO tool for tracking links

Majestic's SEO tools have consistently received praise from SEO veterans since the platform's inception in 2011, which also makes it one of the oldest SEO tools available today.

Majestic offers Trust Flow and Citation Flow metrics that help you gauge the quality of the backlinks pointing to a website. There is also a Backlink History Checker that lets you see the number of backlinks detected by the platform over time. But what makes Majestic stand out is its Search Explorer, which offers a search engine-like experience that ranks pages based on their backlinks instead of traditional search algorithms, giving a unique perspective on search relevance.

If you integrate your tools with custom applications and reports, Majestic is just what you need, as it provides API access to the platform. Users can also create custom reports and set up alerts for new backlinks or changes in the backlink profile, keeping them informed about the health of their backlinks.

The tool's main focus is on backlinks, which represent links between one website and another. This has a significant influence on SEO performance and as such, Majestic has a huge amount of backlink data.

Users can search both a 'Fresh Index', which is crawled and updated throughout the day, and a 'Historic Index', which has been praised online for its lightning retrieval speed. One of the most popular features is the 'Majestic Million', which displays a ranking of the top 1 million websites.

The 'Lite' version of Majestic incorporates useful features such as a bulk backlink checker, a record of referring domains, IPs, and subnets, as well as Majestic's integrated 'Site Explorer'. This feature, which is designed to give you an overview of your online store, has received some negative comments for looking a little dated. Majestic also has no Google Analytics integration.

Read our full Majestic SEO Tools review.

Best SEO tool for page crawling

SEO Spider was originally created in 2010 by the whimsically named "Screaming Frog". This rowdy amphibian's clients include major players like Disney, Shazam, and Dell.

At its core, SEO Spider excels in technical SEO, particularly through its advanced site crawling and auditing capabilities. This feature is extensively used for conducting thorough website audits and identifying a range of SEO issues like broken links, redirect chains, and server errors.

A standout feature is its duplicate content detection, widely used to resolve detrimental content duplication issues. Additionally, it offers detailed insights on pages with missing title tags, duplicate meta tags, and tags of the wrong length, and it checks the number of links placed on each page.
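To illustrate the kind of checks described here, the sketch below flags missing, duplicate, and badly sized page titles in a small set of crawled pages. It is not how SEO Spider is implemented; the page data, the function name, and the 30 to 60 character limits are assumptions chosen purely for illustration.

```python
from collections import defaultdict

# Assumed thresholds for illustration only; not taken from SEO Spider.
MIN_TITLE_LEN = 30
MAX_TITLE_LEN = 60


def audit_titles(pages):
    """Group URLs by title problem: missing, duplicated, or wrong length."""
    missing, bad_length = [], []
    by_title = defaultdict(list)
    for url, title in pages.items():
        text = (title or "").strip()
        if not text:
            missing.append(url)
            continue
        # Compare titles case-insensitively when looking for duplicates.
        by_title[text.lower()].append(url)
        if not MIN_TITLE_LEN <= len(text) <= MAX_TITLE_LEN:
            bad_length.append(url)
    duplicates = [urls for urls in by_title.values() if len(urls) > 1]
    return {"missing": missing, "duplicates": duplicates, "bad_length": bad_length}


# Hypothetical crawl output: URL path -> <title> text (None if absent).
pages = {
    "/": "Acme Widgets: Hand-Built Widgets Shipped Worldwide",
    "/about": "Acme Widgets: Hand-Built Widgets Shipped Worldwide",
    "/contact": "Contact",
    "/blog": None,
}

report = audit_titles(pages)
for problem, urls in report.items():
    print(problem, urls)
```

A real crawler would gather the titles by fetching and parsing each page; here they are supplied directly so the checks themselves stay easy to follow.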

SEO Spider's Broken Links Checker is another invaluable offering that identifies and reports every broken link throughout the website. However, one of the most attractive features of SEO Spider is its ability to integrate with Google Analytics and Search Console. It connects with the website you are trying to audit, uses the GA and GSC APIs to fetch data from both, and creates comprehensive reports for individual URLs on the website.

SEO Spider even excels when it comes to security features as it checks for secure pages and protocols, all of which are critical in identifying mixed content issues that could affect the site’s security. Despite its rich functionality, SEO Spider can have a steep learning curve for beginners, but luckily there are plenty of guides on the web to help you out.

There is both a free and paid version of SEO Spider. The free version contains most basic features such as crawling redirects but this is limited to 500 URLs. This makes the 'Lite' version of SEO Spider suitable only for smaller domains. The paid version is $180 per year and includes more advanced features as well as free tech support.

Read our full SEO Spider review.

Best SEO tool for keyword research

SpyFu is a search analytics company that scrapes the internet for data. It is used to identify the keywords that companies are bidding on using Google Ads (formerly Google AdWords). SpyFu also matches search results with search terms, providing companies with deeper insight into the types of searches and keywords for which they appear on Google's Search Engine Results Page (SERP).

SpyFu's unique selling point is its ability to ‘spy’ on your competitors by helping you pinpoint the keywords your competitors target for online advertising and generating a report of the words and phrases that generate the most traffic. In this way, you can keep a step ahead of other companies or services working in your industry and attract more traffic to your own sites.

The tool's user interface stands out for its balance of in-depth analytics and accessibility, making it suitable for both seasoned professionals and newcomers to SEO. It provides historical data tracking, a unique feature that allows an in-depth view of how keywords have performed over time, offering a strategic edge in planning. Additionally, its backlink analysis goes beyond basic insights, providing a nuanced understanding of backlink profiles essential for off-page SEO strategies. However, compared to tools like Semrush and Ahrefs, it may lag slightly in the breadth of its backlink database.

Few products come close to providing the depth and functionality you get with SpyFu. It is priced below its main competitors, is easy to use, and can be customized to your needs. It provides all of the PPC, SEO, and keyword research tools anyone from a big company to a small startup would need and is a highly effective and well-designed application.

Read our full SpyFu review.

Best SEO tool for newbies

RankIQ is a great tool for optimizing SEO, but it has its pros and cons. Let's start with the good news.

RankIQ is one of the best SEO solutions for content analysis. Its AI-powered content optimizer gives clear and specific guidance to writers on how to optimize their content for SEO. This can be particularly useful for those who are new to SEO or want to streamline their content creation process.

Moreover, RankIQ offers an excellent keyword product that provides users with a list of high-traffic, low-competition keywords specifically tailored to their niche. This targeted approach can significantly improve the chances of ranking high on search engine results pages (SERPs).

RankIQ is also user-friendly and affordable, making it accessible to bloggers, freelancers, and small businesses.

However, there are some downsides to using RankIQ. The service is best suited for bloggers and smaller websites, not larger enterprises. This lack of scalability could be an issue for rapidly growing businesses.

Additionally, RankIQ's SEO tools are more basic and may not be suitable for larger enterprises that require advanced features. The service also doesn't integrate with other digital marketing tools, which could be problematic for users who need such integrations.

Finally, RankIQ does not provide a free trial, which may deter users who prefer to test a tool before committing to a subscription.

After testing RankIQ, we concluded that it's an effective tool for bloggers and smaller companies looking to improve their search engine ranking. It offers a user-friendly interface and AI-driven insights that can be useful for those new to SEO or who have limited time. However, larger enterprises or experienced SEO professionals may require a more comprehensive and integrated approach that RankIQ may not be able to provide.

As with any tool, it's essential to consider your specific needs, level of expertise, and resources before deciding if RankIQ is the right fit for your SEO strategy.

Best SEO tool for individuals and companies

MarketMuse is a versatile tool designed to serve individuals as well as small and large teams. Its AI-powered guidance assists in creating detailed, informative content on your chosen topic, helping to ensure that what you publish is comprehensive, authoritative, and relevant. By enhancing the relevance and depth of content, MarketMuse can significantly improve your chances of SEO success. And because it recognizes related content, users can build a robust, interlinked content strategy that can enhance the website's domain authority.

However, there are a few things to criticize about MarketMuse. Firstly, there is a steep learning curve to master the platform's full range of features. Although users of all backgrounds can get started with MarketMuse with relative ease, it is recommended to opt for paid training through MarketMuse to gain expertise and maximize the benefits. MarketMuse offers training and support to help users overcome the learning curve and achieve their goals.

It is also essential to note that SEO optimization tools like MarketMuse have a significant drawback. They have no control over the dynamic nature of search engine algorithms. Whenever search engines change how they handle searches, even the best tools will be affected. Therefore, companies like MarketMuse must adjust the data behind their offerings, and end-users will also need to make necessary adjustments. This can be a challenging process for everyone involved. However, MarketMuse is known for regular updates and adapting to the changes in the search engine algorithms.

Overall, MarketMuse is one of the best SEO optimization tools available in the market. It is worth considering, and users can take advantage of the free trial to assess whether it’s worth the price of admission for their website. MarketMuse's comprehensive support and regular updates make it a reliable and efficient tool for content optimization.

Best SEO tool for integrations

Clearscope is a content optimization tool that has been gaining popularity among SEO professionals. It uses AI technology to analyze content and provide suggestions for using keywords that can enhance the chances of a piece of content ranking well on search engines.

One of the features that makes Clearscope stand out is its user interface, which is designed to suit experienced SEO professionals perfectly. Additionally, Clearscope integrates seamlessly with two widely used software products: Google Docs and WordPress. This makes it easy for content creators to optimize their content without having to switch between different applications.

Another notable feature of Clearscope is its reports, which provide real-time feedback that can assist in crafting search engine-friendly and relevant content. This feature is especially useful for content creators and marketers who need to optimize their content quickly and accurately.

However, as with any tool, Clearscope has its drawbacks. One major deterrent for some individuals might be the cost of using Clearscope. While the platform provides a lot of value, it may be too expensive for some users. Providing a trial could attract a more extensive user base regardless of Clearscope's pricing structure.

Moreover, beginners in SEO might find Clearscope challenging to navigate despite its user-friendly design, and there is still a learning curve involved. However, the platform offers plenty of resources to help users get started, including tutorials and webinars.

Another downside is that AI content outline generation is available only to customers on Clearscope's business plan. This limitation may seem unreasonable given the pricing tiers, particularly the cost of the essentials package. In our view, all Clearscope customers would benefit from access to its AI tools.

Overall, Clearscope is a valuable tool for individuals and organizations looking to enhance their content SEO potential with data-driven insights and optimization suggestions. While the pricing and learning curve may deter some users, the platform's accurate recommendations, user-friendly interface, and immediate feedback make it a valuable resource for content creators and marketers striving to create content that performs well in search engine results.

Best free SEO tools

Although we've highlighted the best paid-for SEO tools out there, a number of websites offer more limited tools that are free to use. Here we'll look at the best free SEO tools available.

Best free SEO tool platform

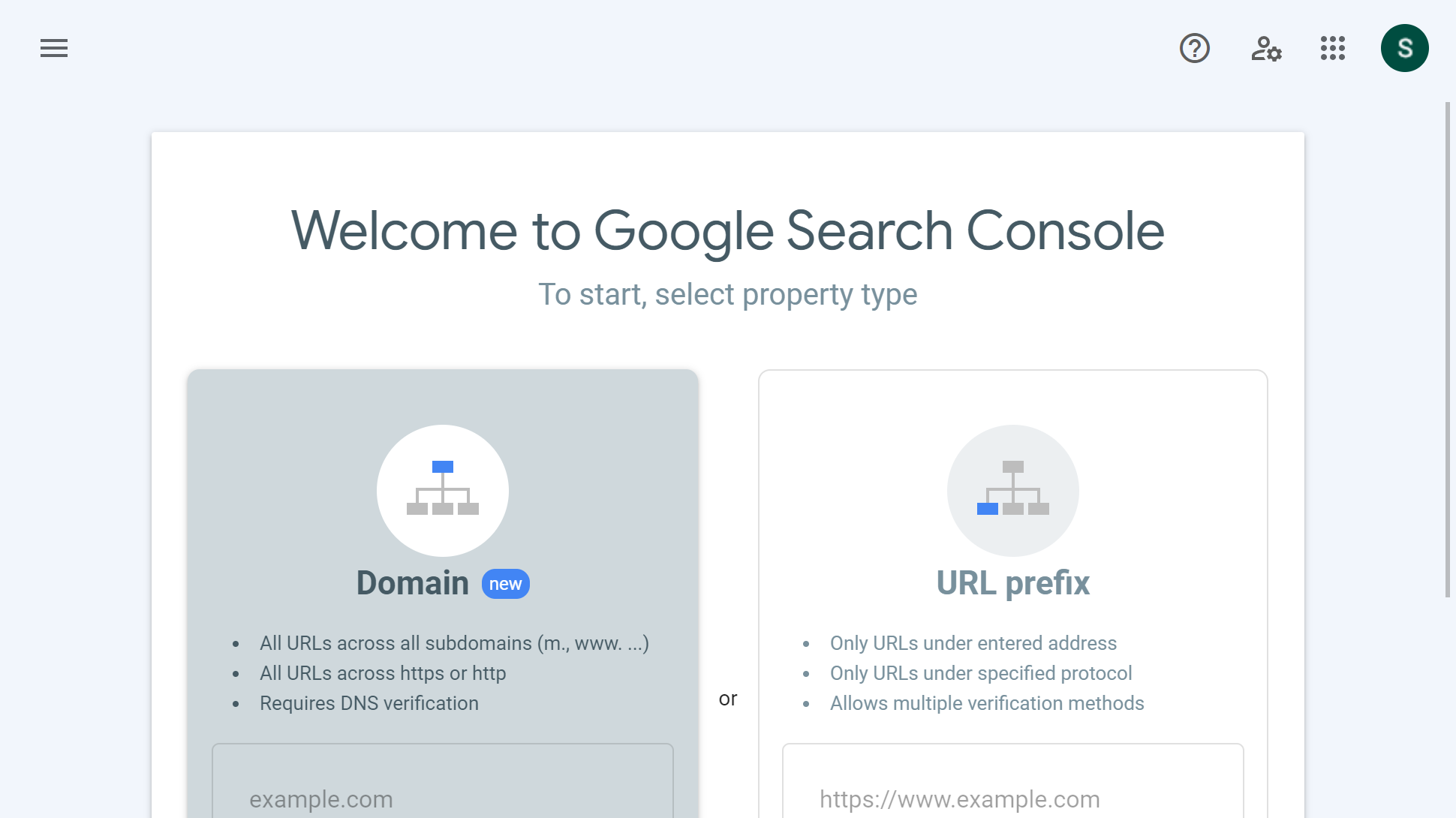

Google Search Console (GSC) is an excellent way for newbie webmasters to get started with SEO. This free service offered by Google is a powerhouse of data and insights, crucial for understanding and optimizing your website's performance in Google search results.

Using GSC, you can analyze clicks, impressions, click-through rate (CTR), and the average position of your site's URLs for specific queries in Google Search. This data is pivotal for understanding what's working in your SEO strategy and what needs improvement. The best part? All this data comes from Google itself rather than a third-party app, so it reflects what Google actually recorded rather than an outside estimate.
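As a quick illustration of how these metrics relate, CTR is clicks divided by impressions. The sketch below works through that arithmetic in Python on made-up query rows; the data, the field names, and the choice to combine positions with an impression-weighted average are all assumptions for the example, not a description of how Search Console computes its reports.

```python
# Hypothetical query rows, loosely shaped like a performance report export.
rows = [
    {"query": "seo tools", "clicks": 120, "impressions": 4000, "position": 6.2},
    {"query": "free seo checker", "clicks": 45, "impressions": 900, "position": 3.1},
]


def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate as a percentage; 0.0 when there are no impressions."""
    return 100.0 * clicks / impressions if impressions else 0.0


total_clicks = sum(r["clicks"] for r in rows)
total_impressions = sum(r["impressions"] for r in rows)
overall_ctr = ctr(total_clicks, total_impressions)

# Assumption: weight each query's position by its impressions when combining.
weighted_position = (
    sum(r["position"] * r["impressions"] for r in rows) / total_impressions
)

for r in rows:
    print(f"{r['query']}: CTR {ctr(r['clicks'], r['impressions']):.1f}%")
print(f"Overall CTR: {overall_ctr:.2f}%")
print(f"Impression-weighted position: {weighted_position:.2f}")
```

Queries with many impressions but a low CTR are often the first candidates for a better title or meta description.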

Another key feature is the ability to submit and test sitemaps. Ensuring that Google can find and crawl all important pages of your site is essential, and GSC aids in this by not only allowing sitemap submissions but also providing feedback on any sitemap-related issues, such as URLs that Google couldn't index.

While Google Search Console also has a Links report that showcases both internal and external links to your website, it’s limited to only 1000 links, which is not enough if you have a growing website.

The tool also plays a crucial role in site security and health, alerting you if any manual actions from Google are taken against your website. With page experience becoming a significant ranking factor, you’ll find GSC’s performance reports on page speed and Core Web Vitals to be very insightful too. They help identify pages needing improvements in loading speed, interactivity, and stability.

Overall, GSC is an invaluable tool for SEO, offering extensive data and insights across various aspects of website performance and health in Google Search. Even if you're not well versed in SEO, whatever the size of your site or blog, Google's laudable Search Console (formerly Google Webmaster Tools) and the myriad user-friendly tools under its bonnet should be your first port of call.

Read our full Google Search Console review.

Best SEO tool for businesses

12. Google Analytics

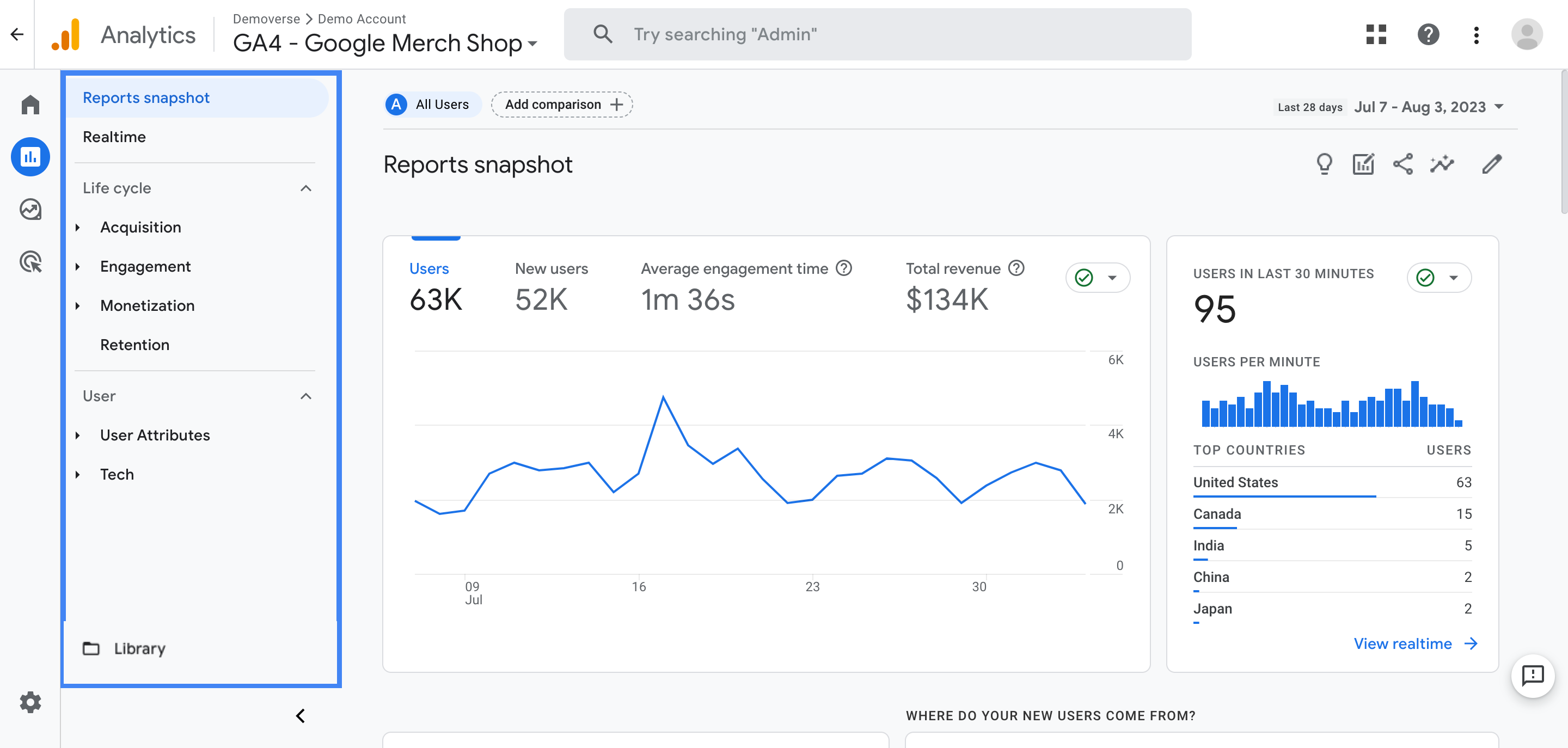

Google Analytics (GA) stands as a critical tool for digital analytics, distinct yet complementary to Google Search Console (GSC). GA is like this all-seeing eye for your website. It doesn't just count how many people visit your site; it tells you where they're coming from, what device they’re using, and even what they do when they’re on your site. It’s a lot more in-depth than GSC, which mainly focuses on how your site’s doing in Google Search.

One of the coolest things about Google Analytics is its real-time reporting. You can watch what’s happening on your site as it happens. It’s something GSC doesn't offer, and it's super useful for seeing the immediate effects of any changes you make or campaigns you run.

Then there's conversion tracking. Google Analytics lets you set specific goals, like if you want people to sign up for something or buy a product. It tracks the steps users take to get there. This is way more detailed than GSC’s search performance info and helps in understanding if your site is doing its job.

Google Analytics also digs deep into who's visiting your site. We're talking age, interests, demographics, gender, location, and behavior data. This level of detail is way beyond what you get with any SEO tool out there, be it free or paid. It's perfect for tailoring your content or ads to your audience.

Custom reports are another win for Google Analytics. You can slice and dice your data however you want. This feature allows for a more focused examination of specific areas like what pages get the most traffic from social media, or what pages are users not reading entirely, which can help you identify and fix problems you wouldn't notice otherwise.

Despite its comprehensive features, Google Analytics can be complex, with a steeper learning curve than GSC. However, the depth of insights available in Google Analytics, from user behavior to detailed conversion tracking, makes it an invaluable tool for a more complete understanding of your website's performance and audience.

Best free SEO toolbar extension

13. Detailed SEO

The Detailed SEO Browser Extension is a specialized tool for on-page SEO analysis, catering to those who need quick, in-depth insights into the SEO setup of any webpage. This free tool, available as a browser add-on, is notable for its user-friendly interface and the efficiency it brings to SEO tasks.

One of the standout features of the extension is its ability to provide an instant overview of critical on-page SEO elements. This includes page titles, meta descriptions, canonical URLs, meta robots tags, and X-Robots-Tag headers. Such a snapshot is invaluable for SEO professionals and content creators looking to assess and optimize web pages for search engine visibility quickly.
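For a sense of what such an overview involves, the following sketch pulls the title, meta description, meta robots directive, and canonical URL out of a page using only Python's standard library. It is an illustration, not the extension's own code: the class name and sample HTML are hypothetical, and X-Robots-Tag is left out because it is sent as an HTTP response header rather than in the HTML.

```python
from html.parser import HTMLParser


class OnPageParser(HTMLParser):
    """Collect a few on-page SEO elements from an HTML document."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.meta_description = None
        self.meta_robots = None
        self.canonical = None
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta":
            name = (attr.get("name") or "").lower()
            if name == "description":
                self.meta_description = attr.get("content")
            elif name == "robots":
                self.meta_robots = attr.get("content")
        elif tag == "link":
            # rel can hold several space-separated values.
            if "canonical" in (attr.get("rel") or "").lower().split():
                self.canonical = attr.get("href")

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


# Hypothetical page used only for this example.
SAMPLE_HTML = """<html><head>
<title>Blue Widgets | Acme</title>
<meta name="description" content="Hand-built blue widgets.">
<meta name="robots" content="index, follow">
<link rel="canonical" href="https://example.com/widgets/blue">
</head><body><h1>Blue Widgets</h1></body></html>"""

parser = OnPageParser()
parser.feed(SAMPLE_HTML)
print("Title:", parser.title.strip())
print("Description:", parser.meta_description)
print("Robots:", parser.meta_robots)
print("Canonical:", parser.canonical)
```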

Then there's this neat feature where you can export all the links from a page. It’s perfect when you're trying to figure out the internal link game of a site or checking out backlinks. Plus, it also lets you download all the image URLs, which is a lifesaver for making sure all the images are SEO-friendly.

Now, what we love about Detailed SEO the most is that it can preview the Open Graph data or Schema markup of any page in a single click. It also gives you direct shortcuts to a website's robots.txt and XML sitemap. These features alone eliminate the need for three separate Chrome extensions and consolidate everything in a single, comprehensive extension.

Moreover, the extension is designed to be lightweight and user-friendly, offering quick SEO insights without the complexity or depth of more advanced, paid SEO tools. While it doesn't provide the extensive analytics and deep-dive capabilities of these more comprehensive tools, it's an excellent choice for basic, on-the-go SEO analysis.

Best SEO tool FAQs

What is an SEO crawler?

An SEO crawler can help you discover and fix issues that are preventing search engines from accessing and crawling your site. It remains an essential yet elusive tool in the arsenal of any good SEO expert. We caught up with Julia Nesterets, the founder of SEO crawler Jetoctopus, to understand what exactly an SEO crawler is and why it is so important.

If you are a webmaster or SEO professional, few things are more heartbreaking than finding that Google's bots have ignored your content and SEO efforts and declined to index your page. But the good news is that you can fix this issue!

Search engines were designed to crawl, understand, and organize online content to deliver the best and most relevant results to users. Anything getting in the way of this process can negatively affect a website's online visibility. Therefore, making your website crawlable is one of the primary goals of SEO, and a crawl can also highlight any issues you have with your web hosting service provider.

By improving your site's crawlability, you can help search engine bots understand what your pages are about and, in turn, improve your Google ranking. So how can an SEO crawler help?

1. It offers real-time feedback. An SEO crawler can quickly crawl your website (some crawl as fast as 200 pages per second) and show any issues it finds. The reports analyze URLs, site architecture, HTTP status codes, broken links, details of redirect chains and meta robots, rel-canonical URLs, and other SEO issues. These reports can be easily exported and referred to for further action by the technical SEO and development teams. Thus, using an SEO crawler is the best way to ensure your team is up to date on your website crawling status.

2. It identifies indexing errors early. Indexing errors such as 404 errors, duplicate title tags, duplicate meta descriptions, and duplicate content often go unnoticed because they aren't easy to locate. Using an SEO crawler can help you spot such issues during routine SEO audits, allowing you to avoid bigger problems in the future.

3. It tells you where to start! Deriving insights from all available reports may be intimidating for any SEO professional. Therefore, it’s wise to choose an SEO crawler which is problem-centric and helps you prioritize issues. A good crawler should make it possible for webmasters to concentrate on the main problems by estimating their scale. That way, webmasters can keep fixing critical issues in a timely manner.

How do Google SEO spiders work and many more backlink questions

We also put a bevy of questions to Julia Nesterets, the founder of SEO crawler Jetoctopus, about how Google's spiders work and about backlinks in general.

Google’s SEO spiders are programmed to collect information from webpages and send it to the algorithms responsible for indexing and evaluating content quality. The spiders crawl the URLs systematically. Simultaneously, they refer to the robots.txt file to check whether they are allowed to crawl any specific URL.
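The robots.txt check described above can be tried out with Python's standard `urllib.robotparser` module. This is a minimal sketch: the robots.txt content, the `ExampleBot` user agent, and the URLs are made up, and Python's parser applies its own matching rules, which may differ in detail from Googlebot's.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, parsed from a string so no network is needed.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Allow: /
"""

robots = RobotFileParser()
robots.parse(ROBOTS_TXT.splitlines())

urls = [
    "https://example.com/blog/post.html",
    "https://example.com/private/report.html",
]
for url in urls:
    allowed = robots.can_fetch("ExampleBot", url)
    print(f"{url}: {'crawl' if allowed else 'skip'}")
```

To check a live site instead, `RobotFileParser` also offers `set_url()` and `read()` to download the file before calling `can_fetch()`.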

Once spiders finish crawling old pages and parsing their content, they check if a website has any new pages and crawl them. In particular, if there are any new backlinks or the webmaster has updated the page in the XML sitemap, Googlebots will add it to their list of URLs to be crawled.

So is it worth retrospectively adding backlinks? It’s worth adding backlinks to content that was posted a while ago, especially if a page is high-quality and on the same subject. This will also help preserve the equity of that page.

Is there a hierarchy of backlinks? Technically, there is no hierarchy of backlinks, as we can’t structure and scale them the way we want. However, we can increase the quality of backlinks based on several criteria like:

Anchor text relevance

Relevance and quality of a linking page

Linking domain quality

IP address

Link clicks and the linking website’s traffic

Few links on the linking webpage

The links of highest quality have relevant keywords in the anchor text and come from trustworthy websites. But again, there are no hard and fast rules on how Google evaluates backlinks. Some backlinks can still be of proper quality even if they don’t fulfill these parameters.

How often should a site audit links?

Though there’s no right or wrong way of auditing links, there are a few pointers to bear in mind when determining the frequency.

If your website has a long history of inorganic link building, it’s wise to do a monthly disavow.

In most cases, a quarterly audit is recommended. It allows webmasters to keep a website’s link profile clean and track new backlinks pointing to the site.

Links on a website that has been growing ethically and isn’t in a competitive domain can be checked half-yearly, as the risk of negative SEO is low.

Consider a website with hundreds of old, very low-traffic pages with no links (e.g. eCommerce/news). Is it worth either 301-redirecting these pages to relevant key hubs or updating the pages with backlinks to the relevant key hubs without updating the dates?

In such a case, choose the pages with the best content and update them. Set up 301 redirects for the pages you do not want your audience to see and point them to the relevant key hubs. The key term here is ‘relevant.’ The 301 redirects should point to thematically relevant hubs. Otherwise, Google will treat them as soft 404s.
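
One way to keep redirects thematically relevant is to compare each old page's topics with each hub's topics and redirect only when they overlap. The sketch below is an illustration under that assumption; the topic tags, URLs, and the `pick_redirect_target` helper are all hypothetical, and topic overlap is a simple stand-in for whatever relevance judgment an editor would make.

```python
def pick_redirect_target(page_topics, hubs):
    """Return the hub URL sharing the most topics with the page, or None."""
    best_url, best_overlap = None, 0
    for hub_url, hub_topics in hubs.items():
        overlap = len(set(page_topics) & set(hub_topics))
        if overlap > best_overlap:
            best_url, best_overlap = hub_url, overlap
    return best_url


# Hypothetical hubs and old pages, each tagged with topics.
hubs = {
    "/hubs/running-shoes": {"running", "shoes"},
    "/hubs/hiking": {"hiking", "boots"},
}
old_pages = {
    "/2014/trail-boots-review": {"hiking", "boots", "review"},
    "/2013/office-party": {"company", "news"},
}

# None means no relevant hub exists, so no 301 is planned for that page.
redirects = {
    url: pick_redirect_target(topics, hubs) for url, topics in old_pages.items()
}
```

Pages that map to `None` are candidates for updating or retiring by other means, rather than being redirected to an unrelated hub.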

Are social media backlinks any good?

Most webmasters may feel that social media backlinks are pointless, primarily because they are nofollow links that do not impact SEO. However, social signals are an important ranking factor for Google. People are constantly clicking on links they see in their newsfeeds. If you offer great content, then this can be a great advantage for you. So do not ignore social backlinks.

Can adverts impact your SEO negatively?

When you think of SEO, you generally don’t think of ads, and with good reason. Eric Hochberger, Co-Founder and CEO of full service ad management company Mediavine, explains to us the love-hate relationship between these two entities.

By definition, advertising runs counter to the goals of SEO optimization, a process which relies on publisher content and user experience. However, as an ad management company that originated as an SEO marketing firm, we work to find the perfect balance, ensuring the two can coexist. Yes, you can run high-performing ads and still rank well in search engines thanks to the right ad tech. It’s not an either-or scenario, and here’s why:

The first key feature is lazy loading. When a website employs lazy loading, ads only load on a webpage as a user scrolls to them. In other words, if a user doesn’t scroll to a certain screen view, the ads don’t exist on the page. This greatly lightens the page load. A lighter page means faster loading, which leads to better SEO.

Complying with the Coalition for Better Ads (CBA) standards is critical to SEO because the CBA is what Google uses to power its built-in Chrome Ad Filtering and its Ad Experience Report in Google Search Console (its main SEO tool). There’s a misconception that the number of ads affects SEO, but it’s actually the density. The CBA provides comprehensive insight regarding appropriate ad-to-content ratios for both mobile and desktop.

Lastly, reducing above the fold (ATF) ads, or ads that appear in the first screen view, is huge for both page speed and SEO. If an ad isn’t loading in the first screen view, the site will appear to load faster (how Google measures it), since users don’t notice when an ad loads if it’s below the fold.

Which leads me to this - you’ll often hear that SEO follows user experience. Google uses this line quite a bit, which makes sense. Ultimately, the goal of Google search results is to return the best user experience. If ads are bogging down a website, that doesn’t equal a high-quality user experience, which therefore will not generate good SEO. Do you see the pattern here?

While SEO and advertisements can coexist in a positive way, the existing resources for publishers to promote this are scarce. The solution would be a framework that works on the most popular CMS, focuses on Google’s best practices from Core Web Vitals to page experience, employs lazy loading, and reduces ATF ads.

What are SEO tools?

SEO tools help users optimize their websites for better visibility and ranking on search engines. These tools give users data about their website in terms of search engine ranking, backlinks, keywords, and much more.

Using the data analyzed through an SEO tool, a user can uncover opportunities to improve their website's ranking.

How to choose the best SEO tools for you?

Before you select the best SEO tool for yourself, you'll have to evaluate your SEO needs. If you're just starting out, it may be better to opt for a tool that's simple and easy to get started with and has beginner-friendly tutorials. But if you're a seasoned digital marketer, then an advanced tool will serve you better.

You'll want to consider the size of your business. New website owners will have different needs from small and midsized businesses and large businesses. If you have many people collaborating with the SEO tools, then a tool with solid collaboration options will be ideal.

Look out for the user interface and dashboard as well. If the data's not presented in an easy-to-consume way, then you may miss out on key insights and lose time.

Lastly, you need to consider your budget and pick a tool accordingly.

How we tested the best SEO tools

To test the best SEO tools, we considered many points, from their user interface and dashboard to the data presentation and pricing.

We evaluated what types of users the different tools would be best suited for (new website owners, small and midsized businesses, and large businesses). We checked if they had mobile apps, extensive documentation for learning, and the ability to process large amounts of data. We also looked at their pricing plans and the variety of tools they offered.

Read how we test, rate, and review products on TechRadar.

Désiré has been musing and writing about technology during a career spanning four decades. He dabbled in website builders and web hosting when DHTML and frames were in vogue and started narrating about the impact of technology on society just before the start of the Y2K hysteria at the turn of the last millennium.