Cloud control to Major Tom: NASA's space missions are going 'cloud native'

Pioneering the cloud for storing, analysing and processing big data from deep space

"Hey Curiosity, be a pal and move north 10 metres to look at that big red rock, would ya?"

If the revelation that engineers at NASA's Jet Propulsion Laboratory (JPL) use Alexa to control its Mars rovers wasn't enough, consider this – its latest missions are conducted entirely using the cloud. Since adopting AWS eight years ago, data scientists at JPL and NASA have been on their own journey into the unknown, pioneering the exploration of cloud computing in all-new ways.

Curiosity and the cloud

For the two live Mars rovers, Opportunity and Curiosity, NASA uses the cloud for mission-critical controls. The rovers' operations are controlled via applications that sit on the cloud, from downloading reports on yesterday's movements to uploading manoeuvres for the following day.

"One of the most common myths is that Mars rovers are operated by joysticks," said Khawaja Shams, Senior Manager, Software Development at AWS, but until recently a software engineer at JPL. Shams was delivering a talk called 'Inspiring Innovation in the Cloud: NASA/JPL and Beyond' at AWS re:Invent 2015 in Las Vegas.

"That would be super-nice, but since Earth and Mars are about 100 million miles apart, it takes seven to 20 minutes to give a rover an instruction … if you told it to go forward and waited for an acknowledgement, the rover might already be in a ditch somewhere," he says. The rover works semi-autonomously, with scientists sending commands up via cloud apps.
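The delay Shams describes follows from the speed of light. As a rough sketch, the one-way signal time for a given Earth-Mars distance can be worked out as below. The function name is illustrative, and the sample distance is simply the 100 million miles from the quote; the real distance changes continuously as the two planets orbit the Sun, which is why the delay varies.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # exact, by definition of the metre
KM_PER_MILE = 1.609344             # exact, international mile


def one_way_delay_minutes(distance_miles: float) -> float:
    """Return the one-way radio signal travel time in minutes."""
    distance_km = distance_miles * KM_PER_MILE
    return distance_km / SPEED_OF_LIGHT_KM_S / 60


if __name__ == "__main__":
    # 100 million miles is the figure quoted in the talk, used here as an example.
    one_way = one_way_delay_minutes(100e6)
    print(f"one way: {one_way:.1f} min, round trip: {2 * one_way:.1f} min")
```

At 100 million miles this works out to roughly nine minutes each way, so waiting for an acknowledgement would cost about 18 minutes per instruction.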

Low-cost missions

JPL's use of the cloud is also about saving money. "Landing on Mars (with Curiosity) was 100 times cheaper than nine years prior by using AWS," says Tom Soderstrom, IT Chief Technology Officer at Jet Propulsion Laboratory, about the 2012 mission, which continues to this day. "We streamed 175TB, 80,000 requests per second, it was an amazing performance," he adds. "You all saw the pictures at the same time we did."

Instant image sharing

How JPL's cloud works is as simple as it is streamlined. "The images go from the Mars rover out to the orbiters, back to the Deep Space Network then into the JPL data centres," explains Shams. "Data goes from JPL to S3 (AWS' Simple Storage Service), it's processed by EC2 (Elastic Compute Cloud, AWS' resizable cloud hosting services) and within seconds of the data arriving on Earth it gets processed, stored on S3 in JPEG formats, and is available for everyone to consume almost instantly."
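The S3-to-EC2-to-S3 step Shams outlines could look something like the sketch below. This is not JPL's code: the bucket names, the key layout and the `to_jpeg` converter are all hypothetical, and the only real API assumed is the `get_object` and `put_object` pair offered by a boto3 S3 client. A small in-memory stand-in is included so the sketch can be tried without an AWS account.

```python
import io


def process_new_image(s3, raw_bucket, key, public_bucket, to_jpeg):
    """Read one raw image from S3, convert it, and store the JPEG.

    s3 is a boto3 S3 client (or any object with the same get_object and
    put_object methods); to_jpeg is a hypothetical bytes -> bytes converter.
    """
    raw = s3.get_object(Bucket=raw_bucket, Key=key)["Body"].read()
    # Swap the file extension for .jpg (hypothetical naming scheme).
    jpeg_key = key.rsplit(".", 1)[0] + ".jpg"
    s3.put_object(
        Bucket=public_bucket,
        Key=jpeg_key,
        Body=to_jpeg(raw),
        ContentType="image/jpeg",
    )
    return jpeg_key


class InMemoryS3:
    """Tiny stand-in for a boto3 S3 client, for trying the sketch offline."""

    def __init__(self):
        self.objects = {}

    def put_object(self, Bucket, Key, Body, ContentType=None):
        self.objects[(Bucket, Key)] = Body

    def get_object(self, Bucket, Key):
        return {"Body": io.BytesIO(self.objects[(Bucket, Key)])}


if __name__ == "__main__":
    s3 = InMemoryS3()
    s3.put_object(Bucket="raw", Key="sol1000/nav_left.img", Body=b"raw-pixels")
    # A placeholder converter; a real one would encode actual JPEG data.
    print(process_new_image(s3, "raw", "sol1000/nav_left.img", "public",
                            lambda data: b"JPEG:" + data))
```

Passing the client in as an argument keeps the processing step independent of credentials and network access, which also makes it easy to run the same function on many machines at once.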

That even applies to separating stereo images taken by Curiosity (3D photos help operators give exact navigational instructions to the rover), and to the automatic stitching together of separate one-megapixel images to create vast five-gigapixel panoramas; it's all automated, and it needs to be.

"We often have less than four hours to react to information we've just gotten, so it's imperative we produce these panoramas as quickly as possible," says Shams about how images help the rovers' operators make snap decisions. He adds that it's all down to the elastic provisioning and workflow orchestration that the cloud now allows.

Data science experiments

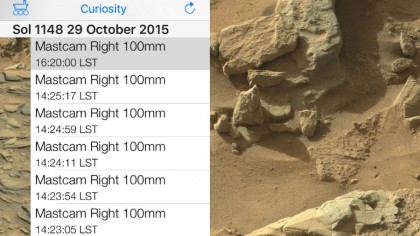

Thanks to an internal image search service – indexed inside of DynamoDB – JPL scientists can now search and query images more freely, but even this was opened up to developers. API requests can be crafted that build on top of the database, and can be pulled into third-party apps to reuse. Examples include the MSL Image Explorer and the Mars Images app for iOS, which deliver the very latest images from NASA's Mars rovers by interrogating that same internal image search archive.

"You can see the images the moment they hit Earth back from Mars," says Shams. "It's about building platforms and thriving ecosystems that allow others to build apps for once we've stopped working on them."
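For a flavour of how a third-party app might interrogate an image index held in DynamoDB, here is a minimal sketch. The article does not describe the actual table design or API, so the table name, the `rover_sol` partition key and the `url` attribute are all assumptions made for illustration. The only real call relied on is the `query` operation of a boto3 DynamoDB client, and pagination via `LastEvaluatedKey` is left out for brevity.

```python
def images_for_sol(dynamodb, table_name, rover, sol):
    """Return image URLs for one rover on one Martian day (sol).

    dynamodb is a boto3 DynamoDB client (or any object with the same
    query method). The key and attribute names are hypothetical.
    """
    response = dynamodb.query(
        TableName=table_name,
        # Assumed partition key combining rover name and sol number.
        KeyConditionExpression="rover_sol = :k",
        ExpressionAttributeValues={":k": {"S": f"{rover}#{sol}"}},
    )
    # The low-level client returns typed values such as {"S": "..."}.
    return [item["url"]["S"] for item in response["Items"]]
```

With a real client this might be called as `images_for_sol(boto3.client("dynamodb"), "mars-images", "curiosity", 1000)`, where the table name is again only a placeholder.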

Jamie is a freelance tech, travel and space journalist based in the UK. He’s been writing regularly for TechRadar since it was launched in 2008 and also writes regularly for Forbes, The Telegraph, the South China Morning Post, Sky & Telescope and the Sky At Night magazine as well as other Future titles T3, Digital Camera World, All About Space and Space.com. He also edits two of his own websites, TravGear.com and WhenIsTheNextEclipse.com, which reflect his obsession with travel gear and solar eclipse travel. He is the author of A Stargazing Program For Beginners (Springer, 2015).