Screen scraping: how to stop the internet's invisible data leeches

Automated bots are on the prowl


Data is your business's most valuable asset, so it's never a good idea to let it slip into the hands of competitors.

Sometimes, however, that can be difficult to prevent because of an automated technique known as 'screen scraping', which has for years provided a way of extracting data from web pages so that it can be indexed over time.

This poses two main problems: first, that data could be used to gain a business advantage - from undercutting prices (in the case of a price comparison website, for example) to obtaining information on product availability.

Second, persistent scraping can grind down a website's performance, which recently happened to LinkedIn when hackers used automated software to register thousands of fake accounts in a bid to extract and copy data from member profile pages.

Ashley Stephenson, CEO of Corero Network Security, explains the origins of the phenomenon, how it could be affecting your business right now, and how to defend against it.

TechRadar Pro: What is screen scraping? Can you talk us through some of the techniques, and why somebody would do it?

Ashley Stephenson: Screen scraping is a concept that was pioneered by early terminal emulation programs decades ago. It is a programmatic method to extract data from screens that are primarily designed to be viewed by humans.

Basically, the screen scraping program pretends to be a human and "reads" the screen, collecting the interesting data into lists that can be processed automatically. The most common format is name:value pairs. For example, information extracted from a travel site reservation screen might look like the following:

Origin: Boston, Destination: Atlanta, Date: 10/12/13, Flight: DL4431, Price: $650
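
To illustrate why name:value pairs are easy to process automatically, here is a minimal Python sketch that turns a record like the one above into a lookup table. The function name `parse_pairs` is made up for this example, and the sketch assumes that fields are separated by commas and that no value contains a comma itself.

```python
def parse_pairs(record):
    """Split a comma-separated 'Name: value' record into a dictionary."""
    fields = {}
    for item in record.split(","):
        # Split on the first colon only, so values may contain colons.
        name, _, value = item.partition(":")
        fields[name.strip()] = value.strip()
    return fields


# The sample record from the travel site example.
record = "Origin: Boston, Destination: Atlanta, Date: 10/12/13, Flight: DL4431, Price: $650"
flight = parse_pairs(record)
print(flight["Destination"], flight["Price"])
```

Once the data is in this form, a program can sort, filter or compare thousands of such records without a human ever looking at the screen.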

Screen scraping has evolved significantly over the years. A major historical milestone occurred when the screen scraping concept was applied to the Internet and the web crawler was invented.

Web crawlers originally "read" or screen scraped website pages and indexed the information for future reference (e.g. search). This gave rise to the search engine industry. Today web crawlers are much more sophisticated, and websites include information (tags) that is dedicated to the crawler and never intended to be read by a human.

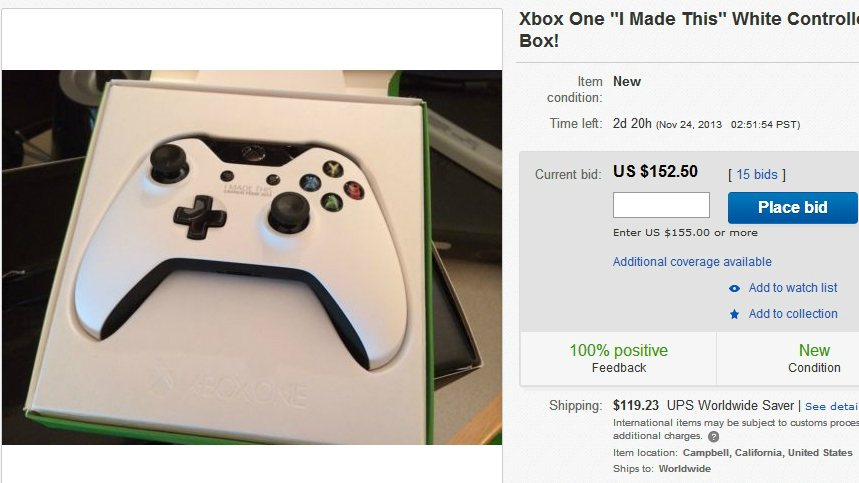

A subsequent milestone in the evolution of screen scraping was the development of e-retail screen scraping, perhaps the best-known example being the introduction of price comparison websites.

These sites employ screen scraping programs to periodically visit a list of known e-retail sites to obtain the latest price and availability information for a specific set of products or services. This information is then stored in a database and used to provide aggregated comparative views of the e-retail landscape to interested customers.
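
The collect, store and compare cycle described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the retailer names, the page markup (`<span class="name">`, `<span class="price">`, `<span class="stock">`) and the helper names `scrape` and `compare` are all hypothetical, and the pages are inline strings rather than real downloads. A real scraper would fetch each page on a schedule and cope with far messier markup.

```python
import sqlite3
from html.parser import HTMLParser

# Hypothetical page snapshots standing in for pages fetched from retailers.
PAGES = {
    "shop-a.example": '<span class="name">Widget</span><span class="price">19.99</span><span class="stock">in stock</span>',
    "shop-b.example": '<span class="name">Widget</span><span class="price">17.49</span><span class="stock">sold out</span>',
    "shop-c.example": '<span class="name">Widget</span><span class="price">18.25</span><span class="stock">in stock</span>',
}


class ProductParser(HTMLParser):
    """Collects the text of <span> elements with class name, price or stock."""

    def __init__(self):
        super().__init__()
        self.current = None
        self.fields = {}

    def handle_starttag(self, tag, attrs):
        cls = dict(attrs).get("class")
        if tag == "span" and cls in ("name", "price", "stock"):
            self.current = cls

    def handle_data(self, data):
        if self.current:
            self.fields[self.current] = data.strip()

    def handle_endtag(self, tag):
        if tag == "span":
            self.current = None


def scrape(html):
    """'Read' one page and return its name, price and stock fields."""
    parser = ProductParser()
    parser.feed(html)
    return parser.fields


# Store the latest price and availability for each site in a database.
db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE offers (site TEXT, product TEXT, price REAL, in_stock INTEGER)")
for site, html in PAGES.items():
    f = scrape(html)
    db.execute(
        "INSERT INTO offers VALUES (?, ?, ?, ?)",
        (site, f["name"], float(f["price"]), int(f["stock"] == "in stock")),
    )


def compare(product):
    """Aggregated view: in-stock offers for a product, cheapest first."""
    rows = db.execute(
        "SELECT site, price FROM offers WHERE product = ? AND in_stock = 1 ORDER BY price",
        (product,),
    )
    return rows.fetchall()


print(compare("Widget"))
```

In this toy data set the cheapest listing is sold out, so it is filtered from the comparison, which shows why availability matters as much as price to a comparison site.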

In general, the screen scraping techniques described so far have been welcomed by website operators, who want their sites to be indexed by leading search engines such as Google or Bing. Similarly, e-retailers typically want their products to be displayed on the leading comparison shopping sites.

TRP: Have there been any recent developments in competitive screen scraping?

AS: In contrast, the developments in competitive screen scraping over the past few years are not necessarily so welcome. For a site to be scraped by a search engine crawler is OK if the crawler visits are infrequent.

For a site to be the target of a price comparison site scraper is OK if the information obtained is used fairly. However, as the number of specialized search engines continues to increase and the frequency of price check visits skyrockets, these automated page views can rise to levels that impact the intended operation of the target site.

More specifically, if the target site is the victim of competitive scraping, the information obtained can be used to undermine the business of the site owner, for example by undercutting prices, beating odds, aggressively acquiring event tickets or reserving inventory.