How universal memory will replace DRAM, flash and SSDs

Next-gen memory will change the way your OS works

In about five years, flash and DRAM are going to hit their limits: we won't be able to keep growing them to the capacities we want for what we do with computers.

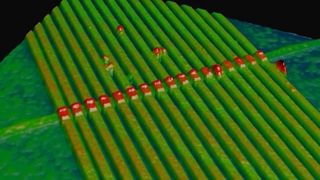

CPUs have had to gain more cores rather than just running faster, because ever-smaller transistors run into physical limits. Memory faces a similar wall: you can't get enough electrons into ever-smaller cells to reliably store the ones and zeroes that make up data, so we're going to reach a point where we can't pack memory in any more densely.

Capacities are still going up. Terabyte SSDs are in the shops, Samsung is stacking flash chips vertically, and even the tiny eXtended Capacity version of SD cards will go up to 2TB, but they'll hit the same problem of no longer being able to cram ever-more information into the same space.

The next generation of memory technologies aims to fix that. You could have hundreds of gigabytes or even a few terabytes of fast memory in your tablet or phone, and it would keep its contents when you turn the device off.

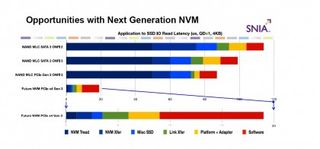

"There have been about a dozen possible technologies [in development]," says Intel's Jim Pappas, "and if at least one of them succeeds it will be the single largest change to computer architecture that has happened in decades. It's about a thousand times faster than NAND flash and about a million times faster than a hard drive." That means it can work as memory and storage (without slowing your system down the way it does when you have to swap information from fast memory to slower storage).

"What does it mean when your storage system is as fast as system memory or your memory is as vast as all your storage?" Pappas asks. For one thing, you have to think very differently about what your operating system does with memory, because it never goes away.

OS repercussions

Spencer Shepler is a file system expert at Microsoft who's looking into what persistent memory means. It's more than just making sure the kernel knows that information in memory sticks around, or even letting the memory manager make direct links to files that live in memory all the time, so they can be accessed the way they would be if they were on a disk.

You might also want to retrieve much less of a file at once: just a few bytes of information out of a 4GB file. "We're talking about accessing very small sub-segments, small blocks of data." Applications will need to be written to handle that kind of granular access instead of expecting that they'll get a whole file at once.
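One way to picture that kind of granular access on today's systems is memory mapping, where a program addresses part of a file as if it were memory. The Python sketch below is an illustration only, not anything Shepler or Microsoft describes: the helper names `read_bytes_at` and `make_demo_file` are made up for this example, and an ordinary temporary file stands in for persistent memory.

```python
import mmap
import os
import tempfile


def read_bytes_at(path, offset, length):
    """Return `length` bytes starting at `offset` without reading the whole file."""
    with open(path, "rb") as f:
        # Map the file read-only. Nothing is copied up front; the OS brings in
        # only the pages that are actually touched by the slice below.
        with mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as view:
            return view[offset:offset + length]


def make_demo_file(size=16 * 1024 * 1024, marker=b"pmem", offset=12_345_678):
    """Create a mostly empty demo file with a small marker buried inside it.

    Hypothetical helper: the size, marker and offset are arbitrary values
    chosen for the illustration.
    """
    fd, path = tempfile.mkstemp()
    with os.fdopen(fd, "wb") as f:
        f.truncate(size)   # extend the file to `size` bytes of zeroes
        f.seek(offset)
        f.write(marker)    # place a few meaningful bytes deep in the file
    return path, offset, marker
```

Calling `read_bytes_at(path, offset, 4)` on the demo file returns just the four marker bytes. The application never asks for the file as a whole, which is the change in habit the paragraph above describes.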

The operating system could do indexing far more often, because it can do it in memory without slowing you down by having to go back and forth to disk to look at files. You could have a database that's logging every transaction you make, but running as fast as if you didn't have logging turned on, because the log goes into persistent memory. Video editing would benefit particularly, Shepler believes. "You're moving large amounts of data and that's much faster."
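The logging case can be pictured with a small sketch. This is a minimal Python illustration under stated assumptions, not how any real database or persistent-memory system works: `MappedLog` is a hypothetical class, an ordinary memory-mapped file stands in for persistent memory, and `flush()` stands in for whatever mechanism real hardware would use to make a write durable.

```python
import mmap
import os
import struct

HEADER = struct.Struct("<Q")      # 8-byte tail offset kept at the start of the region
RECORD_LEN = struct.Struct("<I")  # 4-byte length prefix in front of each record


class MappedLog:
    """Append-only log kept in a fixed-size memory-mapped region (illustrative)."""

    def __init__(self, path, size=1 << 20):
        exists = os.path.exists(path) and os.path.getsize(path) == size
        self._file = open(path, "r+b" if exists else "w+b")
        if not exists:
            self._file.truncate(size)
        self._map = mmap.mmap(self._file.fileno(), size)
        if not exists:
            # A fresh log is empty: the tail points just past the header.
            HEADER.pack_into(self._map, 0, HEADER.size)

    def append(self, payload):
        (tail,) = HEADER.unpack_from(self._map, 0)
        end = tail + RECORD_LEN.size + len(payload)
        if end > len(self._map):
            raise ValueError("log region is full")
        RECORD_LEN.pack_into(self._map, tail, len(payload))
        self._map[tail + RECORD_LEN.size:end] = payload
        # Advance the tail only after the record body has been written,
        # so a reader never sees a half-written entry.
        HEADER.pack_into(self._map, 0, end)
        self._map.flush()

    def records(self):
        (tail,) = HEADER.unpack_from(self._map, 0)
        pos = HEADER.size
        found = []
        while pos < tail:
            (length,) = RECORD_LEN.unpack_from(self._map, pos)
            pos += RECORD_LEN.size
            found.append(bytes(self._map[pos:pos + length]))
            pos += length
        return found

    def close(self):
        self._map.flush()
        self._map.close()
        self._file.close()
```

Appending a transaction here is a couple of memory writes rather than a separate file write call, and reopening the same path later finds the earlier records still in place. On a disk-backed file the `flush()` is still the slow part; the promise of persistent memory in the article is that this step would run at something close to memory speed.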

But if your memory never goes away, what does it mean to do a backup? How do you check data integrity when your system restarts if parts of it are always on? What happens if you take memory out of a server and put it in another system: do you want the information to move with it, the way it does with a disk, or go away, the way it does with memory today? Maybe there are files, like password logins or bank statements you look at online, that you won't want stored in persistent memory that an attacker might get access to. And if you can't reboot to refresh a driver or get rid of a virus that's loaded into memory, how do you handle maintenance and security?

Mary started her career at Future Publishing, saw the AOL meltdown first hand the first time around when she ran the AOL UK computing channel, and she's been a freelance tech writer for over a decade. She's used every version of Windows and Office released, and every smartphone too, but she's still looking for the perfect tablet. Yes, she really does have USB earrings.
