Comparing Micron's Automata processor to GPGPU, FPGA and classic co-processors

A closer look at this new processing technology

Check out the first part of this article, where we discover more about the AP and find out what's in store for the technology in the near future, as well as its role as part of a heterogeneous system.

The Automata Processor should be considered an accelerator or co-processor that works in conjunction with a conventional CPU/NPU.

This is a characteristic shared by each of the technologies you referenced (GPGPU, FPGA and 487's), although the direct comparison with each of them is different. I'll touch on the unique aspects below.

Comparison to GPU

GPUs have a 'base' function that originated in processing 3D graphical images. CPUs were not purpose built for this function and so a great opportunity was created for a purpose built architecture that could handle acceleration of raster graphic algorithms in PCs and workstations.

The same basic situation exists for the Automata Processor. In the case of the AP, however, the algorithms which are being accelerated are related to the processing of unstructured streams of information. This is another application area where traditional CPUs are poorly matched.

GPGPUs don't displace CPUs in any application where they exist. However, they can allow systems with much lower-power processors to perform at a much higher level. In this respect, the AP and GPGPUs are similar. Now, when considering the AP vs. a GPGPU, we must consider the nature of the problems for which each architecture is best suited.

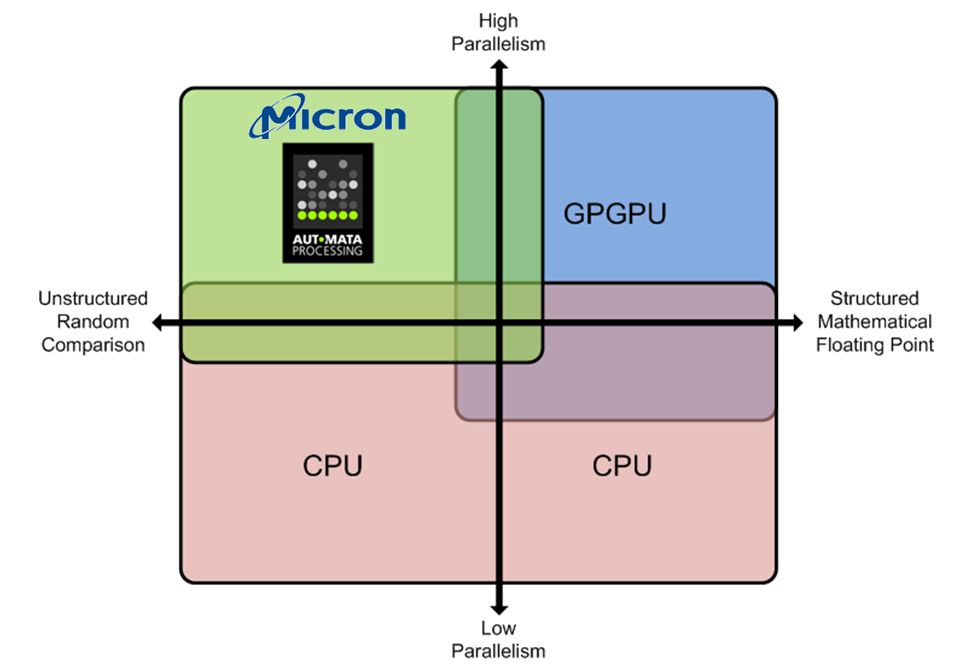

You can see that the Automata Processor is designed to address a class of problems for which neither CPUs nor GPGPUs are well suited. For problems that are based on massive parallelism and require the random, comparison-based operations often associated with applications in bioinformatics, pattern recognition, data analytics and image processing, the Automata Processor is likely a superior solution.

This can be attributed to the fact that the Automata Processor was purpose built to support the highly parallel operations intrinsic to these types of applications. Although GPGPUs are certainly more 'parallel' than CPUs, GPGPUs still fall far short of the fine grained parallelism that is found in the Automata Processor. In the AP, tens of thousands of small analysis engines (we call them STEs) are used to create individual thread execution units that are assigned to analyze a data stream.

The AP allows thousands and thousands of these thread execution units to be used to perform complex analysis of the data stream, simultaneously and in parallel. Furthermore, the AP doesn't require programmers to try to manage this level of parallelism.

Simply put, each Automaton (thread execution unit) works independently from all others and the programmer doesn't need to be concerned with traditional factors that make exploiting CPU and GPU parallelism so difficult.
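The idea of many independent automata watching one data stream can be sketched in ordinary software. The Python below is only a toy illustration, not Micron's programming model or SDK: the `Automaton` class, the `scan` function and the literal-string patterns are all assumptions made for this example, and the inner loop runs sequentially where the hardware would evaluate every automaton at once.

```python
from dataclasses import dataclass, field


@dataclass
class Automaton:
    """Hypothetical stand-in for one 'thread execution unit': matches one literal pattern."""
    pattern: str
    active: set = field(default_factory=set)   # pattern positions reached by partial matches
    hits: list = field(default_factory=list)   # stream offsets at which the pattern ended

    def step(self, symbol, offset):
        # Every partial match, plus a fresh attempt at position 0, sees the same symbol.
        candidates = self.active | {0}
        self.active = set()
        for pos in candidates:
            if self.pattern[pos] == symbol:
                if pos + 1 == len(self.pattern):
                    self.hits.append(offset)       # whole pattern matched
                else:
                    self.active.add(pos + 1)       # keep following this partial match


def scan(stream, patterns):
    automata = [Automaton(p) for p in patterns]
    for offset, symbol in enumerate(stream):
        # No automaton reads or writes another's state, so no locks or
        # synchronisation are needed. Sequential here; concurrent in hardware.
        for automaton in automata:
            automaton.step(symbol, offset)
    return {a.pattern: a.hits for a in automata}


result = scan("GATTACAGATT", ["GATT", "TAC", "CAG"])
print(result)
```

Each automaton keeps only its own state and never consults its neighbours, which is why adding more patterns in this sketch requires no coordination logic from the programmer.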

Comparison to FPGA

Both the Automata Processor and FPGAs share a very important characteristic: the ability to be reconfigured. The concept of reconfigurable computing is a highly studied branch of computer science and system-level design. By allowing hardware machines to be exactly adapted to the problem at hand, much more efficient processing systems can be developed.

It is simply a matter of fact that purpose built machines can often perform processing functions more efficiently than general purpose processors. FPGAs and the AP are significantly different, though.

FPGAs are targeted at allowing design engineers to implement large-scale Boolean logic circuits. Indeed, FPGAs can be used to implement almost any conceivable digital circuit.

Combined with the ability to be reconfigured, FPGAs are excellent choices for ASIC prototyping or for production uses when the expected volume is not sufficient to cover the development of a custom ASIC.

Although the Automata Processor is considered Turing complete, it would likely not be a good choice to use for implementation of large scale Boolean logic circuits. The Automata Processor was designed from the ground up to enable highly parallel comparison and routing operations.
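To give a feel for what "comparison and routing" could mean in practice, here is a minimal software sketch in which each element compares the current input symbol against a set of accepted symbols and, on a match, routes an enable signal to other elements for the next symbol. This is a simplified model written for illustration: the `STE` class, its fields and the `run` function are hypothetical names, not a description of Micron's hardware or toolchain.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class STE:
    """Hypothetical toy element: one comparison plus a list of routes."""
    symbols: frozenset      # symbols this element matches
    routes: tuple = ()      # indices of elements enabled when this one matches
    start: bool = False     # if True, the element is enabled on every input symbol
    report: bool = False    # if True, a match is recorded as an event


def run(stes, stream):
    reports = []
    enabled = set()
    for offset, symbol in enumerate(stream):
        # Compare: every enabled element checks the same input symbol.
        candidates = enabled | {i for i, s in enumerate(stes) if s.start}
        fired = {i for i in candidates if symbol in stes[i].symbols}
        # Route: elements that matched enable their successors for the next symbol.
        enabled = {j for i in fired for j in stes[i].routes}
        reports += [(offset, i) for i in sorted(fired) if stes[i].report]
    return reports


# An 'a', then one or more 'b' or 'c', then a 'd' (roughly the regex a[bc]+d).
network = [
    STE(frozenset("a"), routes=(1,), start=True),
    STE(frozenset("bc"), routes=(1, 2)),
    STE(frozenset("d"), report=True),
]
events = run(network, "xabcbd-ad-abd")
print(events)
```

Nothing in this model computes arbitrary Boolean functions of many inputs; the only primitives are "does this symbol match?" and "whom do I enable next?", which is a different kind of building block from the general logic cells of an FPGA.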

Désiré has been musing and writing about technology during a career spanning four decades. He dabbled in website builders and web hosting when DHTML and frames were in vogue and started narrating about the impact of technology on society just before the start of the Y2K hysteria at the turn of the last millennium.