Next-gen Nvidia Mellanox InfiniBand will take supercomputers to the next level

Exascale AI supercomputing is now within reach

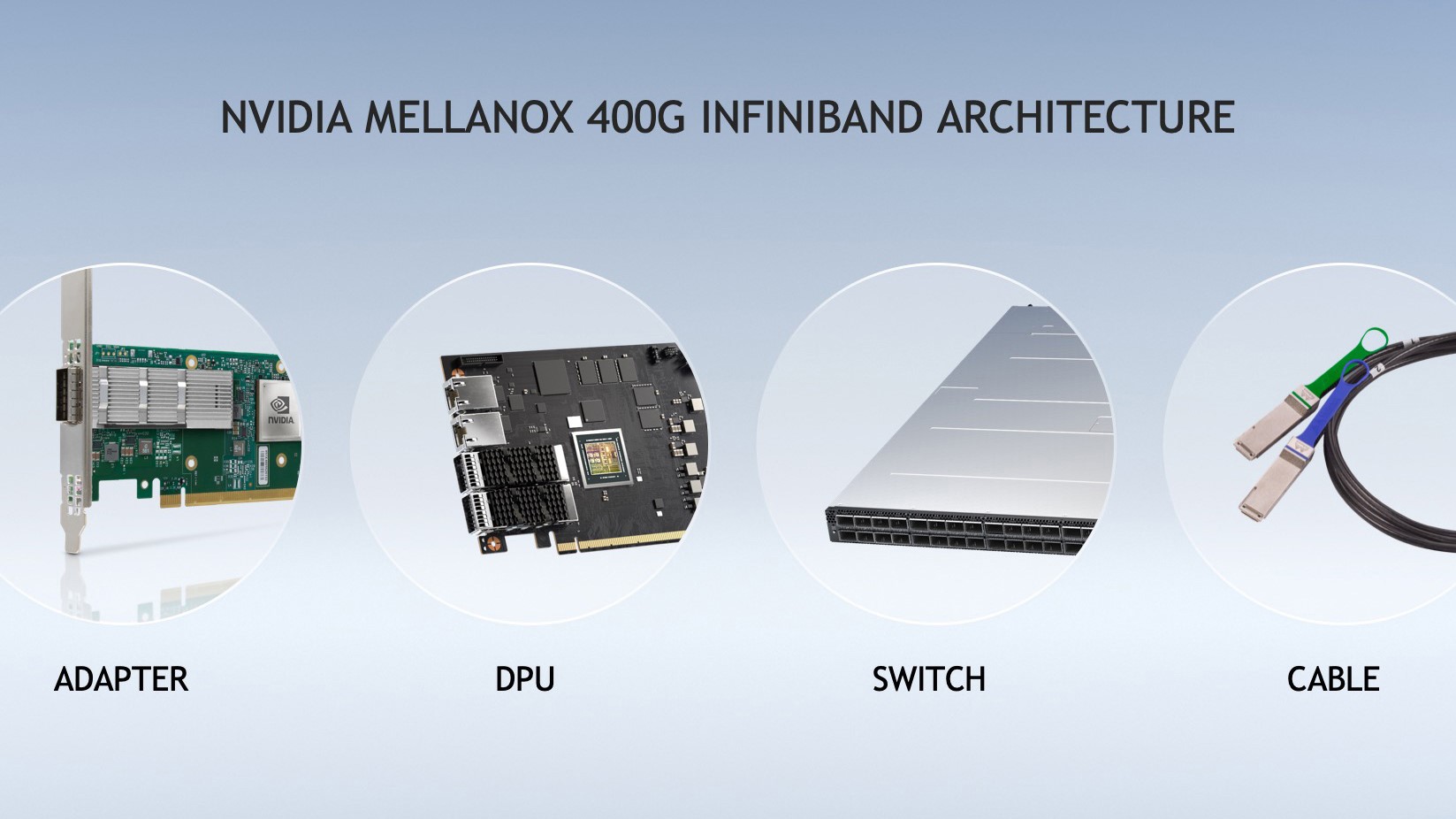

To give AI developers and scientific researchers the fastest networking performance available on their workstations, Nvidia has introduced Nvidia Mellanox 400G InfiniBand, the next generation of its InfiniBand networking platform.

Nvidia Mellanox 400G InfiniBand accelerates work in fields such as drug discovery, climate research and genomics through a dramatic leap in performance offered on the world's only fully offloadable, in-network computing platform.

The seventh generation of Mellanox InfiniBand provides users with ultra-low latency and doubles data throughput with NDR 400Gb/s, while also adding further acceleration through new Nvidia In-Network Computing engines.

The world's leading infrastructure manufacturers, including Atos, Dell Technologies, Fujitsu, Gigabyte, Inspur, Lenovo and Supermicro, plan to integrate Nvidia Mellanox 400G InfiniBand into their existing enterprise solutions and HPC offerings. At the same time, leading storage infrastructure partners such as DDN and IBM Storage will also offer extensive support.

Nvidia Mellanox 400G InfiniBand

Nvidia's latest announcement builds on Mellanox InfiniBand's lead as the industry's most robust solution for AI supercomputing. Nvidia Mellanox 400G InfiniBand offers three times the switch port density and boosts AI acceleration power by 32 times. It also raises the aggregated bi-directional throughput of switch systems fivefold to 1.64 petabits per second, which enables users to run larger workloads with fewer constraints.

Since offloading operations is crucial for AI workloads, the third-generation Nvidia Mellanox SHARP technology allows deep learning training operations to be offloaded to and accelerated by the InfiniBand network, resulting in 32 times higher AI acceleration power. By combining the Nvidia Magnum IO software stack with the Nvidia Mellanox 400G InfiniBand, AI developers and researchers can benefit from out-of-the-box accelerated scientific computing.

Edge switches based on the Mellanox InfiniBand architecture can carry an aggregated bi-directional throughput of 51.2Tb/s, with a capacity of more than 6.65bn packets per second. Modular switches based on Mellanox InfiniBand, by contrast, can carry an aggregated bi-directional throughput of up to 1.64 petabits per second, five times that of the last generation.
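For a rough sense of what those aggregate figures mean per switch, the short Python sketch below works backwards from them to an implied port count. It assumes (the article does not say so) that "aggregated bi-directional throughput" is simply the 400Gb/s NDR port rate counted in both directions and summed over every port; the function name `implied_port_count` is made up for this illustration.

```python
# Back-of-the-envelope check, not an official Nvidia calculation.
# Assumption: aggregate bi-directional throughput = ports x 2 x per-port rate.

NDR_PORT_GBPS = 400  # NDR per-port rate quoted in the article


def implied_port_count(aggregate_bidir_gbps: float,
                       port_gbps: float = NDR_PORT_GBPS) -> float:
    """Return the number of ports implied by an aggregate bi-directional figure."""
    return aggregate_bidir_gbps / (2 * port_gbps)


# Convert the article's figures to Gb/s before dividing.
edge_ports = implied_port_count(51.2 * 1_000)          # 51.2 Tb/s
modular_ports = implied_port_count(1.64 * 1_000_000)   # 1.64 Pb/s

print(f"edge switch:    ~{edge_ports:.0f} ports")     # ~64
print(f"modular switch: ~{modular_ports:.0f} ports")  # ~2050
```

Under that assumption the edge switch figure corresponds to 64 ports, and the modular figure to roughly 2,050 ports. Since 1.64 petabits per second is a rounded number, the second result should be read as approximate.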

In a press release, Gilad Shainer, SVP of networking at Nvidia, explained how Nvidia Mellanox 400G InfiniBand can aid AI developers and researchers dealing with increasingly complex applications:

“The most important work of our customers is based on AI and increasingly complex applications that demand faster, smarter, more scalable networks. The NVIDIA Mellanox 400G InfiniBand’s massive throughput and smart acceleration engines let HPC, AI and hyperscale cloud infrastructures achieve unmatched performance with less cost and complexity.”

After working with the TechRadar Pro team for the last several years, Anthony is now the security and networking editor at Tom’s Guide where he covers everything from data breaches and ransomware gangs to the best way to cover your whole home or business with Wi-Fi. When not writing, you can find him tinkering with PCs and game consoles, managing cables and upgrading his smart home.
