When will Singularity happen – and will it turn Earth into heaven or hell?

Some think that artificial brains will overtake humans in 2045

Defined as the point where computers become more intelligent than humans and where human intelligence can be digitally stored, Singularity hasn't happened yet. The idea was first theorised by mathematician John von Neumann in the 1950s. Ray Kurzweil, the 'Singularitarian Immortalist' (and Director of Engineering at Google), thinks that by 2045 machine intelligence will be infinitely more powerful than all human intelligence combined, and that technological development will be taken over by the machines.

"There will be no distinction, post-Singularity, between human and machine or between physical and virtual reality," he writes in his book 'The Singularity Is Near'. But – 2045? Are we really that close?

Hardware innovation

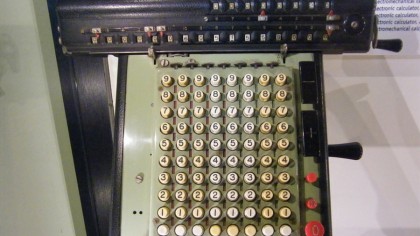

Moore's Law is popularly taken to mean that computer processing power doubles every 18 months, which works out to roughly a hundred-fold increase every decade. Is Singularity really so unbelievable? What started early in the 20th century with the development of the Monroe mechanical calculator has continued via innovations like massive parallelism (the use of multiple processors or computers to perform computations), supercomputer clusters, cloud computing, personal assistants like Siri and artificial intelligence systems like Watson and Deep Blue. The law of accelerating returns is in full swing.
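For anyone who wants to check the per-decade arithmetic, here is a minimal sketch in Python. The function name `growth_factor` and the three doubling periods are illustrative choices, not anything taken from Moore or Kurzweil. It simply compounds a steady doubling over ten years.

```python
def growth_factor(years: float, doubling_months: float) -> float:
    """Return the multiplicative growth after `years` of steady doubling.

    `doubling_months` is an assumed, constant doubling period; real
    hardware progress is not this regular.
    """
    doublings = years * 12 / doubling_months
    return 2 ** doublings


if __name__ == "__main__":
    # Compare three commonly quoted doubling periods over one decade.
    for months in (12, 18, 24):
        factor = growth_factor(10, months)
        print(f"doubling every {months} months: about {factor:,.0f}x per decade")
```

Doubling every 18 months compounds to about a hundred-fold per decade. A thousand-fold gain would need a doubling every 12 months, while a two-year doubling period gives about 32-fold.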

What is cognitive computing?

We're already in the era of cognitive hardware and brain-inspired architecture. IBM's latest cognitive chip, the postage stamp-sized SyNAPSE, is a new kind of computer that eschews maths and logic for more humanlike skills such as recognising images and patterns, the latter crucial for understanding human conversations.

It's powered by one million neurons, 256 million synapses and 5.4 billion transistors, and has an on-chip network of 4,096 neurosynaptic cores. It's a low-power supercomputer that only operates when it needs to and, crucially, has sensory capabilities – it's aware of its surroundings. This digital 'brain' is the latest step in artificial intelligence that could be used in robots, futuristic driverless cars, drones, digital doctors and all kinds of responsive infrastructure.

But aren't there innately human skills, such as being able to tell when someone is lying? A study last March by the University of California San Diego and the University of Toronto found that a computer can spot faked facial expressions of pain better than people can.

"The computer system managed to detect distinctive dynamic features of facial expressions that people missed," says Marian Bartlett, research professor at UC San Diego's Institute for Neural Computation and lead author of the study. "Human observers just aren't very good at telling real from faked expressions of pain."

What does Singularity have to do with artificial intelligence?

"Artificial intelligence only gets better, it never gets worse," says Dr Kevin Curran, IEEE Technical Expert and group leader for the Ambient Intelligence Research Group at University of Ulster. "Computational Intelligence techniques simply keep on becoming more accurate and faster due to giant leaps in processor speeds."

However, AI is only one piece of the jigsaw. "Artificial intelligence refers more narrowly to a branch of computer science that had its heyday in the 90s," says Sean Owen, Director of Data Science at Cloudera, who distinguishes classic AI topics such as game-playing, expert systems, robotics and computer vision from machine learning, which has most of the focus today. "I do think the classic topics of AI are making a comeback, especially robotics."

"AI technologies like Siri, Watson, and Deep Blue are a step towards Singularity," says Owen. "If Singularity is about technology making qualitative change in how we live, there is evidence that some basic sci-fi has already landed – for example you can talk to your phone or car."

The Turing Test

Although it's important not to confuse AI with Singularity, AI will play a decisive role in machines learning new tasks and becoming more humanlike. Cue the famous Turing Test, as set out by Alan Turing, the pioneering British computer scientist and code-breaker.

"The Turing Test is a test of a machine's ability to exhibit intelligent behaviour equivalent to, or indistinguishable from, that of an actual human," says Curran. "In the original illustrative example, a human judge engages in a natural language conversation with a human and a machine designed to generate performance indistinguishable from that of a human being. All participants are separated from one another. If the judge cannot reliably tell the machine from the human, the machine is said to have passed the test."

The Turing Test does not directly test whether the computer behaves intelligently, but only whether the computer behaves like a human being. "Since human behaviour and intelligent behaviour are not exactly the same thing, the test can fail to accurately measure intelligence," says Curran. Nothing has yet passed the Turing Test.

Jamie is a freelance tech, travel and space journalist based in the UK. He's been writing regularly for TechRadar since it was launched in 2008 and also writes regularly for Forbes, The Telegraph, the South China Morning Post, Sky & Telescope and the Sky At Night magazine, as well as other Future titles T3, Digital Camera World, All About Space and Space.com. He also edits two of his own websites, TravGear.com and WhenIsTheNextEclipse.com, which reflect his obsession with travel gear and solar eclipse travel. He is the author of A Stargazing Program For Beginners (Springer, 2015),