TechRadar Verdict

The SuperFlex MicroBlade is a very competent and competitive product with plenty to offer buyers building server consolidation, web hosting, HPC and private cloud platforms.

Pros

- Latest Xeon E5-2600 v3 processors plus DDR4 memory
- Modular scalability and redundancy options
- Integrated 10/40GbE switch modules with SDN

Cons

- Limited storage within the MicroBlade enclosure
- Unable to support 16/18 core Xeon v3 processors
- Multiple management interfaces

Like other blade server platforms, the concept behind the Boston SuperFlex MicroBlade is an easy one. Take a bunch of servers (in this case up to 28 Intel Xeon dual-socket servers) and, instead of putting each inside a separate 1U rack-mount chassis, fix them onto hot-swap 'sleds', then slide those sleds into a compact enclosure (here it's just 6U high) to save massively on rack space.
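The space saving is easy to put into numbers. The short Python sketch below compares a full 6U, 28-node enclosure with 28 separate 1U servers. The 42U rack height is an assumption chosen for illustration, not a figure from the review, and the function names are hypothetical.

```python
# Rack-density comparison using the figures quoted above.
ENCLOSURE_HEIGHT_U = 6      # MicroBlade enclosure height (from the review)
NODES_PER_ENCLOSURE = 28    # maximum dual-socket nodes per enclosure (from the review)
RACK_HEIGHT_U = 42          # assumption: a common full-height rack


def rack_units_for_1u_servers(servers: int) -> int:
    """Rack units needed if every server sits in its own 1U chassis."""
    return servers * 1


def servers_per_rack_blade(rack_height_u: int = RACK_HEIGHT_U) -> int:
    """Servers that fit if the rack holds only whole, fully populated enclosures."""
    enclosures = rack_height_u // ENCLOSURE_HEIGHT_U
    return enclosures * NODES_PER_ENCLOSURE


saved_u = rack_units_for_1u_servers(NODES_PER_ENCLOSURE) - ENCLOSURE_HEIGHT_U
print(f"Rack units saved per full enclosure: {saved_u}U")
print(f"Servers per {RACK_HEIGHT_U}U rack: "
      f"{servers_per_rack_blade()} (blade) vs {RACK_HEIGHT_U} (1U)")
```

This is a best-case figure: it ignores rack space taken by other equipment, as well as any power or cooling limits that might stop a rack being filled with enclosures.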

Space saving isn't the only benefit, though. There are lots of other features aimed at buyers looking to consolidate their existing server estate, build a private cloud or host other compute-intensive applications.

Design

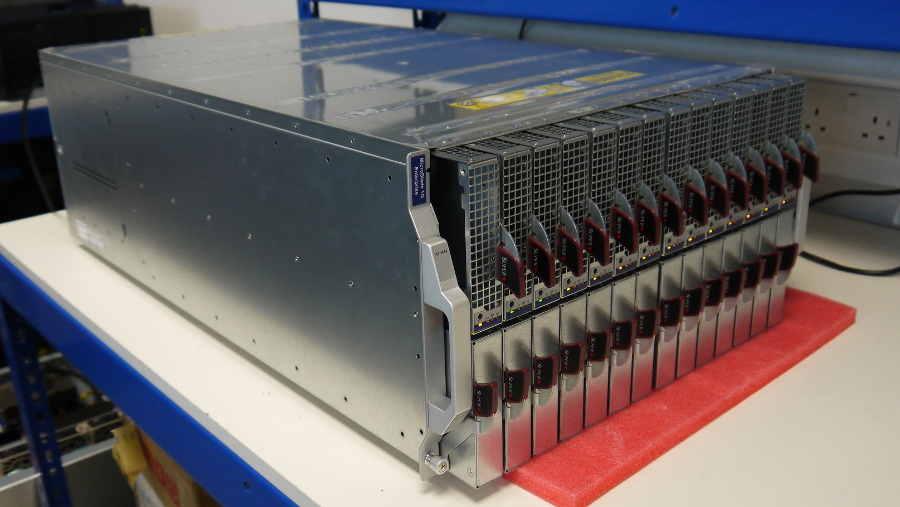

Based on SuperMicro hardware, the Boston MicroBlade is an impressive piece of kit, housed in a massively engineered all-metal chassis which, even when empty, requires two people to lift into place in a standard 19-inch rack.

As you can see from the photographs, we tested the system on a bench. The server sleds slide in from the front, in two rows of fourteen, and engage with an active backplane that does away with the need for individual power and networking cables to each node.

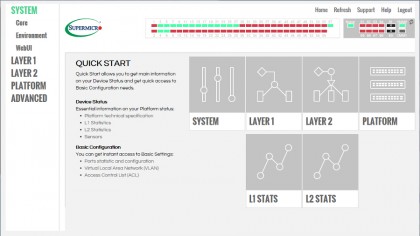

A custom Chassis Management Module (CMM) slides in at the rear to enable remote management of both the enclosure and its nodes. In fact there's room for two CMMs for extra redundancy plus additional modules to provide network connectivity, which we'll talk about shortly.

The power supplies, similarly, slide into slots at the back of the enclosure with space for up to eight 1600 Watt PSUs arranged in an N+1 or N+N configuration. Ours had just four PSUs plus four additional fan-only assemblies, giving eight fans altogether to look after chassis cooling.
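To see what the two redundancy schemes mean for usable power, here is a minimal sketch based on the 1600 Watt rating quoted above. It assumes N+1 holds one PSU in reserve and N+N holds half of them in reserve, and it works from nameplate ratings only; the `usable_watts` helper is hypothetical and does not model how the chassis firmware actually budgets power.

```python
PSU_WATTS = 1600  # per-PSU rating quoted in the review


def usable_watts(installed: int, scheme: str) -> int:
    """Nameplate power available to the load with the reserve PSUs set aside.

    "N+1": one PSU is held in reserve.
    "N+N": half of the PSUs are held in reserve (needs an even count).
    """
    if scheme == "N+1":
        if installed < 2:
            raise ValueError("N+1 needs at least two PSUs")
        return (installed - 1) * PSU_WATTS
    if scheme == "N+N":
        if installed < 2 or installed % 2:
            raise ValueError("N+N needs an even number of PSUs")
        return (installed // 2) * PSU_WATTS
    raise ValueError(f"unknown scheme: {scheme}")


# Four PSUs as in the review system, eight as in a fully equipped enclosure.
for count in (4, 8):
    for scheme in ("N+1", "N+N"):
        print(f"{count} PSUs, {scheme}: {usable_watts(count, scheme)} W")
```

On these assumptions the four-PSU review system would have 4800 W available under N+1 and 3200 W under N+N, before allowing for PSU efficiency or derating.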

Of course, all those fans can get quite noisy, especially at power up or when swapping one of the server sleds, when they make a jet-like whoosh as they ramp up to full speed. Still, that's not unusual on hardware of this type, which is typically destined to be locked away in a (hopefully) soundproof machine room.

Processors to burn

It's important to understand that each sled (node) within the SuperFlex MicroBlade is a self-contained server in its own right, holding a custom SuperMicro motherboard with two sockets on board for the latest Haswell-based Intel Xeon E5-2600 v3 processors.

Unfortunately power and cooling requirements limit the SuperFlex MicroBlade to processors with up to 14 cores and a TDP of 120 Watt. However, that still leaves a lot of scope, with the review system featuring more 'modest' 6-core E5-2620 v3 processors, with a relatively low 85 Watt TDP.

Up to 128GB of DDR4 memory can also be accommodated in the eight DIMM slots on each server node, with just 64GB fitted on ours, along with a single 250GB SATA hard disk per node plus space for another disk, if wanted. A small flash memory module, a SATA DOM (Disk on Module), can also be fitted to, for example, boot straight into a suitable hypervisor.
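Those per-node figures add up quickly across an enclosure. The sketch below totals physical cores and memory for two cases: the 14 nodes of the review system as configured, and a hypothetical fully populated enclosure of 28 nodes at the stated 14-core and 128GB limits. The `NodeConfig` class and `enclosure_totals` function are illustrative names only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NodeConfig:
    sockets: int
    cores_per_socket: int
    memory_gb: int


def enclosure_totals(node: NodeConfig, nodes: int) -> tuple[int, int]:
    """Return (physical cores, memory in GB) for `nodes` identical nodes."""
    cores = nodes * node.sockets * node.cores_per_socket
    memory_gb = nodes * node.memory_gb
    return cores, memory_gb


# Review system: 6-core E5-2620 v3 processors and 64GB per node.
review = NodeConfig(sockets=2, cores_per_socket=6, memory_gb=64)
# Stated limits: 14 cores per processor and 128GB per node.
maximum = NodeConfig(sockets=2, cores_per_socket=14, memory_gb=128)

print("Review system (14 nodes):", enclosure_totals(review, 14))
print("Fully populated (28 nodes):", enclosure_totals(maximum, 28))
```

The totals count physical cores only, so the number of logical processors seen by an operating system with Hyper-Threading enabled would be higher.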

Exactly how many servers you need will depend on application requirements and budget. The review system had only 14 server nodes configured which, with the associated power, management and networking modules, came to £37,200 ex VAT (around $54,350, or AU$71,800). That's a mind-boggling figure for those more used to buying notebook computers, but remarkably affordable in the data centre world, especially when compared to other blade server platforms and more conventional rack alternatives.
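For a rough sense of scale, the quoted price can be divided across the 14 nodes. The sketch below does that and also derives the exchange rate implied by the two quoted figures; it uses only numbers from the review, and the variable names are illustrative.

```python
PRICE_GBP = 37_200   # ex VAT, as quoted in the review
PRICE_USD = 54_350   # approximate conversion quoted in the review
NODES = 14           # server nodes in the review system

price_per_node_gbp = PRICE_GBP / NODES
implied_usd_per_gbp = PRICE_USD / PRICE_GBP

print(f"Per node: £{price_per_node_gbp:,.2f} ex VAT")
print(f"Implied exchange rate: {implied_usd_per_gbp:.3f} USD per GBP")
```

Bear in mind that the per-node figure includes each node's share of the enclosure, power supplies, management and switch modules, so it is not the price of adding one more sled.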

Flexible connections

Networking is another strong point of this product, with lots of options to be had. These start with the server motherboards, which can be equipped with 10GbE controllers, although those we tested came with four Gigabit networking ports each. Rather than have their own ports and a spaghetti mess of cables, however, these connect via the enclosure backplane direct to Ethernet switch modules located at the rear of the chassis, making for a much less cluttered setup with far fewer cables.

Of course some cables are still needed and, as the MicroBlade equivalent of conventional top-of-rack aggregation switches, each switch module can, in turn, be cabled to the rest of the network via either eight 10GbE or two 40GbE uplinks, using SFP+ modules to provide the necessary interfaces. A single Gigabit uplink and out-of-band management port are also to be found on each of the switch modules.

Moreover, the switches in the SuperFlex MicroBlade also support Software Defined Networking (SDN) using the OpenFlow protocol, enabling flexible management of all the available ports and L2 networking features via either the management software provided or a compatible management platform.

With just 14 servers, the review system had only two switch modules fitted, although four can be installed to handle the networking load on a fully populated enclosure.